Intro

Couchbase Kafka Connector 1.2.0 just shipped. Along with the various bug fixes, there is new sample code for a Kafka consumer in addition to the Kafka producer that was previously available. To quickly review the terms:

- A Kafka producer writes data to Kafka, so it’s a source of messages from Kafka’s perspective.

- A consumer in Kafka terminology is a process that subscribes to topics and then does something with the feed of published messages that are emitted from a Kafka cluster. It’s basically a sink.

In this post, you’ll get up and running with a “Hello World!”-style sample Kafka consumer that writes to Couchbase. Along the way, you’ll also get a sandbox environment with a Kafka broker and a single-node Couchbase Server so that you can actually run and modify the sample consumer and producer.

Installing Prerequisites

The samples are part of the Couchbase Kafka Connector source tree. To get them, just clone the whole repository:

    $ git clone https://github.com/couchbase/couchbase-kafka-connector.git /tmp/kafka-connector

Now, let’s set up your testing environment using preconfigured Kafka and Couchbase Server images. You have to install Vagrant, VirtualBox, and Ansible to set them up locally. If you already have Kafka and Couchbase Server running somewhere else, adjust the host addresses throughout this guide accordingly.

    $ cd /tmp/kafka-connector/env

Check versions of dependencies:

    $ ansible --version
    $ vboxmanage --version
    $ vagrant -v

You can assign human-readable names to the boxes by using the vagrant-hostsupdater plugin for Vagrant. If you don’t already have it installed, use the following command:

    $ vagrant plugin install vagrant-hostsupdater

Now you’re ready to provision the servers and get running:

    $ vagrant up

Note: If a server fails to install due to timeouts, retry “vagrant up” after a few minutes and it may work.

Verify that the hosts are responding:

    $ ping couchbase1.vagrant
    $ ping kafka1.vagrant

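If you navigate to the Couchbase Server Admin UI on couchbase1.vagrant (the web console listens on port 8091 by default), you should be able to see your single-node Couchbase Server configured with credentials Administrator/password.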

Building the Samples

To avoid any classpath issues, use Maven to create a self-contained JAR file for each sample application.

The generator application is a minimal CLI application. It uses the Couchbase Java SDK to wrap input lines from STDIN into JSON documents and sends them to the “default” bucket on Couchbase Server:

    $ cd /tmp/kafka-connector/samples/generator
    $ mvn assembly:assembly

The producer attaches to Couchbase Server and transmits all mutations to Kafka. This application uses the couchbase-kafka-connector project behind the scenes.

    $ cd /tmp/kafka-connector/samples/producer
    $ mvn assembly:assembly

The consumer is a typical Kafka consumer which, by default, just prints every incoming message in the topic “default” to STDOUT.

    $ cd /tmp/kafka-connector/samples/consumer
    $ mvn assembly:assembly

Running the Samples

Now that you have everything prepared, it’s time to run all the samples. You’ll need three different shell sessions because each of them runs a process until stopped. We’ll assume that you are in the /tmp/kafka-connector/samples directory.

First, start your generator:

    $ java -jar generator/target/kafka-samples-generator-1.0-SNAPSHOT-jar-with-dependencies.jar

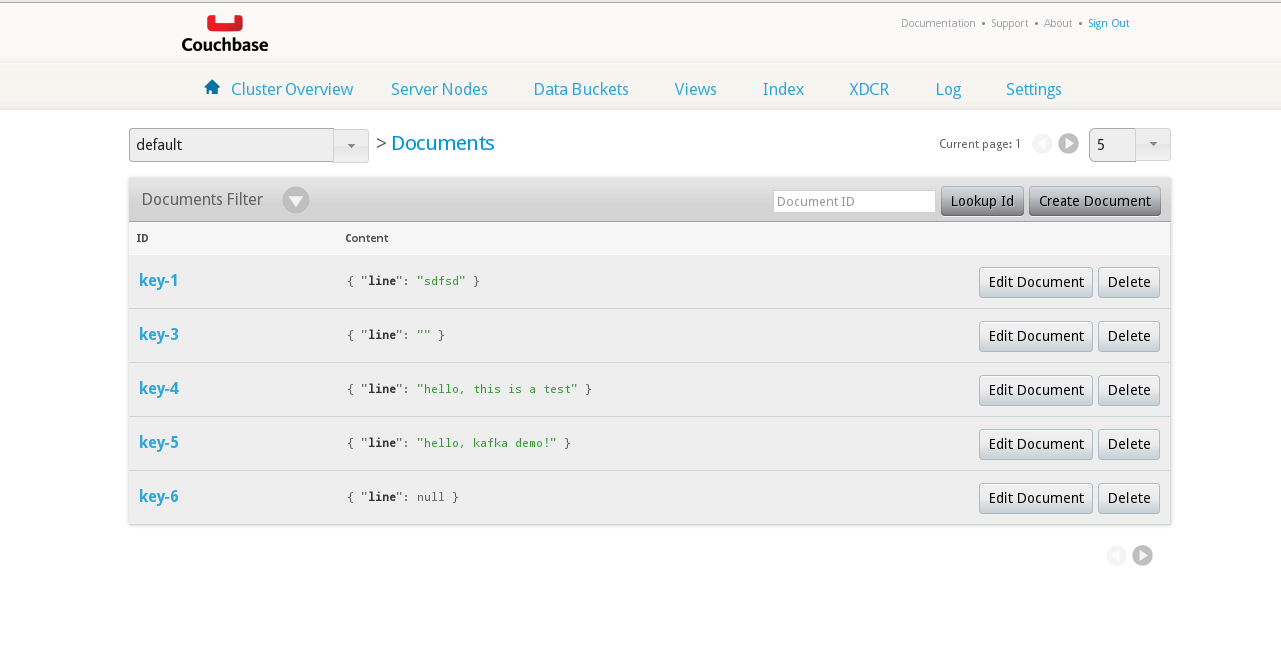

It should output the connection settings and then drop to a command prompt (>). You can type anything there and verify that the documents are being created properly by looking in the Couchbase Server Admin UI:

Documents from generator in the bucket

    ...
    INFO: Opened bucket default
    > hello, kafka demo!
    >> key=key-5, value={"line":"hello, kafka demo!"}

At this point, you can run the connector example (the producer):

    $ java -jar producer/target/kafka-samples-producer-1.0-SNAPSHOT-jar-with-dependencies.jar

For every line you type in the generator, you will see a line from the producer like this:

    RECEIVED: com.couchbase.kafka.DCPEvent@4e44cb88

The sample prints this line in the filter class implementation, just before sending the payload to Kafka. Let’s check how Kafka receives these messages:

    $ java -jar consumer/target/kafka-samples-consumer-1.0-SNAPSHOT-jar-with-dependencies.jar
    1: {"line":"hello, kafka demo!"}
    2: {"line":"hello, this is a test"}

You can continue playing with it as long as all three services are running.

Developing with Couchbase Kafka Connector

Let’s move on and take a look at the code. All three applications are easy to experiment with. For example, the generator fits in just a few lines:

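    import java.io.BufferedReader;
    import java.io.IOException;
    import java.io.InputStreamReader;
    import java.util.Random;

    import com.couchbase.client.java.Bucket;
    import com.couchbase.client.java.Cluster;
    import com.couchbase.client.java.CouchbaseCluster;
    import com.couchbase.client.java.document.JsonDocument;
    import com.couchbase.client.java.document.json.JsonObject;

    public class Example {
        public static void main(String[] args) throws IOException {
            Random random = new Random();
            // Connect to the sandbox node and open the "default" bucket.
            Cluster cluster = CouchbaseCluster.create("couchbase1.vagrant");
            Bucket bucket = cluster.openBucket();
            BufferedReader input = new BufferedReader(new InputStreamReader(System.in));
            String line;
            do {
                System.out.print("> ");
                line = input.readLine();
                if (line == null) {
                    break; // end of input (for example, Ctrl-D)
                }
                // Only ten distinct keys are used, so documents get overwritten.
                String key = "key-" + random.nextInt(10);
                JsonObject value = JsonObject.create().put("line", line);
                bucket.upsert(JsonDocument.create(key, value));
                System.out.printf(">> key=%s, value=%s\n", key, value);
            } while (true);
        }
    }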

Basically, the generator opens a connection to the “default” bucket on your “couchbase1.vagrant” instance and writes your messages to random keys. You can extend it to send other types of events. Another thing you might want to try is removing keys.
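If you want to try removing keys, one possible approach (an assumption on my part, not something the sample does) is to treat input lines of the form `rm <key>` as removals. The sketch below only covers the parsing step and runs on the plain JDK; `GeneratorCommand` is a hypothetical helper name. In the generator loop, a REMOVE would map to the SDK’s `bucket.remove(key)` and an UPSERT to the existing `bucket.upsert(...)` call.

```java
/**
 * Hypothetical helper for the generator loop: decides whether an input line
 * should upsert a document or remove a key. Not part of the sample sources.
 */
final class GeneratorCommand {
    enum Action { UPSERT, REMOVE }

    final Action action;
    final String argument; // document key for REMOVE, raw input line for UPSERT

    private GeneratorCommand(Action action, String argument) {
        this.action = action;
        this.argument = argument;
    }

    /** Lines of the form "rm <key>" become removals; anything else is an upsert. */
    static GeneratorCommand parse(String line) {
        String trimmed = line.trim();
        if (trimmed.startsWith("rm ")) {
            String key = trimmed.substring(3).trim();
            if (!key.isEmpty()) {
                return new GeneratorCommand(Action.REMOVE, key);
            }
        }
        return new GeneratorCommand(Action.UPSERT, line);
    }

    public static void main(String[] args) {
        GeneratorCommand remove = parse("rm key-5");
        GeneratorCommand upsert = parse("hello, kafka demo!");
        System.out.println(remove.action + " " + remove.argument);
        System.out.println(upsert.action + " " + upsert.argument);
    }
}
```

Keeping the parsing separate from the bucket calls makes it easy to add more commands later without touching the connection code.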

By default, the Couchbase Kafka Connector runs in server mode, where it borrows the current thread and actively listens to Couchbase Server for new events. There are several points where you can apply your own ideas or changes. The most obvious one is the configuration builder, where you not only specify the credentials and addresses of the services you are connecting to, but can also specify various serializer and filter classes.

The sample application implements several of them. The filter class is the simplest:

    public class SampleFilter implements Filter {

        @Override
        public boolean pass(DCPEvent dcpEvent) {
            System.out.println("RECEIVED: " + dcpEvent);
            return true;
        }
    }

Here you can put in any custom checks you want, and if pass() returns false, the connector discards the message and won’t send it on to Kafka.
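As a rough sketch of such a custom check, the snippet below lets through only events whose key starts with a given prefix. It runs on the plain JDK, so `SketchEvent` and `SketchFilter` are hypothetical stand-ins for the connector’s DCPEvent and Filter; a real filter would implement the connector’s interface and read the key from the DCP message instead.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/**
 * Stand-in types that mirror the shape of the connector's filter hook.
 * SketchEvent and SketchFilter are hypothetical and exist only for this sketch.
 */
final class FilterSketch {
    static final class SketchEvent {
        final String key;
        final String content;

        SketchEvent(String key, String content) {
            this.key = key;
            this.content = content;
        }
    }

    interface SketchFilter {
        boolean pass(SketchEvent event);
    }

    /** Builds a filter that passes only events whose key starts with the prefix. */
    static SketchFilter keyPrefix(final String prefix) {
        return event -> event.key.startsWith(prefix);
    }

    /** Returns the events the filter lets through, in their original order. */
    static List<SketchEvent> apply(SketchFilter filter, List<SketchEvent> events) {
        List<SketchEvent> passed = new ArrayList<>();
        for (SketchEvent event : events) {
            if (filter.pass(event)) {
                passed.add(event);
            }
        }
        return passed;
    }

    public static void main(String[] args) {
        List<SketchEvent> events = Arrays.asList(
                new SketchEvent("key-1", "{\"line\":\"keep me\"}"),
                new SketchEvent("tmp-7", "{\"line\":\"drop me\"}"));
        for (SketchEvent event : apply(keyPrefix("key-"), events)) {
            System.out.println("PASSED: " + event.key);
        }
    }
}
```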

The default encoder, which comes with the connector distribution, tries to represent every message as JSON. That is probably not what you need, so you can apply any conversion to the DCPEvent instance and return a byte array, which will be stored in Kafka. In this example, we just convert events to their string representation.

    public class SampleEncoder extends AbstractEncoder {
        public SampleEncoder(final VerifiableProperties properties) {
            super(properties);
        }

        @Override
        public byte[] toBytes(final DCPEvent dcpEvent) {
            if (dcpEvent.message() instanceof MutationMessage) {
                MutationMessage message = (MutationMessage) dcpEvent.message();
                return message.content().toString(CharsetUtil.UTF_8).getBytes();
            } else {
                return dcpEvent.message().toString().getBytes();
            }
        }
    }

A more advanced setting is the StateSerializer interface. By implementing it, you control how the library tracks stream cursors (i.e., the sequence numbers for every partition inside Couchbase Server) and whether it resumes from them after a connector restart. The distribution includes a ZooKeeper implementation of the state serializer. In the sample, we’ve implemented NullStateSerializer, which doesn’t persist anything but does show a minimal implementation.

    public class NullStateSerializer implements StateSerializer {

        public NullStateSerializer(final CouchbaseKafkaEnvironment environment) {
        }

        @Override
        public void dump(BucketStreamAggregatorState aggregatorState) {
        }

        @Override
        public void dump(BucketStreamAggregatorState aggregatorState, short partition) {
        }

        @Override
        public BucketStreamAggregatorState load(BucketStreamAggregatorState aggregatorState) {
            return new BucketStreamAggregatorState(aggregatorState.name());
        }

        @Override
        public BucketStreamState load(BucketStreamAggregatorState aggregatorState, short partition) {
            return new BucketStreamState(partition, 0, 0, 0xffffffff, 0, 0xffffffff);
        }
    }
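To make the cursor-tracking idea a bit more concrete without pulling in the connector, here is a small JDK-only sketch that saves one sequence number per partition in a properties file and reads it back after a “restart”. `FileCursorStore` and its `dump`/`load` methods are hypothetical names that only echo the shape of StateSerializer; this is not the connector’s interface, and a real implementation would work with the BucketStreamState objects shown above.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Properties;

/** Hypothetical file-backed store for per-partition sequence numbers. */
final class FileCursorStore {
    private final Path file;
    private final Properties cursors = new Properties();

    /** Loads previously saved cursors if the file already exists. */
    FileCursorStore(Path file) {
        this.file = file;
        if (Files.exists(file)) {
            try (InputStream in = Files.newInputStream(file)) {
                cursors.load(in);
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        }
    }

    /** Records the last processed sequence number and rewrites the file. */
    void dump(short partition, long sequenceNumber) {
        cursors.setProperty(Short.toString(partition), Long.toString(sequenceNumber));
        try (OutputStream out = Files.newOutputStream(file)) {
            cursors.store(out, "partition = last sequence number");
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Returns the saved sequence number, or 0 to start from the beginning. */
    long load(short partition) {
        return Long.parseLong(cursors.getProperty(Short.toString(partition), "0"));
    }

    public static void main(String[] args) throws IOException {
        Path file = Files.createTempFile("cursors", ".properties");
        new FileCursorStore(file).dump((short) 0, 42L);
        // A second instance stands in for a restarted process.
        FileCursorStore restarted = new FileCursorStore(file);
        System.out.println(restarted.load((short) 0)); // prints 42
        System.out.println(restarted.load((short) 1)); // prints 0
        Files.delete(file);
    }
}
```

Rewriting the whole file on every dump is the simplest thing that works for a demo; anything busier would want batching or an external store.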

The last component of your demo cluster is the Kafka consumer, which is a pretty typical example of one. It consists of two parts: AbstractConsumer, which implements bootstrapping and positioning on the Kafka topic, and PrintConsumer, which carries your “business logic”; here it just prints every message that AbstractConsumer passes to it:

    public class PrintConsumer extends AbstractConsumer {

        public PrintConsumer(String[] seedBrokers, int port) {
            super(seedBrokers, port);
        }

        public PrintConsumer(String seedBroker, int port) {
            super(seedBroker, port);
        }

        @Override
        public void handleMessage(long offset, byte[] bytes) {
            System.out.println(String.valueOf(offset) + ": " + new String(bytes));
        }
    }

As in the other examples here, you can play around with modifying the sample consumer. You can even close the circuit by sending everything back to Couchbase Server. Kafka is distributed software, just like Couchbase Server, so keep that in mind when running on your own cluster and adjust the main() function accordingly. In our sample, we have only a single partition (partition 0) in Kafka, so our main() looks like this:

    public class Example {
        public static void main(String[] args) {
            PrintConsumer example = new PrintConsumer("kafka1.vagrant", 9092);
            example.run("default", 0);
        }
    }

Of course, in a production cluster you’ll be running more than one partition.
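One way to adapt main() for several partitions, sketched here under my own assumptions, is to start one consumer per partition, each on its own thread. The code below is JDK-only: `PartitionConsumer` is a hypothetical stand-in that only mirrors the `run(topic, partition)` call used by the sample, and the number of partitions is something you would have to know for your topic.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.function.Supplier;

/** Hypothetical launcher that runs one consumer per Kafka partition. */
final class MultiPartitionSketch {
    /** Stand-in for a consumer exposing run(topic, partition), like the sample's. */
    interface PartitionConsumer {
        void run(String topic, int partition);
    }

    /**
     * Starts one consumer per partition on its own thread and returns a future
     * that completes once every consumer has returned from run().
     */
    static CompletableFuture<Void> runAll(Supplier<PartitionConsumer> factory,
                                          String topic, int partitions) {
        ExecutorService pool = Executors.newFixedThreadPool(partitions);
        List<CompletableFuture<Void>> running = new ArrayList<>();
        for (int p = 0; p < partitions; p++) {
            final int partition = p;
            final PartitionConsumer consumer = factory.get();
            running.add(CompletableFuture.runAsync(() -> consumer.run(topic, partition), pool));
        }
        pool.shutdown(); // accept no new tasks; already submitted consumers keep running
        return CompletableFuture.allOf(running.toArray(new CompletableFuture[0]));
    }

    public static void main(String[] args) {
        // Each stand-in consumer just reports which partition it was given.
        runAll(() -> (topic, partition) ->
                System.out.println("consuming " + topic + "/" + partition), "default", 3)
            .join();
    }
}
```

With the real sample, the supplier would create a new PrintConsumer for each partition; since those consumers run until stopped, the returned future would simply stay pending while they work.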

Conclusion

I hope this helps you get off to a good start with Couchbase and Kafka. Cheers!