
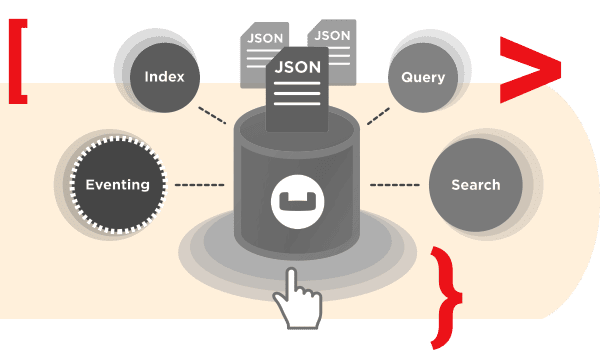

The Eventing Service abstracts away infrastructure concerns, allowing developers to focus solely on the business logic that handles data changes. Typical use cases include enriching documents, cascading deletes, generating threshold-based alerts, propagating data changes inside a database, and interacting with external REST endpoints.
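To make two of these use cases concrete, here is a minimal sketch of a threshold-based alert and a cascading delete. It assumes the `OnUpdate(doc, meta)` and `OnDelete(meta, options)` handler entry points used by Couchbase Eventing functions, and that bucket bindings behave like JavaScript maps. The binding names `alerts` and `lineItems`, the key prefixes, the document fields, and the threshold value are all hypothetical. The two plain objects at the top stand in for real bindings so the sketch can run outside the server.

```javascript
// Stand-ins for bucket alias bindings so the sketch runs outside the server.
// In a deployed function these would be bindings configured on the function;
// the names `alerts` and `lineItems` are hypothetical.
const alerts = {};
const lineItems = {};

// Hypothetical threshold, chosen for illustration only.
const TEMP_THRESHOLD = 80;

// Called when a document is created or modified.
function OnUpdate(doc, meta) {
  if (doc.type === "reading" && doc.temperature > TEMP_THRESHOLD) {
    // Threshold-based alert: write an alert document keyed by the source ID.
    alerts["alert::" + meta.id] = {
      source: meta.id,
      temperature: doc.temperature,
      threshold: TEMP_THRESHOLD
    };
  }
}

// Called when a document is deleted or expires.
function OnDelete(meta, options) {
  // Cascading delete: remove the dependent document derived from the deleted ID.
  delete lineItems["items::" + meta.id];
}
```

In a deployed function the two stand-in objects would be dropped and the same names would refer to bindings configured for that function. The handler bodies would otherwise stay the same under the assumptions above.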

Make it easier for developers to quickly capture and propagate changes across business-critical applications when requirements and workflows change.

Reliably execute changing business logic at scale when there are high-velocity changes in data.

Centrally manage data-driven business logic to maintain consistency across multiple server components and client applications.

Instantly analyze and respond to data changes to use data more efficiently, make decisions faster, and avoid missed opportunities.

Familiar JavaScript programming and an interactive visual debugger make it easier for developers to build, test, and maintain data-driven business functions closer to the data.

Our highly available and performant infrastructure guarantees the execution of business logic even under heavy workloads. You can use our Multi-Dimensional Scaling (MDS) to easily resize your clusters and scale on demand.

Centrally develop, manage, and deploy data-driven business logic in a seamless environment to consolidate and lower your administrative overhead.