Tag: LLMs

Unleash Real-Time Agentic AI With Streaming Agents on Confluent Cloud and Couchbase

We’re thrilled to partner with Confluent as they announce new features for Streaming Agents on Confluent Cloud and a new Real-Time Context Engine.

Building Production-Ready AI Agents with Couchbase and Nebius AI (Webinar Recap)

This combination of an LLM with tools, memory, and goals is what lets agents do more than just generate text.

Unlocking the Power of AWS Bedrock with Couchbase

In this blog post, we explore how Couchbase’s vector store, when integrated with AWS Bedrock, creates a powerful, scalable, and cost-effective AI solution.

Introducing Model Context Protocol (MCP) Server for Couchbase

Introducing Couchbase MCP Server: an open-source solution to power AI agents and GenAI apps with real-time access to your Couchbase data.

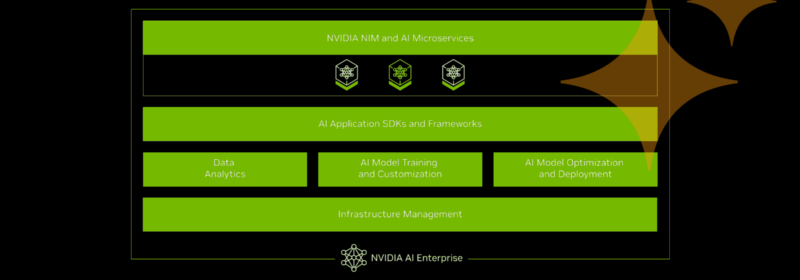

Couchbase and NVIDIA Team Up to Help Accelerate Agentic Application Development

Couchbase and NVIDIA team up to make agentic applications easier and faster to build, to feed with data, and to run.

DeepSeek Models Now Available in Capella AI Services

DeepSeek-R1 is now in Capella AI Services! Unlock advanced reasoning for enterprise AI at lower TCO. 🚀 Sign up for early access!

A Tool to Ease Your Transition From Oracle PL/SQL to Couchbase JavaScript UDF

Convert PL/SQL to JavaScript UDFs seamlessly with an AI-powered tool. Automate Oracle PL/SQL migration to Couchbase with high accuracy using ANTLR and LLMs.

Integrate Groq’s Fast LLM Inferencing With Couchbase Vector Search

Integrate Groq’s fast LLM inference with Couchbase Vector Search for efficient RAG apps. Compare its speed with OpenAI, Gemini, and Ollama.

Capella Model Service: Secure, Scalable, and OpenAI-Compatible

Capella Model Service lets you deploy secure, scalable AI models with OpenAI compatibility. Now in Private Preview!
