Getting Started with Kubernetes 1.4 using Spring Boot and Couchbase explains how to get started with Kubernetes 1.4 on Amazon Web Services. A Couchbase service is created in the cluster and a Spring Boot application stores a JSON document in the database. It uses the kube-up.sh script from the Kubernetes binary download at github.com/kubernetes/kubernetes/releases/download/v1.4.0/kubernetes.tar.gz to start the cluster. This script can only create a Kubernetes cluster with a single master. That is a fundamental flaw for a distributed application, because the master becomes a single point of failure.

Meet kops, short for Kubernetes Operations.

This is the easiest way to get a highly available Kubernetes cluster up and running. kubectl is the CLI for running commands against running clusters. Think of kops as kubectl for clusters.

This blog will show how to create a highly available Kubernetes cluster on Amazon using kops. Once the cluster is created, it will create a Couchbase service on it and run a Spring Boot application to store a JSON document in the database.

Many thanks to justinsb, sarahz, razic, jaygorrell, shrugs, bkpandey and others on the Kubernetes Slack channel for helping me through the details!

Download kops and kubectl

- Download the latest kops release. This blog was tested with 1.4.1 on OSX. The complete set of commands for kops can be seen:

      kops-darwin-amd64 --help
      kops is kubernetes ops.
      It allows you to create, destroy, upgrade and maintain clusters.

      Usage:
        kops [command]

      Available Commands:
        create         create resources
        delete         delete clusters
        describe       describe objects
        edit           edit items
        export         export clusters/kubecfg
        get            list or get objects
        import         import clusters
        rolling-update rolling update clusters
        secrets        Manage secrets & keys
        toolbox        Misc infrequently used commands
        update         update clusters
        upgrade        upgrade clusters
        version        Print the client version information

      Flags:
            --alsologtostderr                  log to standard error as well as files
            --config string                    config file (default is $HOME/.kops.yaml)
            --log_backtrace_at traceLocation   when logging hits line file:N, emit a stack trace (default :0)
            --log_dir string                   If non-empty, write log files in this directory
            --logtostderr                      log to standard error instead of files (default false)
            --name string                      Name of cluster
            --state string                     Location of state storage
            --stderrthreshold severity         logs at or above this threshold go to stderr (default 2)
        -v, --v Level                          log level for V logs
            --vmodule moduleSpec               comma-separated list of pattern=N settings for file-filtered logging

      Use "kops [command] --help" for more information about a command.

- Download kubectl:

      curl -Lo kubectl https://storage.googleapis.com/kubernetes-release/release/v1.4.1/bin/darwin/amd64/kubectl && chmod +x kubectl

- Include kubectl in your PATH.
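One way to put both binaries on the PATH is to move them into a single directory and prepend that directory for the current session. This is only a minimal sketch: the install directory `$HOME/bin` is an assumption of this example, not something the steps above prescribe, and any directory you control works just as well.

```shell
# Assumed install location (hypothetical; pick any directory you control).
INSTALL_DIR="${INSTALL_DIR:-$HOME/bin}"
mkdir -p "$INSTALL_DIR"

# Move the downloaded binaries, if they are present in the current directory.
for tool in kubectl kops-darwin-amd64; do
  if [ -f "$tool" ]; then
    chmod +x "$tool"
    mv "$tool" "$INSTALL_DIR/"
  fi
done

# Prepend the directory to PATH for this shell session, avoiding duplicates.
case ":$PATH:" in
  *":$INSTALL_DIR:"*) ;;
  *) PATH="$INSTALL_DIR:$PATH"; export PATH ;;
esac
```

To keep the change across sessions, the same PATH assignment can go into your shell profile.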

Create Bucket and NS Records on Amazon

There is a bit of setup required at this time, and hopefully it will be cleaned up in upcoming releases. The kops guide on bringing up a cluster on AWS provides detailed steps and more background.

Here is what this blog followed:

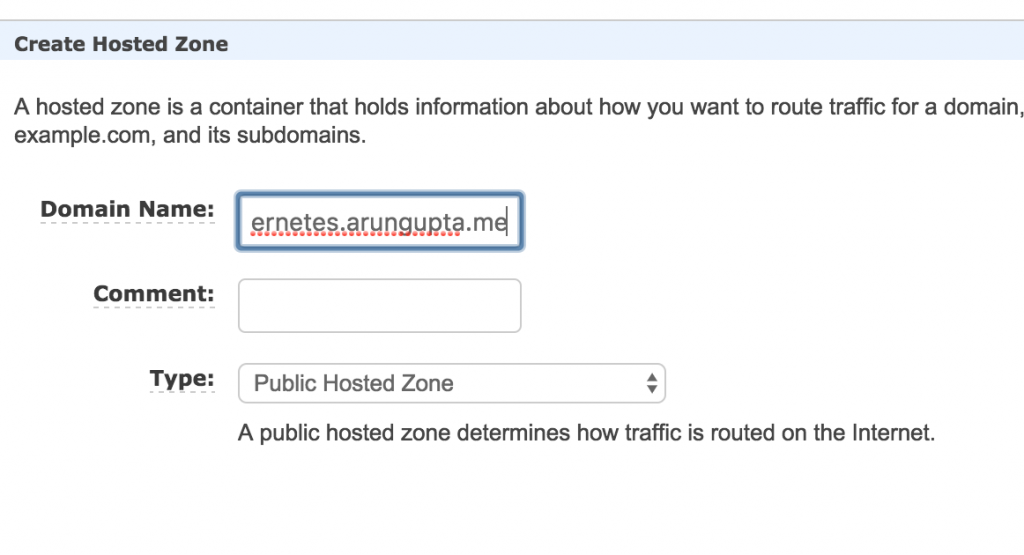

- Pick a domain where the Kubernetes cluster will be hosted. This blog uses the kubernetes.arungupta.me domain. You can pick a top-level domain or a subdomain.
- Amazon Route 53 is a highly available and scalable DNS service. Log in to the Amazon Console and create a hosted zone for this domain using the Route 53 service.

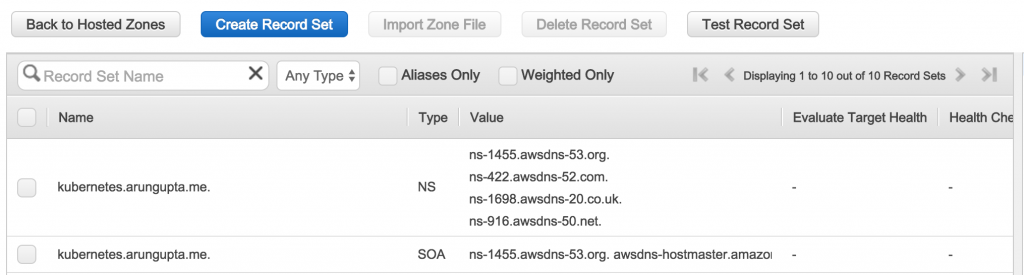

The created zone looks like:

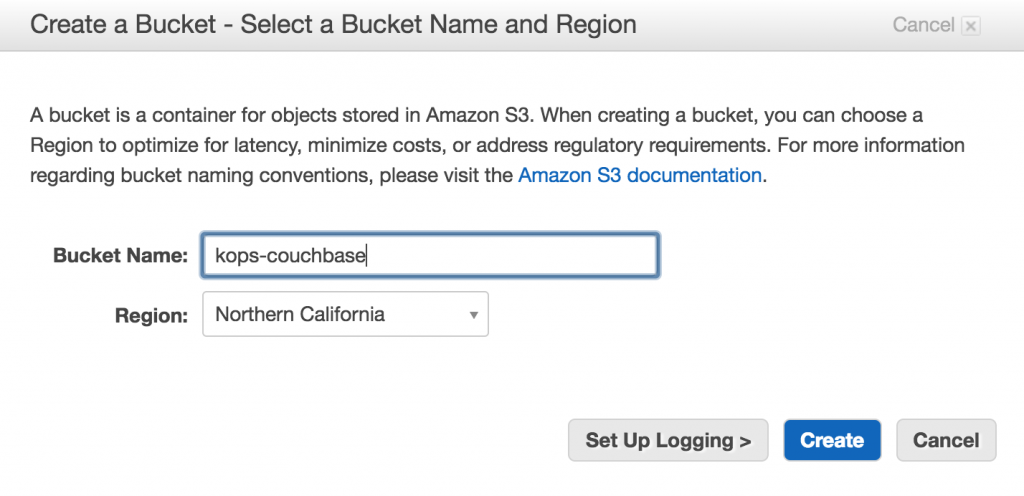

The values shown in the Value column are important, as they will be used later to create NS records.

- Create an S3 bucket using the Amazon Console to store the cluster configuration. This is called the state store.

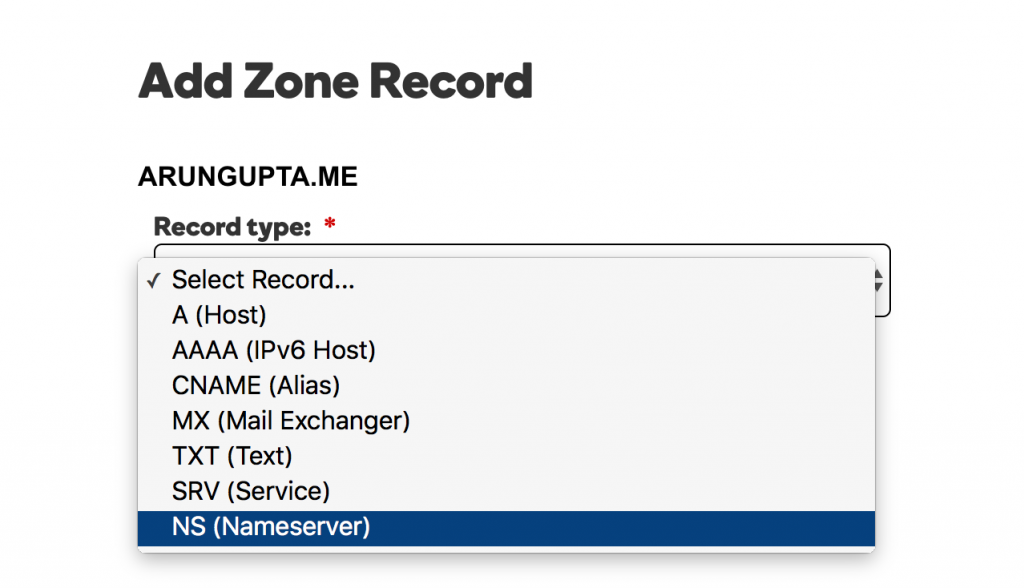

- El dominio

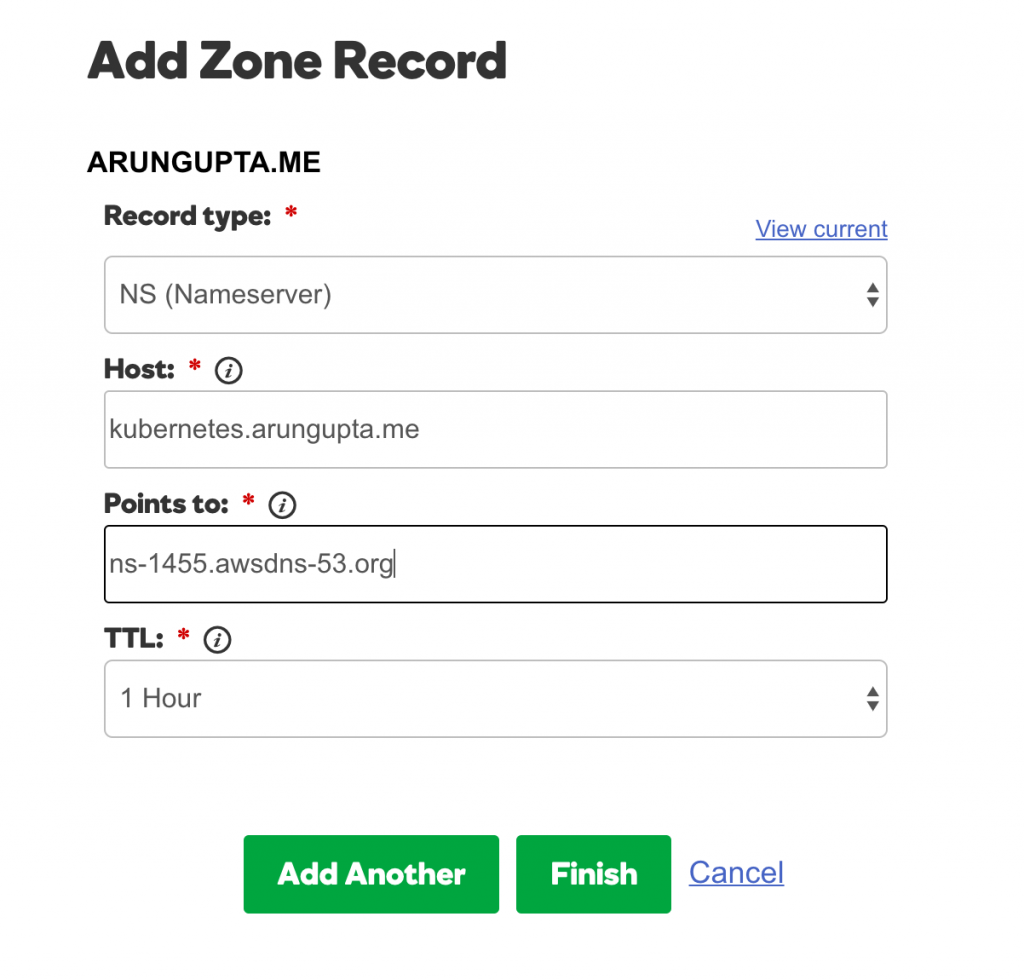

kubernetes.arungupta.meestá alojada en GoDaddy. Para cada valor mostrado en la columna Valor de la zona alojada en Route53, cree un registro NS utilizando Centro de control de dominios de GoDaddy para

Seleccione el tipo de registro:

Para cada valor, añada el registro como se muestra:

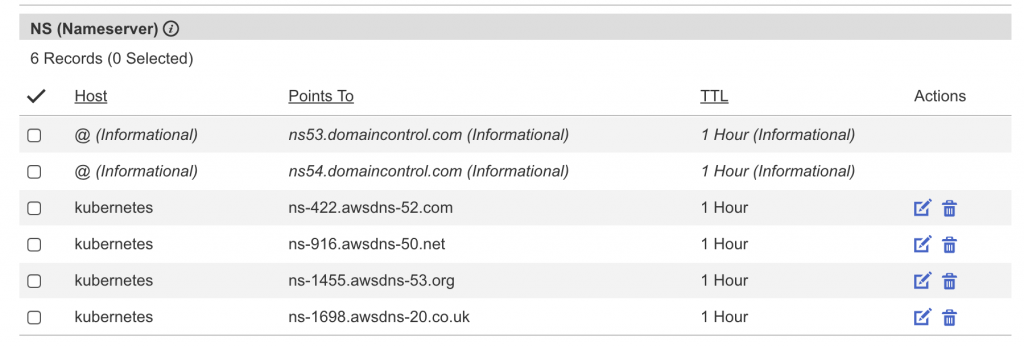

El conjunto completo de registros tiene el siguiente aspecto:
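Before starting the cluster, it can be worth checking that the NS records at the registrar match the values of the hosted zone. The sketch below compares two lists of name servers while ignoring case, trailing dots and ordering. The name server values are hypothetical placeholders, and the helper names `normalize_ns` and `delegation_matches` are made up for this example.

```shell
# normalize_ns: lower-case each name, strip the trailing dot, and sort.
normalize_ns() {
  tr 'A-Z' 'a-z' | sed 's/\.$//' | sort
}

# delegation_matches EXPECTED ACTUAL
# Succeeds when both newline-separated lists contain the same name servers.
delegation_matches() {
  [ "$(printf '%s\n' "$1" | normalize_ns)" = "$(printf '%s\n' "$2" | normalize_ns)" ]
}

# Hypothetical values; copy the real ones from the Value column of your hosted zone.
expected='ns-1.example-dns.org.
ns-2.example-dns.com.'

# In practice, query public DNS instead of hard-coding the answer:
#   actual=$(dig +short NS kubernetes.arungupta.me)
actual='NS-2.example-dns.com.
ns-1.example-dns.org.'

if delegation_matches "$expected" "$actual"; then
  echo "NS delegation matches the hosted zone"
else
  echo "NS delegation does not match yet"
fi
```

DNS changes can take a while to propagate, so a mismatch right after editing the records does not necessarily mean the records are wrong.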

Start Kubernetes Multimaster Cluster

Let's understand a bit about Amazon regions and zones:

Amazon EC2 is hosted in multiple locations worldwide. These locations are composed of regions and Availability Zones. Each region is a separate geographic area. Each region has multiple, isolated locations known as Availability Zones.

A highly available Kubernetes cluster can be created across zones but not across regions.

- Find out the Availability Zones within a region:

      aws ec2 describe-availability-zones --region us-west-2
      {
          "AvailabilityZones": [
              {
                  "State": "available",
                  "RegionName": "us-west-2",
                  "Messages": [],
                  "ZoneName": "us-west-2a"
              },
              {
                  "State": "available",
                  "RegionName": "us-west-2",
                  "Messages": [],
                  "ZoneName": "us-west-2b"
              },
              {
                  "State": "available",
                  "RegionName": "us-west-2",
                  "Messages": [],
                  "ZoneName": "us-west-2c"
              }
          ]
      }

- Create a multi-master cluster:

      kops-darwin-amd64 create cluster --name=kubernetes.arungupta.me --cloud=aws --zones=us-west-2a,us-west-2b,us-west-2c --master-size=m4.large --node-count=3 --node-size=m4.2xlarge --master-zones=us-west-2a,us-west-2b,us-west-2c --state=s3://kops-couchbase --yes

Most of the switches are self-explanatory. Some switches need a bit of explanation:

- Specifying multiple zones using `--master-zones` (must be an odd number) creates multiple masters across AZs
- `--cloud=aws` is optional if the cloud can be inferred from the zones
- `--yes` is used to specify immediate creation of the cluster. Otherwise only the state is stored in the bucket, and the cluster needs to be created separately.
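The odd-number requirement is easier to see with a little arithmetic. Each master zone runs an etcd member, and etcd needs a majority of members (floor(n/2) + 1) to stay available, so an even count adds cost without tolerating any extra failure. The sketch below derives the zone list from a trimmed copy of the describe-availability-zones output shown earlier and checks the count; the variable names are made up for this example.

```shell
# Sample zone data, trimmed from the describe-availability-zones output shown earlier.
# In practice: az_json=$(aws ec2 describe-availability-zones --region us-west-2)
az_json='{"AvailabilityZones": [
  {"State": "available", "RegionName": "us-west-2", "Messages": [], "ZoneName": "us-west-2a"},
  {"State": "available", "RegionName": "us-west-2", "Messages": [], "ZoneName": "us-west-2b"},
  {"State": "available", "RegionName": "us-west-2", "Messages": [], "ZoneName": "us-west-2c"}
]}'

# Extract every ZoneName value and join the values with commas.
zones=$(printf '%s\n' "$az_json" \
  | grep -o '"ZoneName": *"[^"]*"' \
  | sed 's/.*"\([^"]*\)"$/\1/' \
  | paste -sd, -)

# Count the comma-separated entries.
zone_count=$(printf '%s\n' "$zones" | tr ',' '\n' | grep -c .)

# A majority of etcd members must stay up: quorum = floor(n / 2) + 1.
quorum=$(( zone_count / 2 + 1 ))
tolerated=$(( zone_count - quorum ))

if [ $(( zone_count % 2 )) -eq 1 ]; then
  echo "--master-zones=$zones ($zone_count masters, quorum $quorum, tolerates $tolerated failure(s))"
else
  echo "zone count $zone_count is even; choose an odd number of master zones" >&2
fi
```

With three zones the quorum is two, so the cluster keeps working if one master zone is lost. Four masters would still tolerate only one failure.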

The complete set of CLI switches can be seen:

    ./kops-darwin-amd64 create cluster --help
    Creates a k8s cluster.

    Usage:
      kops create cluster [flags]

    Flags:
          --admin-access string         Restrict access to admin endpoints (SSH, HTTPS) to this CIDR. If not set, access will not be restricted by IP.
          --associate-public-ip         Specify --associate-public-ip=[true|false] to enable/disable association of public IP for master ASG and nodes. Default is 'true'. (default true)
          --channel string              Channel for default versions and configuration to use (default "stable")
          --cloud string                Cloud provider to use - gce, aws
          --dns-zone string             DNS hosted zone to use (defaults to last two components of cluster name)
          --image string                Image to use
          --kubernetes-version string   Version of kubernetes to run (defaults to version in channel)
          --master-size string          Set instance size for masters
          --master-zones string         Zones in which to run masters (must be an odd number)
          --model string                Models to apply (separate multiple models with commas) (default "config,proto,cloudup")
          --network-cidr string         Set to override the default network CIDR
          --networking string           Networking mode to use. kubenet (default), classic, external. (default "kubenet")
          --node-count int              Set the number of nodes
          --node-size string            Set instance size for nodes
          --out string                  Path to write any local output
          --project string              Project to use (must be set on GCE)
          --ssh-public-key string       SSH public key to use (default "~/.ssh/id_rsa.pub")
          --target string               Target - direct, terraform (default "direct")
          --vpc string                  Set to use a shared VPC
          --yes                         Specify --yes to immediately create the cluster
          --zones string                Zones in which to run the cluster

    Global Flags:
          --alsologtostderr                  log to standard error as well as files
          --config string                    config file (default is $HOME/.kops.yaml)
          --log_backtrace_at traceLocation   when logging hits line file:N, emit a stack trace (default :0)
          --log_dir string                   If non-empty, write log files in this directory
          --logtostderr                      log to standard error instead of files (default false)
          --name string                      Name of cluster
          --state string                     Location of state storage
          --stderrthreshold severity         logs at or above this threshold go to stderr (default 2)
      -v, --v Level                          log level for V logs
          --vmodule moduleSpec               comma-separated list of pattern=N settings for file-filtered logging
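When `--yes` is left out, creation becomes a two-step process: the specification is stored first and applied later. The sketch below prints both steps instead of running them. It assumes that `kops update cluster` with `--yes` is the command that applies a stored specification, which is how I understand the `update` command listed in the help output, so verify it against your kops version. The `run` wrapper and the `DRY_RUN` variable are helpers made up for this example.

```shell
# DRY_RUN=1 prints each command instead of executing it; set DRY_RUN=0 to run for real.
DRY_RUN=1
run() {
  if [ "$DRY_RUN" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

KOPS=kops-darwin-amd64
NAME=kubernetes.arungupta.me
STATE=s3://kops-couchbase
ZONES=us-west-2a,us-west-2b,us-west-2c

# Step 1: without --yes, only the cluster specification is written to the state store.
run "$KOPS" create cluster --name="$NAME" --cloud=aws \
  --zones="$ZONES" --master-zones="$ZONES" \
  --master-size=m4.large --node-count=3 --node-size=m4.2xlarge \
  --state="$STATE"

# Step 2 (assumed command, see note above): apply the stored specification.
run "$KOPS" update cluster --name="$NAME" --state="$STATE" --yes
```

Splitting the steps leaves room to review the stored configuration before any AWS resources are created.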

- Once the cluster is created, get more details about the cluster:

      kubectl cluster-info
      Kubernetes master is running at https://api.kubernetes.arungupta.me
      KubeDNS is running at https://api.kubernetes.arungupta.me/api/v1/proxy/namespaces/kube-system/services/kube-dns

      To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

- Check the client and server version of the cluster:

      kubectl version
      Client Version: version.Info{Major:"1", Minor:"4", GitVersion:"v1.4.1", GitCommit:"33cf7b9acbb2cb7c9c72a10d6636321fb180b159", GitTreeState:"clean", BuildDate:"2016-10-10T18:19:49Z", GoVersion:"go1.7.1", Compiler:"gc", Platform:"darwin/amd64"}
      Server Version: version.Info{Major:"1", Minor:"4", GitVersion:"v1.4.3", GitCommit:"4957b090e9a4f6a68b4a40375408fdc74a212260", GitTreeState:"clean", BuildDate:"2016-10-16T06:20:04Z", GoVersion:"go1.6.3", Compiler:"gc", Platform:"linux/amd64"}

- Check all nodes in the cluster:

      kubectl get nodes
      NAME                                           STATUS    AGE
      ip-172-20-111-151.us-west-2.compute.internal   Ready     1h
      ip-172-20-116-40.us-west-2.compute.internal    Ready     1h
      ip-172-20-48-41.us-west-2.compute.internal     Ready     1h
      ip-172-20-49-105.us-west-2.compute.internal    Ready     1h
      ip-172-20-80-233.us-west-2.compute.internal    Ready     1h
      ip-172-20-82-93.us-west-2.compute.internal     Ready     1h

  Or find out only the master nodes:

      kubectl get nodes -l kubernetes.io/role=master
      NAME                                           STATUS    AGE
      ip-172-20-111-151.us-west-2.compute.internal   Ready     1h
      ip-172-20-48-41.us-west-2.compute.internal     Ready     1h
      ip-172-20-82-93.us-west-2.compute.internal     Ready     1h

- Check all the clusters:

      kops-darwin-amd64 get clusters --state=s3://kops-couchbase
      NAME                      CLOUD   ZONES
      kubernetes.arungupta.me   aws     us-west-2a,us-west-2b,us-west-2c
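These checks can also be scripted. As a minimal sketch, the snippet below counts the nodes reported as Ready and compares the count with what the create command asked for: three masters plus three nodes. It works on a copy of the `kubectl get nodes` listing above so that it runs without a cluster; the variable names are made up for this example.

```shell
# Sample output copied from the kubectl get nodes listing above.
# In practice: nodes_output=$(kubectl get nodes)
nodes_output='NAME                                           STATUS    AGE
ip-172-20-111-151.us-west-2.compute.internal   Ready     1h
ip-172-20-116-40.us-west-2.compute.internal    Ready     1h
ip-172-20-48-41.us-west-2.compute.internal     Ready     1h
ip-172-20-49-105.us-west-2.compute.internal    Ready     1h
ip-172-20-80-233.us-west-2.compute.internal    Ready     1h
ip-172-20-82-93.us-west-2.compute.internal     Ready     1h'

# Three masters (one per zone in --master-zones) plus --node-count=3 workers.
expected=$(( 3 + 3 ))

# Skip the header row and count rows whose STATUS column is exactly "Ready".
ready=$(printf '%s\n' "$nodes_output" \
  | awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }')

if [ "$ready" -eq "$expected" ]; then
  echo "all $expected nodes are Ready"
else
  echo "only $ready of $expected nodes are Ready" >&2
fi
```

Matching the STATUS column exactly means that a node in any other state is not counted as Ready.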

Kubernetes Dashboard Addon

By default, a cluster created using kops does not have the UI dashboard. But it can be added as an add-on:

    kubectl create -f https://raw.githubusercontent.com/kubernetes/kops/master/addons/kubernetes-dashboard/v1.4.0.yaml
    deployment "kubernetes-dashboard-v1.4.0" created
    service "kubernetes-dashboard" created

Now complete details about the cluster can be seen:

    kubectl cluster-info
    Kubernetes master is running at https://api.kubernetes.arungupta.me
    KubeDNS is running at https://api.kubernetes.arungupta.me/api/v1/proxy/namespaces/kube-system/services/kube-dns
    kubernetes-dashboard is running at https://api.kubernetes.arungupta.me/api/v1/proxy/namespaces/kube-system/services/kubernetes-dashboard

    To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

The Kubernetes UI dashboard is available at the URL shown. In our case, this is https://api.kubernetes.arungupta.me/ui.

Credentials for accessing this dashboard can be obtained using the `kubectl config view` command. The values are shown as:

    - name: kubernetes.arungupta.me-basic-auth
      user:
        password: PASSWORD
        username: admin
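The credentials can also be pulled out of that output with a small script instead of being read by eye. The sketch below is an illustration only: it parses a sample fragment shaped like the output above (with the same placeholder password), and the `field_of` helper is a name made up for this example. kubectl also offers `-o jsonpath` for this kind of extraction, which may be the more robust choice on a real cluster.

```shell
# Sample fragment in the shape printed by kubectl config view (placeholder password).
# In practice: config_view=$(kubectl config view)
config_view='users:
- name: kubernetes.arungupta.me-basic-auth
  user:
    password: PASSWORD
    username: admin'

entry=kubernetes.arungupta.me-basic-auth

# field_of FIELD: print FIELD from the user entry named by $entry.
# The awk program tracks whether the current "- name:" entry is the wanted one.
field_of() {
  printf '%s\n' "$config_view" | awk -v entry="$entry" -v field="$1:" '
    $1 == "-" && $2 == "name:" { in_entry = ($3 == entry) }
    in_entry && $1 == field    { print $2; exit }'
}

username=$(field_of username)
password=$(field_of password)
echo "dashboard login: $username / $password"
```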

Deploy Couchbase Service

As explained in Getting Started with Kubernetes 1.4 using Spring Boot and Couchbase, let's run a Couchbase service:

    kubectl create -f ~/workspaces/kubernetes-java-sample/maven/couchbase-service.yml
    service "couchbase-service" created
    replicationcontroller "couchbase-rc" created

This configuration file is at github.com/arun-gupta/kubernetes-java-sample/blob/master/maven/couchbase-service.yml. Get the list of services:

    kubectl get svc
    NAME                CLUSTER-IP     EXTERNAL-IP   PORT(S)                                AGE
    couchbase-service   100.65.4.139   <none>        8091/TCP,8092/TCP,8093/TCP,11210/TCP   27s
    kubernetes          100.64.0.1     <none>        443/TCP                                2h

Describe the service:

    kubectl describe svc/couchbase-service
    Name:              couchbase-service
    Namespace:         default
    Labels:            <none>
    Selector:          app=couchbase-rc-pod
    Type:              ClusterIP
    IP:                100.65.4.139
    Port:              admin 8091/TCP
    Endpoints:         100.96.5.2:8091
    Port:              views 8092/TCP
    Endpoints:         100.96.5.2:8092
    Port:              query 8093/TCP
    Endpoints:         100.96.5.2:8093
    Port:              memcached 11210/TCP
    Endpoints:         100.96.5.2:11210
    Session Affinity:  None

Get the pods:

    kubectl get pods
    NAME                 READY     STATUS    RESTARTS   AGE
    couchbase-rc-e35v5   1/1       Running   0          1m

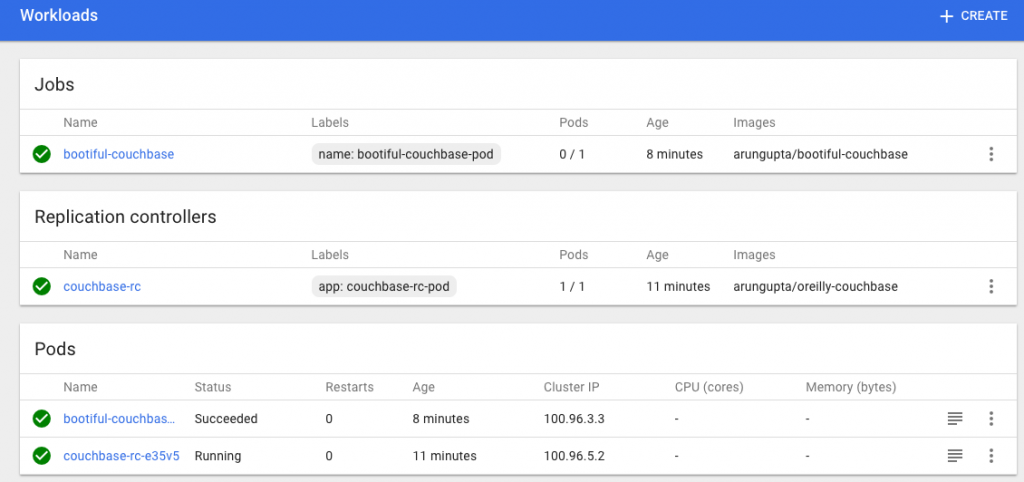

Run Spring Boot Application

The Spring Boot application runs against the Couchbase service and stores a JSON document in it. Start the Spring Boot application:

    kubectl create -f ~/workspaces/kubernetes-java-sample/maven/bootiful-couchbase.yml
    job "bootiful-couchbase" created

This configuration file is at github.com/arun-gupta/kubernetes-java-sample/blob/master/maven/bootiful-couchbase.yml. Get the list of all pods:

    kubectl get pods --show-all
    NAME                       READY     STATUS      RESTARTS   AGE
    bootiful-couchbase-ainv8   0/1       Completed   0          1m
    couchbase-rc-e35v5         1/1       Running     0          3m
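The pod created by the job gets a generated suffix, so its full name has to be looked up before its logs can be read. As a rough sketch, the snippet below picks the completed pod for the job out of a copy of the listing above and prints the matching logs command; the variable names are made up for this example.

```shell
# Sample output copied from the kubectl get pods --show-all listing above.
# In practice: pods_output=$(kubectl get pods --show-all)
pods_output='NAME                       READY     STATUS      RESTARTS   AGE
bootiful-couchbase-ainv8   0/1       Completed   0          1m
couchbase-rc-e35v5         1/1       Running     0          3m'

job=bootiful-couchbase

# Select the first pod whose name starts with "<job>-" and whose STATUS is Completed.
pod=$(printf '%s\n' "$pods_output" | awk -v prefix="$job-" '
  index($1, prefix) == 1 && $3 == "Completed" { print $1; exit }')

if [ -n "$pod" ]; then
  echo "kubectl logs $pod"
else
  echo "no completed pod found for job $job" >&2
fi
```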

Check the logs of the completed pod:

    kubectl logs bootiful-couchbase-ainv8

      .   ____          _            __ _ _
     /\\ / ___'_ __ _ _(_)_ __  __ _ \ \ \ \
    ( ( )\___ | '_ | '_| | '_ \/ _` | \ \ \ \
     \\/  ___)| |_)| | | | | || (_| |  ) ) ) )
      '  |____| .__|_| |_|_| |_\__, | / / / /
     =========|_|==============|___/=/_/_/_/
     :: Spring Boot ::        (v1.4.0.RELEASE)

    2016-11-02 18:48:56.035 INFO 7 --- [ main] org.example.webapp.Application : Starting Application v1.0-SNAPSHOT on bootiful-couchbase-ainv8 with PID 7 (/maven/bootiful-couchbase.jar started by root in /)
    2016-11-02 18:48:56.040 INFO 7 --- [ main] org.example.webapp.Application : No active profile set, falling back to default profiles: default
    2016-11-02 18:48:56.115 INFO 7 --- [ main] s.c.a.AnnotationConfigApplicationContext : Refreshing org.springframework.context.annotation.AnnotationConfigApplicationContext@108c4c35: startup date [Wed Nov 02 18:48:56 UTC 2016]; root of context hierarchy
    2016-11-02 18:48:57.021 INFO 7 --- [ main] com.couchbase.client.core.CouchbaseCore : CouchbaseEnvironment: {sslEnabled=false, sslKeystoreFile='null', sslKeystorePassword='null', queryEnabled=false, queryPort=8093, bootstrapHttpEnabled=true, bootstrapCarrierEnabled=true, bootstrapHttpDirectPort=8091, bootstrapHttpSslPort=18091, bootstrapCarrierDirectPort=11210, bootstrapCarrierSslPort=11207, ioPoolSize=8, computationPoolSize=8, responseBufferSize=16384, requestBufferSize=16384, kvServiceEndpoints=1, viewServiceEndpoints=1, queryServiceEndpoints=1, searchServiceEndpoints=1, ioPool=NioEventLoopGroup, coreScheduler=CoreScheduler, eventBus=DefaultEventBus, packageNameAndVersion=couchbase-java-client/2.2.8 (git: 2.2.8, core: 1.2.9), dcpEnabled=false, retryStrategy=BestEffort, maxRequestLifetime=75000, retryDelay=ExponentialDelay{growBy 1.0 MICROSECONDS, powers of 2; lower=100, upper=100000}, reconnectDelay=ExponentialDelay{growBy 1.0 MILLISECONDS, powers of 2; lower=32, upper=4096}, observeIntervalDelay=ExponentialDelay{growBy 1.0 MICROSECONDS, powers of 2; lower=10, upper=100000}, keepAliveInterval=30000, autoreleaseAfter=2000, bufferPoolingEnabled=true, tcpNodelayEnabled=true, mutationTokensEnabled=false, socketConnectTimeout=1000, dcpConnectionBufferSize=20971520, dcpConnectionBufferAckThreshold=0.2, dcpConnectionName=dcp/core-io, callbacksOnIoPool=false, queryTimeout=7500, viewTimeout=7500, kvTimeout=2500, connectTimeout=5000, disconnectTimeout=25000, dnsSrvEnabled=false}
    2016-11-02 18:48:57.245 INFO 7 --- [ cb-io-1-1] com.couchbase.client.core.node.Node : Connected to Node couchbase-service
    2016-11-02 18:48:57.291 INFO 7 --- [ cb-io-1-1] com.couchbase.client.core.node.Node : Disconnected from Node couchbase-service
    2016-11-02 18:48:57.533 INFO 7 --- [ cb-io-1-2] com.couchbase.client.core.node.Node : Connected to Node couchbase-service
    2016-11-02 18:48:57.638 INFO 7 --- [-computations-4] c.c.c.core.config.ConfigurationProvider : Opened bucket books
    2016-11-02 18:48:58.152 INFO 7 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Registering beans for JMX exposure on startup
    Book{isbn=978-1-4919-1889-0, name=Minecraft Modding with Forge, cost=29.99}
    2016-11-02 18:48:58.402 INFO 7 --- [ main] org.example.webapp.Application : Started Application in 2.799 seconds (JVM running for 3.141)
    2016-11-02 18:48:58.403 INFO 7 --- [ Thread-5] s.c.a.AnnotationConfigApplicationContext : Closing org.springframework.context.annotation.AnnotationConfigApplicationContext@108c4c35: startup date [Wed Nov 02 18:48:56 UTC 2016]; root of context hierarchy
    2016-11-02 18:48:58.404 INFO 7 --- [ Thread-5] o.s.j.e.a.AnnotationMBeanExporter : Unregistering JMX-exposed beans on shutdown
    2016-11-02 18:48:58.410 INFO 7 --- [ cb-io-1-2] com.couchbase.client.core.node.Node : Disconnected from Node couchbase-service
    2016-11-02 18:48:58.410 INFO 7 --- [ Thread-5] c.c.c.core.config.ConfigurationProvider : Closed bucket books

The dashboard is updated as well.

Delete the Kubernetes Cluster

The Kubernetes cluster can be deleted as:

    kops-darwin-amd64 delete cluster --name=kubernetes.arungupta.me --state=s3://kops-couchbase --yes
    TYPE                  NAME  ID
    autoscaling-config    master-us-west-2a.masters.kubernetes.arungupta.me-20161101235639  master-us-west-2a.masters.kubernetes.arungupta.me-20161101235639
    autoscaling-config    master-us-west-2b.masters.kubernetes.arungupta.me-20161101235639  master-us-west-2b.masters.kubernetes.arungupta.me-20161101235639
    autoscaling-config    master-us-west-2c.masters.kubernetes.arungupta.me-20161101235639  master-us-west-2c.masters.kubernetes.arungupta.me-20161101235639
    autoscaling-config    nodes.kubernetes.arungupta.me-20161101235639  nodes.kubernetes.arungupta.me-20161101235639
    autoscaling-group     master-us-west-2a.masters.kubernetes.arungupta.me  master-us-west-2a.masters.kubernetes.arungupta.me
    autoscaling-group     master-us-west-2b.masters.kubernetes.arungupta.me  master-us-west-2b.masters.kubernetes.arungupta.me
    autoscaling-group     master-us-west-2c.masters.kubernetes.arungupta.me  master-us-west-2c.masters.kubernetes.arungupta.me
    autoscaling-group     nodes.kubernetes.arungupta.me  nodes.kubernetes.arungupta.me
    dhcp-options          kubernetes.arungupta.me  dopt-9b7b08ff
    iam-instance-profile  masters.kubernetes.arungupta.me  masters.kubernetes.arungupta.me
    iam-instance-profile  nodes.kubernetes.arungupta.me  nodes.kubernetes.arungupta.me
    iam-role              masters.kubernetes.arungupta.me  masters.kubernetes.arungupta.me
    iam-role              nodes.kubernetes.arungupta.me  nodes.kubernetes.arungupta.me
    instance              master-us-west-2a.masters.kubernetes.arungupta.me  i-8798eb9f
    instance              master-us-west-2b.masters.kubernetes.arungupta.me  i-eca96ab3
    instance              master-us-west-2c.masters.kubernetes.arungupta.me  i-63fd3dbf
    instance              nodes.kubernetes.arungupta.me  i-21a96a7e
    instance              nodes.kubernetes.arungupta.me  i-57fb3b8b
    instance              nodes.kubernetes.arungupta.me  i-5c99ea44
    internet-gateway      kubernetes.arungupta.me  igw-b624abd2
    keypair               kubernetes.kubernetes.arungupta.me-18:90:41:6f:5f:79:6a:a8:d5:b6:b8:3f:10:d5:d3:f3  kubernetes.kubernetes.arungupta.me-18:90:41:6f:5f:79:6a:a8:d5:b6:b8:3f:10:d5:d3:f3
    route-table           kubernetes.arungupta.me  rtb-e44df183
    route53-record        api.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/api.internal.kubernetes.arungupta.me.
    route53-record        api.kubernetes.arungupta.me.  Z6I41VJM5VCZV/api.kubernetes.arungupta.me.
    route53-record        etcd-events-us-west-2a.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-events-us-west-2a.internal.kubernetes.arungupta.me.
    route53-record        etcd-events-us-west-2b.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-events-us-west-2b.internal.kubernetes.arungupta.me.
    route53-record        etcd-events-us-west-2c.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-events-us-west-2c.internal.kubernetes.arungupta.me.
    route53-record        etcd-us-west-2a.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-us-west-2a.internal.kubernetes.arungupta.me.
    route53-record        etcd-us-west-2b.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-us-west-2b.internal.kubernetes.arungupta.me.
    route53-record        etcd-us-west-2c.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-us-west-2c.internal.kubernetes.arungupta.me.
    security-group        masters.kubernetes.arungupta.me  sg-3e790f47
    security-group        nodes.kubernetes.arungupta.me  sg-3f790f46
    subnet                us-west-2a.kubernetes.arungupta.me  subnet-3cdbc958
    subnet                us-west-2b.kubernetes.arungupta.me  subnet-18c3f76e
    subnet                us-west-2c.kubernetes.arungupta.me  subnet-b30f6deb
    volume                us-west-2a.etcd-events.kubernetes.arungupta.me  vol-202350a8
    volume                us-west-2a.etcd-main.kubernetes.arungupta.me  vol-0a235082
    volume                us-west-2b.etcd-events.kubernetes.arungupta.me  vol-401f5bf4
    volume                us-west-2b.etcd-main.kubernetes.arungupta.me  vol-691f5bdd
    volume                us-west-2c.etcd-events.kubernetes.arungupta.me  vol-aefe163b
    volume                us-west-2c.etcd-main.kubernetes.arungupta.me  vol-e9fd157c
    vpc                   kubernetes.arungupta.me  vpc-e5f50382

    internet-gateway:igw-b624abd2 still has dependencies, will retry
    keypair:kubernetes.kubernetes.arungupta.me-18:90:41:6f:5f:79:6a:a8:d5:b6:b8:3f:10:d5:d3:f3 ok
    instance:i-5c99ea44 ok
    instance:i-63fd3dbf ok
    instance:i-eca96ab3 ok
    instance:i-21a96a7e ok
    autoscaling-group:master-us-west-2a.masters.kubernetes.arungupta.me ok
    autoscaling-group:master-us-west-2b.masters.kubernetes.arungupta.me ok
    autoscaling-group:master-us-west-2c.masters.kubernetes.arungupta.me ok
    autoscaling-group:nodes.kubernetes.arungupta.me ok
    iam-instance-profile:nodes.kubernetes.arungupta.me ok
    iam-instance-profile:masters.kubernetes.arungupta.me ok
    instance:i-57fb3b8b ok
    instance:i-8798eb9f ok
    route53-record:Z6I41VJM5VCZV/etcd-events-us-west-2a.internal.kubernetes.arungupta.me. ok
    iam-role:nodes.kubernetes.arungupta.me ok
    iam-role:masters.kubernetes.arungupta.me ok
    autoscaling-config:nodes.kubernetes.arungupta.me-20161101235639 ok
    autoscaling-config:master-us-west-2b.masters.kubernetes.arungupta.me-20161101235639 ok
    subnet:subnet-b30f6deb still has dependencies, will retry
    subnet:subnet-3cdbc958 still has dependencies, will retry
    subnet:subnet-18c3f76e still has dependencies, will retry
    autoscaling-config:master-us-west-2a.masters.kubernetes.arungupta.me-20161101235639 ok
    autoscaling-config:master-us-west-2c.masters.kubernetes.arungupta.me-20161101235639 ok
    volume:vol-0a235082 still has dependencies, will retry
    volume:vol-202350a8 still has dependencies, will retry
    volume:vol-401f5bf4 still has dependencies, will retry
    volume:vol-e9fd157c still has dependencies, will retry
    volume:vol-aefe163b still has dependencies, will retry
    volume:vol-691f5bdd still has dependencies, will retry
    security-group:sg-3f790f46 still has dependencies, will retry
    security-group:sg-3e790f47 still has dependencies, will retry
    Not all resources deleted; waiting before reattempting deletion
    internet-gateway:igw-b624abd2
    security-group:sg-3f790f46
    volume:vol-aefe163b
    route-table:rtb-e44df183
    volume:vol-401f5bf4
    subnet:subnet-18c3f76e
    security-group:sg-3e790f47
    volume:vol-691f5bdd
    subnet:subnet-3cdbc958
    volume:vol-202350a8
    volume:vol-0a235082
    dhcp-options:dopt-9b7b08ff
    subnet:subnet-b30f6deb
    volume:vol-e9fd157c
    vpc:vpc-e5f50382
    internet-gateway:igw-b624abd2 still has dependencies, will retry
    volume:vol-e9fd157c still has dependencies, will retry
    subnet:subnet-3cdbc958 still has dependencies, will retry
    subnet:subnet-18c3f76e still has dependencies, will retry
    subnet:subnet-b30f6deb still has dependencies, will retry
    volume:vol-0a235082 still has dependencies, will retry
    volume:vol-aefe163b still has dependencies, will retry
    volume:vol-691f5bdd still has dependencies, will retry
    volume:vol-202350a8 still has dependencies, will retry
    volume:vol-401f5bf4 still has dependencies, will retry
    security-group:sg-3f790f46 still has dependencies, will retry
    security-group:sg-3e790f47 still has dependencies, will retry
    Not all resources deleted; waiting before reattempting deletion
    subnet:subnet-b30f6deb
    volume:vol-e9fd157c
    vpc:vpc-e5f50382
    internet-gateway:igw-b624abd2
    security-group:sg-3f790f46
    volume:vol-aefe163b
    route-table:rtb-e44df183
    volume:vol-401f5bf4
    subnet:subnet-18c3f76e
    security-group:sg-3e790f47
    volume:vol-691f5bdd
    subnet:subnet-3cdbc958
    volume:vol-202350a8
    volume:vol-0a235082
    dhcp-options:dopt-9b7b08ff
    subnet:subnet-18c3f76e still has dependencies, will retry
    subnet:subnet-b30f6deb still has dependencies, will retry
    internet-gateway:igw-b624abd2 still has dependencies, will retry
    subnet:subnet-3cdbc958 still has dependencies, will retry
    volume:vol-691f5bdd still has dependencies, will retry
    volume:vol-0a235082 still has dependencies, will retry
    volume:vol-202350a8 still has dependencies, will retry
    volume:vol-401f5bf4 still has dependencies, will retry
    volume:vol-aefe163b still has dependencies, will retry
    volume:vol-e9fd157c still has dependencies, will retry
    security-group:sg-3e790f47 still has dependencies, will retry
    security-group:sg-3f790f46 still has dependencies, will retry
    Not all resources deleted; waiting before reattempting deletion
    internet-gateway:igw-b624abd2
    security-group:sg-3f790f46
    volume:vol-aefe163b
    route-table:rtb-e44df183
    volume:vol-401f5bf4
    subnet:subnet-18c3f76e
    security-group:sg-3e790f47
    volume:vol-691f5bdd
    subnet:subnet-3cdbc958
    volume:vol-202350a8
    volume:vol-0a235082
    dhcp-options:dopt-9b7b08ff
    subnet:subnet-b30f6deb
    volume:vol-e9fd157c
    vpc:vpc-e5f50382
    subnet:subnet-b30f6deb still has dependencies, will retry
    volume:vol-202350a8 still has dependencies, will retry
    internet-gateway:igw-b624abd2 still has dependencies, will retry
    subnet:subnet-18c3f76e still has dependencies, will retry
    volume:vol-e9fd157c still has dependencies, will retry
    volume:vol-aefe163b still has dependencies, will retry
    volume:vol-401f5bf4 still has dependencies, will retry
    volume:vol-691f5bdd still has dependencies, will retry
    security-group:sg-3e790f47 still has dependencies, will retry
    security-group:sg-3f790f46 still has dependencies, will retry
    subnet:subnet-3cdbc958 still has dependencies, will retry
    volume:vol-0a235082 still has dependencies, will retry
    Not all resources deleted; waiting before reattempting deletion
    internet-gateway:igw-b624abd2
    security-group:sg-3f790f46
    volume:vol-aefe163b
    route-table:rtb-e44df183
    subnet:subnet-18c3f76e
    security-group:sg-3e790f47
    volume:vol-691f5bdd
    volume:vol-401f5bf4
    volume:vol-202350a8
    subnet:subnet-3cdbc958
    volume:vol-0a235082
    dhcp-options:dopt-9b7b08ff
    subnet:subnet-b30f6deb
    volume:vol-e9fd157c
    vpc:vpc-e5f50382
    subnet:subnet-18c3f76e ok
    volume:vol-e9fd157c ok
    volume:vol-401f5bf4 ok
    volume:vol-0a235082 ok
    volume:vol-691f5bdd ok
    subnet:subnet-3cdbc958 ok
    volume:vol-aefe163b ok
    subnet:subnet-b30f6deb ok
    internet-gateway:igw-b624abd2 ok
    volume:vol-202350a8 ok
    security-group:sg-3f790f46 ok
    security-group:sg-3e790f47 ok
    route-table:rtb-e44df183 ok
    vpc:vpc-e5f50382 ok
    dhcp-options:dopt-9b7b08ff ok

    Cluster deleted

couchbase.com/containers provides more details about how to run Couchbase in different container frameworks. More information about Couchbase: