Fuel AI-driven development with Capella’s latest updates: real-time analytics, vector search at the edge, and a free tier to start quickly.

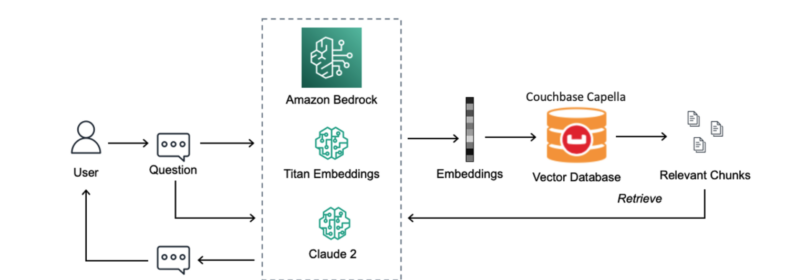

Enhance generative AI with Retrieval-Augmented Generation using Couchbase Capella and Amazon Bedrock for scalable, accurate results.

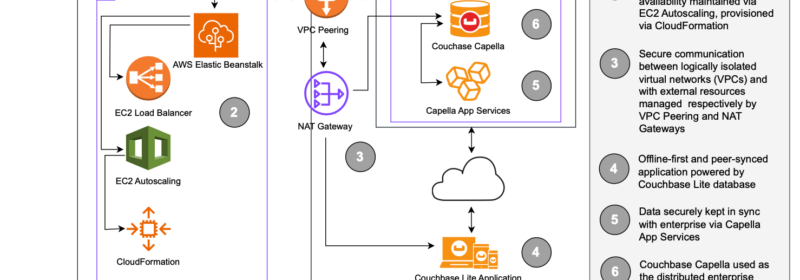

In this blog, we explore a customer implementation of an innovative offline-first remote access solution and detail its architecture and data flow with AWS.

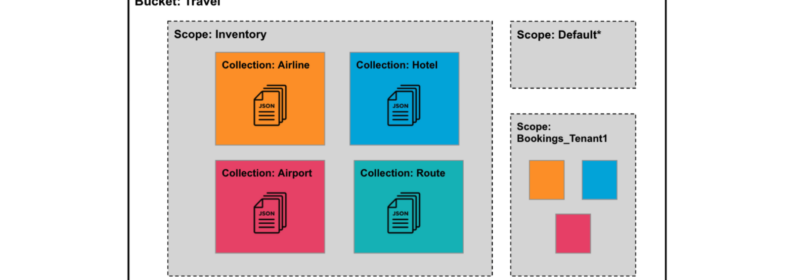

Discover the power of Scopes and Collections in Couchbase for secure, scalable mobile and edge deployments with Capella App Services.

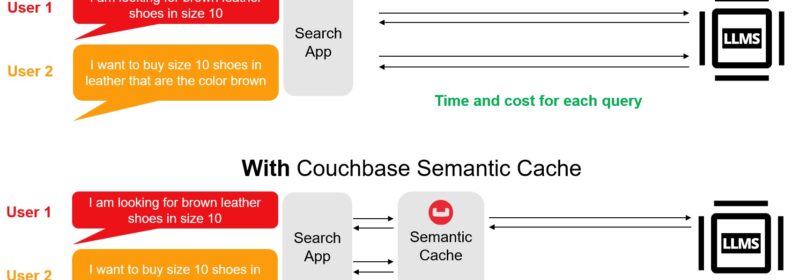

The LangChain-Couchbase package integrates Couchbase's vector search, semantic cache, and conversational cache for generative AI workflows.

Edge AI runs AI applications directly on edge devices, enabling real-time data processing locally. Learn all about edge AI and the role databases play here.

To create the personal, ultra-responsive experiences that users expect, your data architecture must be able to adapt dynamically to their preferences.

Vector search and full-text search are both methods used for searching through collections of data, but they operate in different ways and are suited to different types of data and use cases.

Discover essential frameworks and technologies for ethical data stewardship in software development, ensuring privacy and integrity.

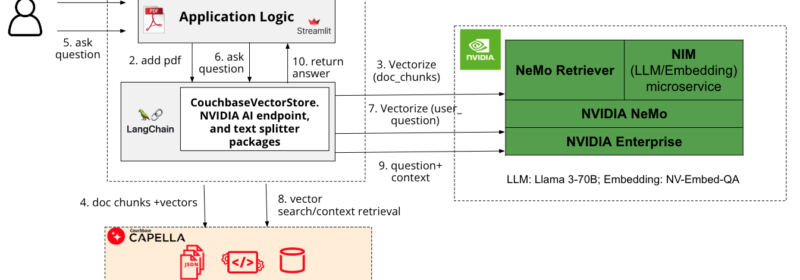

Develop an interactive GenAI application with grounded and relevant responses using Couchbase Capella-based RAG, and accelerate it using NVIDIA NIM/NeMo.

Numerous Couchbase customers, across a wide range of industries, are planning to utilize AI in their businesses to create a better experience for their customers.

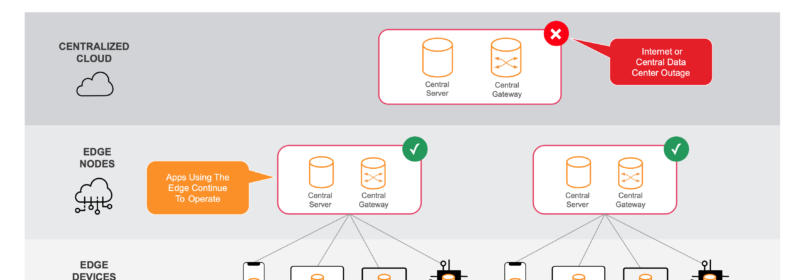

This article focuses on the Centralized vs. Edge Compute paradigm, exploring why a cloud-to-edge database with vector capability will best address the challenges of data privacy, performance, and cost-effectiveness.