MongoDB 3.0 + WiredTiger Fail to Close Performance Gap; Couchbase Demonstrates 4.5X Performance Advantage in Recent Benchmark

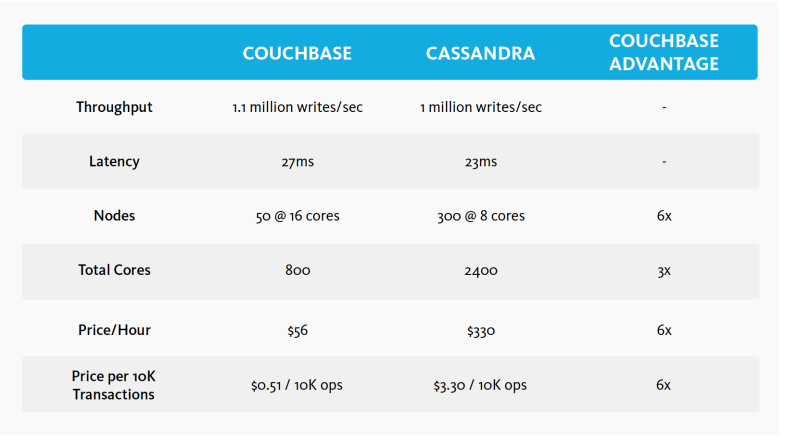

Couchbase continues to outperform Cassandra and MongoDB in high-scale use cases. In a new benchmark, Couchbase Server 3.0 sustained 1.1 million writes per second with very low latency on Google Cloud Platform, using just 50 nodes, while Cassandra required 300 nodes. The results show that sustaining 1 million writes per second costs 83 percent less on Couchbase than on Cassandra: $56 per hour for Couchbase compared with $330 per hour for Cassandra.
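For readers who want to check the arithmetic behind the cost comparison above, the following is a minimal sketch. The function name `percent_savings` and the variable names are illustrative assumptions; only the hourly costs and node counts come from the benchmark figures quoted in this release.

```python
def percent_savings(baseline_cost: float, new_cost: float) -> float:
    """Return how much cheaper new_cost is than baseline_cost, in percent."""
    if baseline_cost <= 0:
        raise ValueError("baseline_cost must be positive")
    return (baseline_cost - new_cost) / baseline_cost * 100.0


# Hourly costs and node counts as quoted in the release.
cassandra_cost_per_hour = 330.0
couchbase_cost_per_hour = 56.0
cassandra_nodes = 300
couchbase_nodes = 50

savings = percent_savings(cassandra_cost_per_hour, couchbase_cost_per_hour)
node_ratio = cassandra_nodes / couchbase_nodes

print(f"Cost savings: {savings:.0f} percent")  # Cost savings: 83 percent
print(f"Node ratio: {node_ratio:.0f}x")        # Node ratio: 6x
```

The calculation covers only the quoted hourly infrastructure cost; it does not model licensing, operations, or workload differences.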

“Recent benchmarks are proof positive that Couchbase Server is uniquely suited to deliver enterprise grade performance and scalability on the cloud platform of choice,” said Ravi Mayuram, senior vice president, Products and Engineering, Couchbase.

Results of the Google Cloud benchmark are summarized in the following table:

Couchbase performs 4.5X better than MongoDB 3.0 + WiredTiger

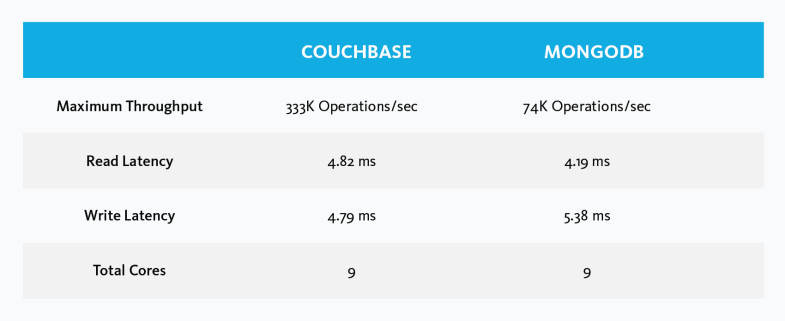

In a separate recent benchmark, Couchbase Server 3.0 demonstrated a 4.5X performance advantage over MongoDB 3.0 + WiredTiger. WiredTiger is the high-performance storage engine that MongoDB acquired earlier this year and recently made available as an option, claiming it significantly increases performance over the default engine. Even with WiredTiger, MongoDB 3.0 delivered less than one-fourth the throughput rate of Couchbase Server 3.0 using the same resources.

Results of the Couchbase vs. MongoDB + WiredTiger benchmark are summarized in the following table:

Fair, transparent benchmarks are good for the industry

A number of NoSQL database vendors have commissioned and published benchmarks, which can provide value for potential buyers of their products. When conducted in an open and transparent way, benchmarks are a valuable tool for demonstrating that a specific NoSQL database can support a specific use case under a specific set of conditions.

To be fair and transparent, benchmarks need to follow a few simple rules – which Couchbase adheres to in all commissioned benchmarks:

1. Use the most recent versions of each solution’s generally available software;

2. Clearly communicate the use cases and workloads being measured so that developers and operations engineers can assess whether the benchmark applies to their situation; and

3. Keep the tests open, so they can be reproduced and the results verified.

“We see continued value in conducting transparent, repeatable benchmarks. They make it easier for the market to see which NoSQL databases perform well on targeted use cases,” said Bob Wiederhold, CEO at Couchbase. “More and more enterprises are deploying NoSQL for mission-critical applications operating at significant scale. The use of benchmarks is increasing during this phase, because performance at scale is critical for most of these applications. Developers and ops engineers need to know which products perform best for their specific use cases and workloads.”

About Couchbase

At Couchbase, we believe data is at the heart of the enterprise. We empower developers and architects to build, deploy, and run their most mission-critical applications. Couchbase delivers a high-performance, flexible, and scalable modern database that runs across the data center and any cloud. Many of the world’s largest enterprises rely on Couchbase to power the core applications their businesses depend on. For more information, visit www.couchbase.com.

Media Contact

James Kim

couchbasePR@couchbase.com

Couchbase Communications