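Getting Started with Kubernetes 1.4 using Spring Boot and Couchbase explains how to get started with Kubernetes 1.4 on Amazon Web Services. A Couchbase service is created in the cluster and a Spring Boot application stores a JSON document in the database. It uses the kube-up.sh script from the Kubernetes binary download at github.com/kubernetes/kubernetes/releases/download/v1.4.0/kubernetes.tar.gz to start the cluster. This script can only create a Kubernetes cluster with a single master. This is a fundamental flaw for a distributed application: the master becomes a single point of failure.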

Meet kops, short for Kubernetes Operations.

This is the easiest way to get a highly available Kubernetes cluster up and running. The kubectl script in Kubernetes is the CLI for running commands against running clusters. Think of kops as kubectl for clusters.

This blog shows how to create a highly available Kubernetes cluster on Amazon using kops. Once the cluster is created, it creates a Couchbase service on the cluster and runs a Spring Boot application that stores a JSON document in the database.

Many thanks to justinsb, sarahz, razic, jaygorrell, shrugs, bkpandey, and others on the Kubernetes Slack channel for helping me through the details!

Download kops and kubectl

- Download the latest kops release. This blog was tested with 1.4.1. The complete set of commands for kops can be seen:

```
kops-darwin-amd64 --help
kops is kubernetes ops.
It allows you to create, destroy, upgrade and maintain clusters.

Usage:
  kops [command]

Available Commands:
  create         create resources
  delete         delete clusters
  describe       describe objects
  edit           edit items
  export         export clusters/kubecfg
  get            list or get objects
  import         import clusters
  rolling-update rolling update clusters
  secrets        Manage secrets & keys
  toolbox        Misc infrequently used commands
  update         update clusters
  upgrade        upgrade clusters
  version        Print the client version information

Flags:
      --alsologtostderr                  log to standard error as well as files
      --config string                    config file (default is $HOME/.kops.yaml)
      --log_backtrace_at traceLocation   when logging hits line file:N, emit a stack trace (default :0)
      --log_dir string                   If non-empty, write log files in this directory
      --logtostderr                      log to standard error instead of files (default false)
      --name string                      Name of cluster
      --state string                     Location of state storage
      --stderrthreshold severity         logs at or above this threshold go to stderr (default 2)
  -v, --v Level                          log level for V logs
      --vmodule moduleSpec               comma-separated list of pattern=N settings for file-filtered logging

Use "kops [command] --help" for more information about a command.
```

- Download kubectl:

```
curl -Lo kubectl https://storage.googleapis.com/kubernetes-release/release/v1.4.1/bin/darwin/amd64/kubectl && chmod +x kubectl
```

- Include kubectl in your PATH.
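One way to put the downloaded binaries on the PATH is sketched below. The install directory `$HOME/bin` and the `BIN_DIR` variable are assumptions made for illustration; any directory you control works equally well. The change to PATH lasts only for the current shell session.

```shell
# Hypothetical install directory; override BIN_DIR to use a different one.
BIN_DIR="${BIN_DIR:-$HOME/bin}"
mkdir -p "$BIN_DIR"

# Move the downloaded binaries into place if they are in the current directory.
for tool in kubectl kops-darwin-amd64; do
  if [ -f "./$tool" ]; then
    chmod +x "./$tool"
    mv "./$tool" "$BIN_DIR/"
  fi
done

# Prepend the directory to PATH unless it is already listed.
case ":$PATH:" in
  *":$BIN_DIR:"*) ;;
  *) PATH="$BIN_DIR:$PATH"; export PATH ;;
esac
```

To make the change permanent, the same PATH line can be added to your shell profile.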

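Create Bucket and NS records on Amazon

Some setup is involved at this time; hopefully this will be cleaned up in upcoming releases. Bringing up a cluster on AWS provides detailed steps and more background.

Here is what this blog followed: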

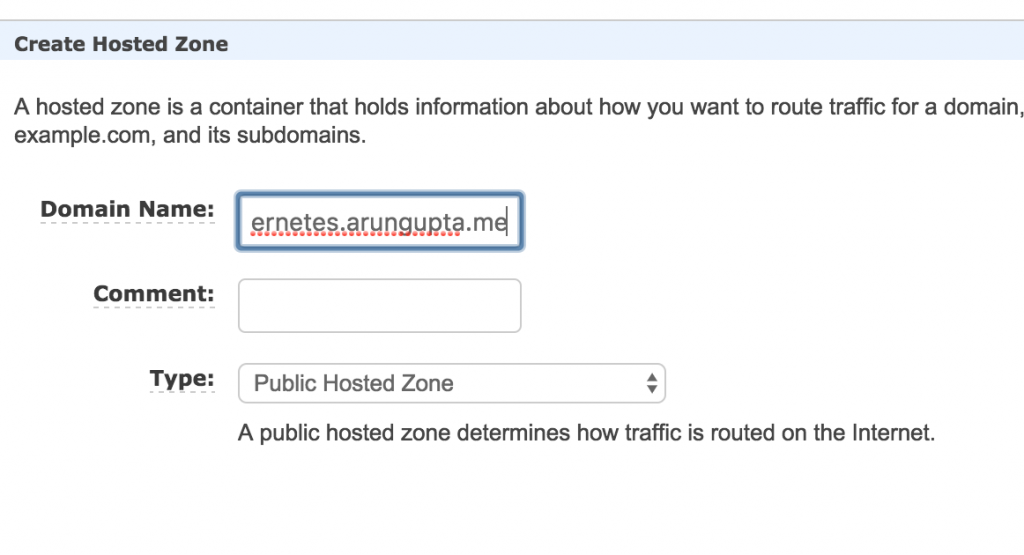

- 쿠버네티스 클러스터를 호스팅할 도메인을 선택합니다. 이 블로그는

kubernetes.arungupta.me도메인을 선택합니다. 최상위 도메인 또는 하위 도메인을 선택할 수 있습니다. - 아마존 루트 53 는 가용성과 확장성이 뛰어난 DNS 서비스입니다. 로그인 Amazon 콘솔 를 생성하고 호스트 영역 라우트 53 서비스를 사용하는 이 도메인에 대해

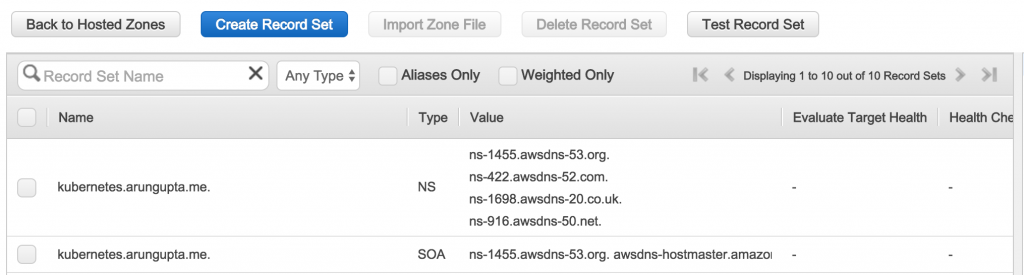

생성된 영역은 다음과 같습니다:

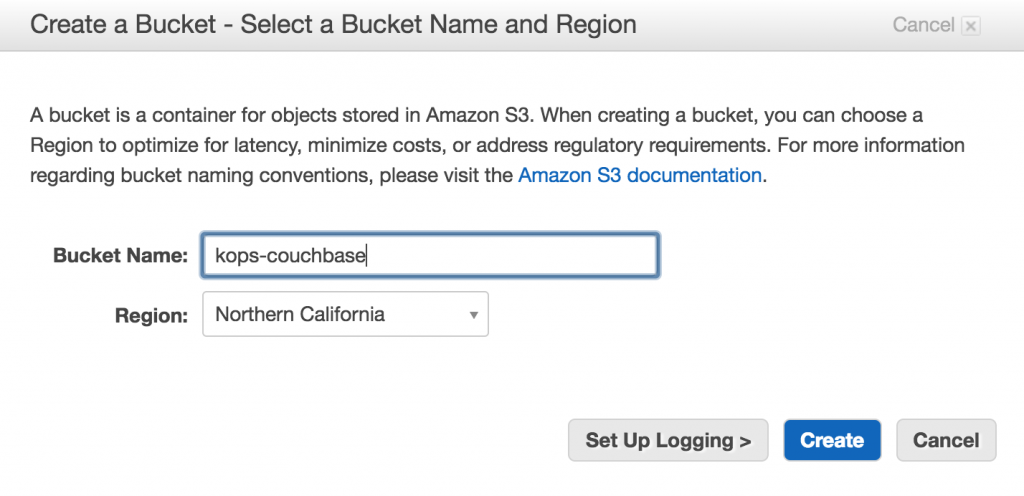

에 표시된 값은가치열은 나중에 NS 레코드를 만드는 데 사용되므로 중요합니다. - 다음을 사용하여 S3 버킷을 만듭니다. Amazon 콘솔 를 사용하여 클러스터 구성을 저장합니다.

상태 저장소.

- 도메인

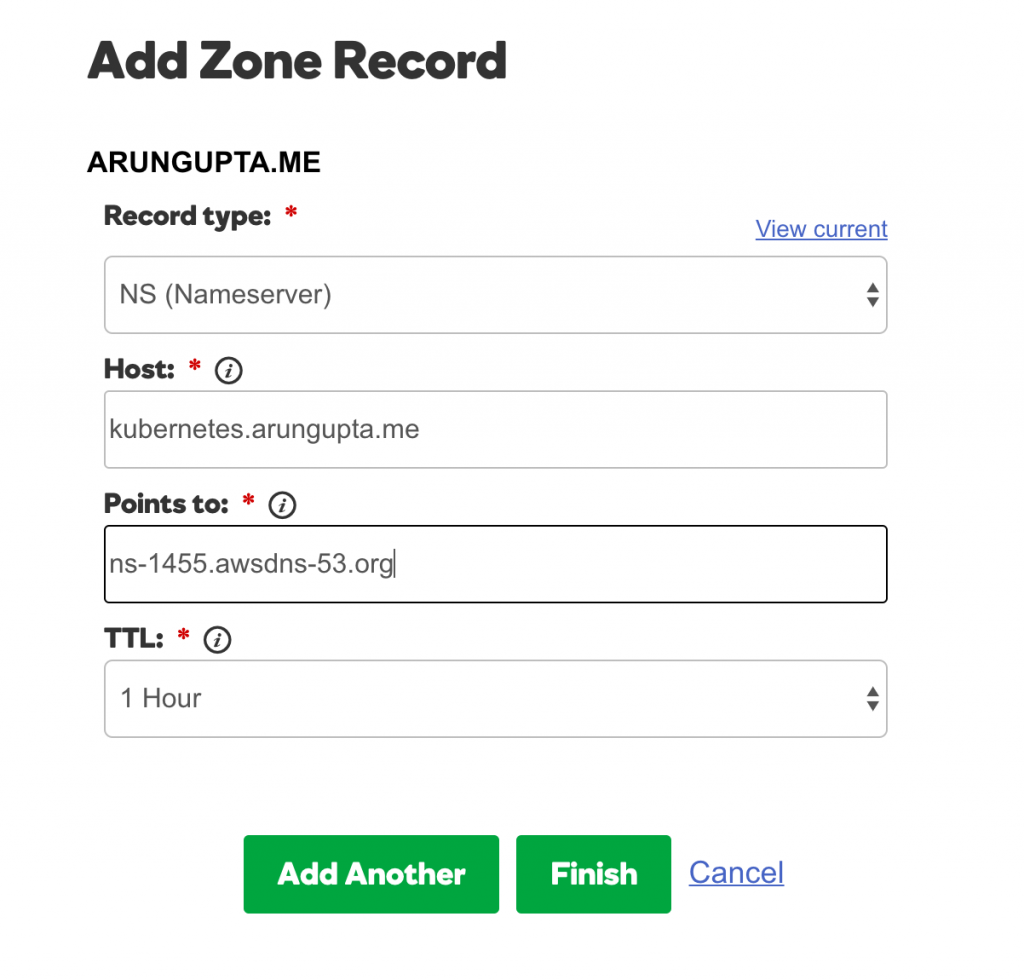

kubernetes.arungupta.me은(는) GoDaddy에서 호스팅됩니다. Route53 호스트 영역의 값 열에 표시된 각 값에 대해 다음을 사용하여 NS 레코드를 만듭니다. GoDaddy 도메인 제어 센터 에 대한

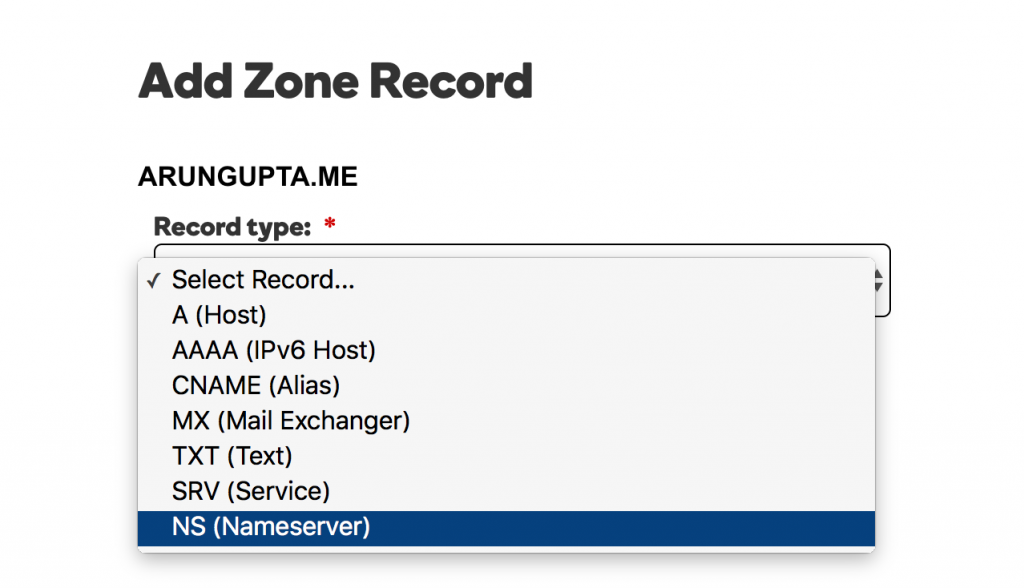

이 도메인.레코드 유형을 선택합니다:

각 값에 대해 그림과 같이 레코드를 추가합니다:

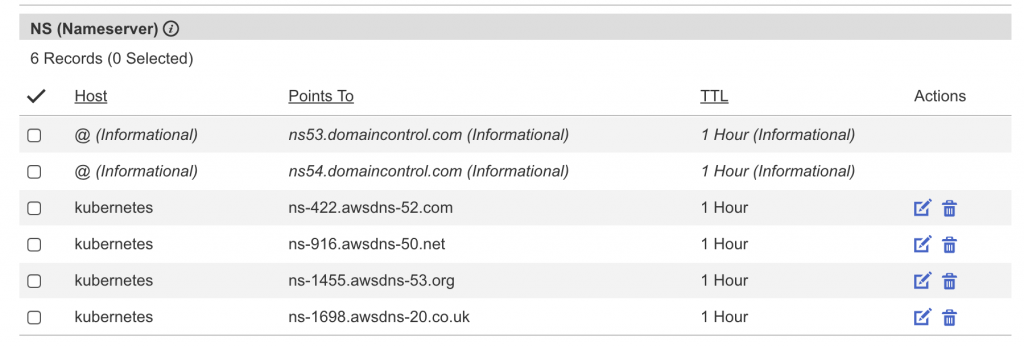

완성된 레코드 세트는 다음과 같습니다:
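Before creating the cluster, it can help to confirm that the NS records at the registrar match the values from the Route 53 hosted zone. The sketch below only compares two lists of name servers; the helper names `normalize_ns` and `same_ns` and the `example.org`/`example.net` values are placeholders for illustration, not values from this setup.

```shell
# Normalize a whitespace-separated list of name servers:
# one per line, lowercase, trailing dot removed, sorted.
normalize_ns() {
  # $1 is left unquoted on purpose so the list splits on whitespace.
  printf '%s\n' $1 | tr '[:upper:]' '[:lower:]' | sed 's/\.$//' | sort
}

# Succeeds when both lists contain the same name servers.
same_ns() {
  [ "$(normalize_ns "$1")" = "$(normalize_ns "$2")" ]
}

# Placeholder data: "expected" stands for the Route 53 Value column,
# "actual" for what a DNS lookup of the domain returns.
expected="ns-1.example.org. ns-2.example.net."
actual="NS-2.example.net
ns-1.example.org"

if same_ns "$expected" "$actual"; then
  echo "name servers match"
else
  echo "name servers differ"
fi
```

In practice the `actual` value could come from a lookup such as `dig +short NS kubernetes.arungupta.me`, keeping in mind that a new delegation can take a while to become visible.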

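Start Kubernetes multimaster cluster

Let's understand a bit about Amazon regions and zones:

Amazon EC2 is hosted in multiple locations worldwide. These locations are composed of regions and Availability Zones. Each region is a separate geographic area. Each region has multiple, isolated locations known as Availability Zones.

A highly available Kubernetes cluster can be created across zones but not across regions.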

- Find out the availability zones within a region:

```
aws ec2 describe-availability-zones --region us-west-2
{
    "AvailabilityZones": [
        {
            "State": "available",
            "RegionName": "us-west-2",
            "Messages": [],
            "ZoneName": "us-west-2a"
        },
        {
            "State": "available",
            "RegionName": "us-west-2",
            "Messages": [],
            "ZoneName": "us-west-2b"
        },
        {
            "State": "available",
            "RegionName": "us-west-2",
            "Messages": [],
            "ZoneName": "us-west-2c"
        }
    ]
}
```

- Create a multi-master cluster:

```
kops-darwin-amd64 create cluster --name=kubernetes.arungupta.me --cloud=aws --zones=us-west-2a,us-west-2b,us-west-2c --master-size=m4.large --node-count=3 --node-size=m4.2xlarge --master-zones=us-west-2a,us-west-2b,us-west-2c --state=s3://kops-couchbase --yes
```

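Most of the switches are self-explanatory. Some need a bit of explanation:

- Specifying multiple zones using --master-zones (must be an odd number) creates multiple masters across AZs.
- --cloud=aws is optional if the cloud can be inferred from the zones.
- --yes specifies that the cluster should be created immediately. Otherwise only the state is stored in the bucket, and the cluster needs to be created separately.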
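Since --master-zones must list an odd number of zones, a small pre-flight check can catch a mistyped list before kops is invoked. This is a sketch only; the helper names `zone_count` and `has_odd_zone_count` are made up for illustration. An odd count is the usual choice for quorum-based stores such as etcd, which needs a majority of members to stay available.

```shell
# Count the non-empty entries in a comma-separated zone list.
zone_count() {
  printf '%s\n' "$1" | tr ',' '\n' | grep -c .
}

# Succeeds only when the list has an odd number of zones.
has_odd_zone_count() {
  [ $(( $(zone_count "$1") % 2 )) -eq 1 ]
}

MASTER_ZONES="us-west-2a,us-west-2b,us-west-2c"
if has_odd_zone_count "$MASTER_ZONES"; then
  echo "ok: $(zone_count "$MASTER_ZONES") master zones"
else
  echo "error: --master-zones needs an odd number of zones" >&2
fi
```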

The complete set of CLI switches can be seen:

```
./kops-darwin-amd64 create cluster --help
Creates a k8s cluster.

Usage:
  kops create cluster [flags]

Flags:
      --admin-access string         Restrict access to admin endpoints (SSH, HTTPS) to this CIDR. If not set, access will not be restricted by IP.
      --associate-public-ip         Specify --associate-public-ip=[true|false] to enable/disable association of public IP for master ASG and nodes. Default is 'true'. (default true)
      --channel string              Channel for default versions and configuration to use (default "stable")
      --cloud string                Cloud provider to use - gce, aws
      --dns-zone string             DNS hosted zone to use (defaults to last two components of cluster name)
      --image string                Image to use
      --kubernetes-version string   Version of kubernetes to run (defaults to version in channel)
      --master-size string          Set instance size for masters
      --master-zones string         Zones in which to run masters (must be an odd number)
      --model string                Models to apply (separate multiple models with commas) (default "config,proto,cloudup")
      --network-cidr string         Set to override the default network CIDR
      --networking string           Networking mode to use. kubenet (default), classic, external. (default "kubenet")
      --node-count int              Set the number of nodes
      --node-size string            Set instance size for nodes
      --out string                  Path to write any local output
      --project string              Project to use (must be set on GCE)
      --ssh-public-key string       SSH public key to use (default "~/.ssh/id_rsa.pub")
      --target string               Target - direct, terraform (default "direct")
      --vpc string                  Set to use a shared VPC
      --yes                         Specify --yes to immediately create the cluster
      --zones string                Zones in which to run the cluster

Global Flags:
      --alsologtostderr                  log to standard error as well as files
      --config string                    config file (default is $HOME/.kops.yaml)
      --log_backtrace_at traceLocation   when logging hits line file:N, emit a stack trace (default :0)
      --log_dir string                   If non-empty, write log files in this directory
      --logtostderr                      log to standard error instead of files (default false)
      --name string                      Name of cluster
      --state string                     Location of state storage
      --stderrthreshold severity         logs at or above this threshold go to stderr (default 2)
  -v, --v Level                          log level for V logs
      --vmodule moduleSpec               comma-separated list of pattern=N settings for file-filtered logging
```
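If --yes is left off, creation becomes a two-step process: the first command only writes the cluster state to the bucket, and a second command applies it. The sketch below prints both commands for review rather than running them. The use of `update cluster` with `--yes` for the second step is an assumption based on the `update` command in the kops help output, so check `kops update cluster --help` for your version. The `plan_two_step` function name is made up for illustration.

```shell
KOPS="kops-darwin-amd64"            # binary name used in this post
NAME="kubernetes.arungupta.me"
STATE="s3://kops-couchbase"
ZONES="us-west-2a,us-west-2b,us-west-2c"

# Same switches as the create command in this post, minus --yes.
CREATE_FLAGS="--name=$NAME --cloud=aws --zones=$ZONES --master-size=m4.large --node-count=3 --node-size=m4.2xlarge --master-zones=$ZONES --state=$STATE"

# Print the two commands instead of executing them.
plan_two_step() {
  echo "$KOPS create cluster $CREATE_FLAGS"
  echo "$KOPS update cluster --name=$NAME --state=$STATE --yes"
}

plan_two_step
```

Splitting the steps leaves room to inspect or edit the stored configuration before any AWS resources are created.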

- Once the cluster is created, get more details about the cluster:

```
kubectl cluster-info
Kubernetes master is running at https://api.kubernetes.arungupta.me
KubeDNS is running at https://api.kubernetes.arungupta.me/api/v1/proxy/namespaces/kube-system/services/kube-dns

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.
```

- Check the cluster client and server versions:

```
kubectl version
Client Version: version.Info{Major:"1", Minor:"4", GitVersion:"v1.4.1", GitCommit:"33cf7b9acbb2cb7c9c72a10d6636321fb180b159", GitTreeState:"clean", BuildDate:"2016-10-10T18:19:49Z", GoVersion:"go1.7.1", Compiler:"gc", Platform:"darwin/amd64"}
Server Version: version.Info{Major:"1", Minor:"4", GitVersion:"v1.4.3", GitCommit:"4957b090e9a4f6a68b4a40375408fdc74a212260", GitTreeState:"clean", BuildDate:"2016-10-16T06:20:04Z", GoVersion:"go1.6.3", Compiler:"gc", Platform:"linux/amd64"}
```

- Check all nodes in the cluster:

```
kubectl get nodes
NAME                                           STATUS    AGE
ip-172-20-111-151.us-west-2.compute.internal   Ready     1h
ip-172-20-116-40.us-west-2.compute.internal    Ready     1h
ip-172-20-48-41.us-west-2.compute.internal     Ready     1h
ip-172-20-49-105.us-west-2.compute.internal    Ready     1h
ip-172-20-80-233.us-west-2.compute.internal    Ready     1h
ip-172-20-82-93.us-west-2.compute.internal     Ready     1h
```

Or list only the master nodes:

```
kubectl get nodes -l kubernetes.io/role=master
NAME                                           STATUS    AGE
ip-172-20-111-151.us-west-2.compute.internal   Ready     1h
ip-172-20-48-41.us-west-2.compute.internal     Ready     1h
ip-172-20-82-93.us-west-2.compute.internal     Ready     1h
```

- Check all the clusters:

```
kops-darwin-amd64 get clusters --state=s3://kops-couchbase
NAME                      CLOUD   ZONES
kubernetes.arungupta.me   aws     us-west-2a,us-west-2b,us-west-2c
```
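In a script, the node listing can be reduced to a single number to confirm that all three masters and three workers have joined. The sketch below counts rows whose STATUS column is exactly `Ready`; the function name `count_ready_nodes` is made up for illustration, and the sample input is the first three rows of the node listing in this post.

```shell
# Count nodes whose STATUS column is exactly "Ready" in
# `kubectl get nodes` output read from standard input.
# The header line is skipped.
count_ready_nodes() {
  awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

sample='NAME STATUS AGE
ip-172-20-111-151.us-west-2.compute.internal Ready 1h
ip-172-20-116-40.us-west-2.compute.internal Ready 1h
ip-172-20-48-41.us-west-2.compute.internal Ready 1h'

printf '%s\n' "$sample" | count_ready_nodes
```

Against a live cluster the function would be used as `kubectl get nodes | count_ready_nodes`, and the result compared with the expected node count.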

Kubernetes Dashboard addon

By default, a cluster created using kops does not have the UI dashboard. But it can be added as an add-on:

```
kubectl create -f https://raw.githubusercontent.com/kubernetes/kops/master/addons/kubernetes-dashboard/v1.4.0.yaml
deployment "kubernetes-dashboard-v1.4.0" created
service "kubernetes-dashboard" created
```

Now the complete details about the cluster can be seen:

```
kubectl cluster-info
Kubernetes master is running at https://api.kubernetes.arungupta.me
KubeDNS is running at https://api.kubernetes.arungupta.me/api/v1/proxy/namespaces/kube-system/services/kube-dns
kubernetes-dashboard is running at https://api.kubernetes.arungupta.me/api/v1/proxy/namespaces/kube-system/services/kubernetes-dashboard

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.
```

그리고 Kubernetes UI 대시보드는 표시된 URL에 있습니다. 우리의 경우, 이것은 https://api.kubernetes.arungupta.me/ui 처럼 보입니다:

이 대시보드에 액세스하기 위한 자격 증명은 다음을 사용하여 얻을 수 있습니다. kubectl 구성 보기 명령을 실행합니다. 값은 다음과 같이 표시됩니다:

|

1 2 3 4 |

- name: kubernetes.arungupta.me-basic-auth user: password: PASSWORD username: admin |

카우치베이스 서비스 배포

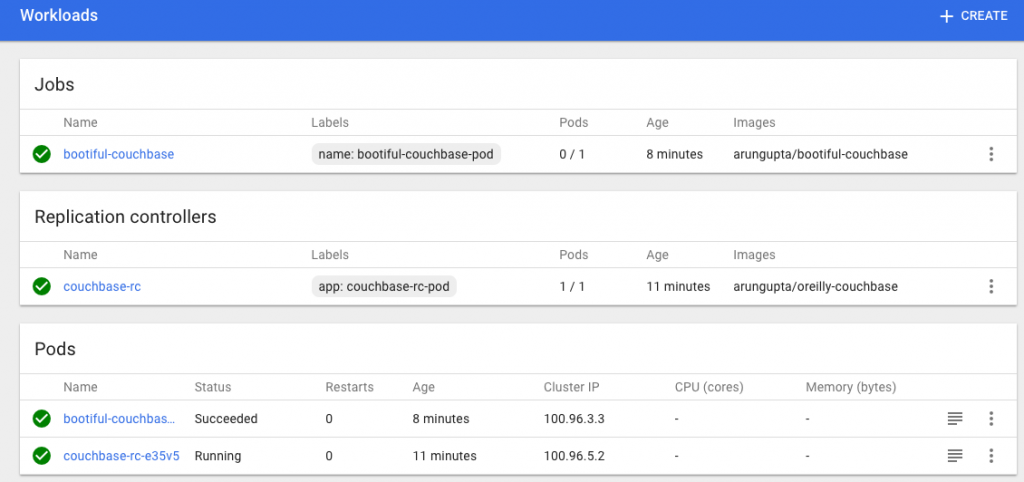

에 설명된 대로 스프링 부트와 카우치베이스를 사용하여 Kubernetes 1.4 시작하기를 사용하여 Couchbase 서비스를 실행해 보겠습니다:

```
kubectl create -f ~/workspaces/kubernetes-java-sample/maven/couchbase-service.yml
service "couchbase-service" created
replicationcontroller "couchbase-rc" created
```

This configuration file is at github.com/arun-gupta/kubernetes-java-sample/blob/master/maven/couchbase-service.yml. Get the list of services:

```
kubectl get svc
NAME                CLUSTER-IP     EXTERNAL-IP   PORT(S)                                AGE
couchbase-service   100.65.4.139   <none>        8091/TCP,8092/TCP,8093/TCP,11210/TCP   27s
kubernetes          100.64.0.1     <none>        443/TCP                                2h
```

Describe the service:

```
kubectl describe svc/couchbase-service
Name:              couchbase-service
Namespace:         default
Labels:            <none>
Selector:          app=couchbase-rc-pod
Type:              ClusterIP
IP:                100.65.4.139
Port:              admin 8091/TCP
Endpoints:         100.96.5.2:8091
Port:              views 8092/TCP
Endpoints:         100.96.5.2:8092
Port:              query 8093/TCP
Endpoints:         100.96.5.2:8093
Port:              memcached 11210/TCP
Endpoints:         100.96.5.2:11210
Session Affinity:  None
```

Get the pods:

```
kubectl get pods
NAME                 READY     STATUS    RESTARTS   AGE
couchbase-rc-e35v5   1/1       Running   0          1m
```
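The Couchbase pod may take a little while to reach Running, so a script that goes on to start the application might want to poll first. Below is a generic retry helper as a sketch; `retry` and `couchbase_running` are hypothetical names, and the grep pattern assumes the `kubectl get pods` column layout shown in this post.

```shell
# Run a command until it succeeds, up to a maximum number of attempts.
# Usage: retry <attempts> <delay-seconds> <command> [args...]
retry() {
  max_attempts=$1
  delay_seconds=$2
  shift 2
  attempt=1
  while [ "$attempt" -le "$max_attempts" ]; do
    if "$@"; then
      return 0
    fi
    # Sleep only between attempts, not after the last one.
    if [ "$attempt" -lt "$max_attempts" ]; then
      sleep "$delay_seconds"
    fi
    attempt=$((attempt + 1))
  done
  return 1
}

# Hypothetical check: succeeds once a couchbase-rc pod reports Running.
couchbase_running() {
  kubectl get pods 2>/dev/null |
    grep -Eq '^couchbase-rc-[^ ]+ +[^ ]+ +Running( |$)'
}
```

A call such as `retry 30 10 couchbase_running` would then wait up to roughly five minutes before giving up.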

Run Spring Boot Application

The Spring Boot application runs against the Couchbase service and stores a JSON document in it. Start the Spring Boot application:

```
kubectl create -f ~/workspaces/kubernetes-java-sample/maven/bootiful-couchbase.yml
job "bootiful-couchbase" created
```

This configuration file is at github.com/arun-gupta/kubernetes-java-sample/blob/master/maven/bootiful-couchbase.yml. See the list of all the pods:

```
kubectl get pods --show-all
NAME                       READY     STATUS      RESTARTS   AGE
bootiful-couchbase-ainv8   0/1       Completed   0          1m
couchbase-rc-e35v5         1/1       Running     0          3m
```
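The application runs as a job, so its pod is expected to end in the Completed state rather than stay Running. A script can pull out the status of the job's pod by name prefix, as in this sketch; `pod_status` is a made-up helper name, and the sample input mirrors the pod listing in this post.

```shell
# Print "<pod-name> <status>" for pods whose name starts with the given prefix.
# Reads `kubectl get pods --show-all` output from standard input.
pod_status() {
  awk -v prefix="$1" 'NR > 1 && index($1, prefix) == 1 { print $1, $3 }'
}

sample='NAME READY STATUS RESTARTS AGE
bootiful-couchbase-ainv8 0/1 Completed 0 1m
couchbase-rc-e35v5 1/1 Running 0 3m'

printf '%s\n' "$sample" | pod_status bootiful-couchbase
```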

Check the logs of the completed pod:

```
kubectl logs bootiful-couchbase-ainv8

  .   ____          _            __ _ _
 /\\ / ___'_ __ _ _(_)_ __  __ _ \ \ \ \
( ( )\___ | '_ | '_| | '_ \/ _` | \ \ \ \
 \\/  ___)| |_)| | | | | || (_| |  ) ) ) )
  '  |____| .__|_| |_|_| |_\__, | / / / /
 =========|_|==============|___/=/_/_/_/
 :: Spring Boot ::        (v1.4.0.RELEASE)

2016-11-02 18:48:56.035 INFO 7 --- [ main] org.example.webapp.Application : Starting Application v1.0-SNAPSHOT on bootiful-couchbase-ainv8 with PID 7 (/maven/bootiful-couchbase.jar started by root in /)
2016-11-02 18:48:56.040 INFO 7 --- [ main] org.example.webapp.Application : No active profile set, falling back to default profiles: default
2016-11-02 18:48:56.115 INFO 7 --- [ main] s.c.a.AnnotationConfigApplicationContext : Refreshing org.springframework.context.annotation.AnnotationConfigApplicationContext@108c4c35: startup date [Wed Nov 02 18:48:56 UTC 2016]; root of context hierarchy
2016-11-02 18:48:57.021 INFO 7 --- [ main] com.couchbase.client.core.CouchbaseCore : CouchbaseEnvironment: {sslEnabled=false, sslKeystoreFile='null', sslKeystorePassword='null', queryEnabled=false, queryPort=8093, bootstrapHttpEnabled=true, bootstrapCarrierEnabled=true, bootstrapHttpDirectPort=8091, bootstrapHttpSslPort=18091, bootstrapCarrierDirectPort=11210, bootstrapCarrierSslPort=11207, ioPoolSize=8, computationPoolSize=8, responseBufferSize=16384, requestBufferSize=16384, kvServiceEndpoints=1, viewServiceEndpoints=1, queryServiceEndpoints=1, searchServiceEndpoints=1, ioPool=NioEventLoopGroup, coreScheduler=CoreScheduler, eventBus=DefaultEventBus, packageNameAndVersion=couchbase-java-client/2.2.8 (git: 2.2.8, core: 1.2.9), dcpEnabled=false, retryStrategy=BestEffort, maxRequestLifetime=75000, retryDelay=ExponentialDelay{growBy 1.0 MICROSECONDS, powers of 2; lower=100, upper=100000}, reconnectDelay=ExponentialDelay{growBy 1.0 MILLISECONDS, powers of 2; lower=32, upper=4096}, observeIntervalDelay=ExponentialDelay{growBy 1.0 MICROSECONDS, powers of 2; lower=10, upper=100000}, keepAliveInterval=30000, autoreleaseAfter=2000, bufferPoolingEnabled=true, tcpNodelayEnabled=true, mutationTokensEnabled=false, socketConnectTimeout=1000, dcpConnectionBufferSize=20971520, dcpConnectionBufferAckThreshold=0.2, dcpConnectionName=dcp/core-io, callbacksOnIoPool=false, queryTimeout=7500, viewTimeout=7500, kvTimeout=2500, connectTimeout=5000, disconnectTimeout=25000, dnsSrvEnabled=false}
2016-11-02 18:48:57.245 INFO 7 --- [ cb-io-1-1] com.couchbase.client.core.node.Node : Connected to Node couchbase-service
2016-11-02 18:48:57.291 INFO 7 --- [ cb-io-1-1] com.couchbase.client.core.node.Node : Disconnected from Node couchbase-service
2016-11-02 18:48:57.533 INFO 7 --- [ cb-io-1-2] com.couchbase.client.core.node.Node : Connected to Node couchbase-service
2016-11-02 18:48:57.638 INFO 7 --- [-computations-4] c.c.c.core.config.ConfigurationProvider : Opened bucket books
2016-11-02 18:48:58.152 INFO 7 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Registering beans for JMX exposure on startup
Book{isbn=978-1-4919-1889-0, name=Minecraft Modding with Forge, cost=29.99}
2016-11-02 18:48:58.402 INFO 7 --- [ main] org.example.webapp.Application : Started Application in 2.799 seconds (JVM running for 3.141)
2016-11-02 18:48:58.403 INFO 7 --- [ Thread-5] s.c.a.AnnotationConfigApplicationContext : Closing org.springframework.context.annotation.AnnotationConfigApplicationContext@108c4c35: startup date [Wed Nov 02 18:48:56 UTC 2016]; root of context hierarchy
2016-11-02 18:48:58.404 INFO 7 --- [ Thread-5] o.s.j.e.a.AnnotationMBeanExporter : Unregistering JMX-exposed beans on shutdown
2016-11-02 18:48:58.410 INFO 7 --- [ cb-io-1-2] com.couchbase.client.core.node.Node : Disconnected from Node couchbase-service
2016-11-02 18:48:58.410 INFO 7 --- [ Thread-5] c.c.c.core.config.ConfigurationProvider : Closed bucket books
```

Delete the Kubernetes cluster

The Kubernetes cluster can be deleted as:

```
kops-darwin-amd64 delete cluster --name=kubernetes.arungupta.me --state=s3://kops-couchbase --yes
TYPE                  NAME  ID
autoscaling-config    master-us-west-2a.masters.kubernetes.arungupta.me-20161101235639  master-us-west-2a.masters.kubernetes.arungupta.me-20161101235639
autoscaling-config    master-us-west-2b.masters.kubernetes.arungupta.me-20161101235639  master-us-west-2b.masters.kubernetes.arungupta.me-20161101235639
autoscaling-config    master-us-west-2c.masters.kubernetes.arungupta.me-20161101235639  master-us-west-2c.masters.kubernetes.arungupta.me-20161101235639
autoscaling-config    nodes.kubernetes.arungupta.me-20161101235639  nodes.kubernetes.arungupta.me-20161101235639
autoscaling-group     master-us-west-2a.masters.kubernetes.arungupta.me  master-us-west-2a.masters.kubernetes.arungupta.me
autoscaling-group     master-us-west-2b.masters.kubernetes.arungupta.me  master-us-west-2b.masters.kubernetes.arungupta.me
autoscaling-group     master-us-west-2c.masters.kubernetes.arungupta.me  master-us-west-2c.masters.kubernetes.arungupta.me
autoscaling-group     nodes.kubernetes.arungupta.me  nodes.kubernetes.arungupta.me
dhcp-options          kubernetes.arungupta.me  dopt-9b7b08ff
iam-instance-profile  masters.kubernetes.arungupta.me  masters.kubernetes.arungupta.me
iam-instance-profile  nodes.kubernetes.arungupta.me  nodes.kubernetes.arungupta.me
iam-role              masters.kubernetes.arungupta.me  masters.kubernetes.arungupta.me
iam-role              nodes.kubernetes.arungupta.me  nodes.kubernetes.arungupta.me
instance              master-us-west-2a.masters.kubernetes.arungupta.me  i-8798eb9f
instance              master-us-west-2b.masters.kubernetes.arungupta.me  i-eca96ab3
instance              master-us-west-2c.masters.kubernetes.arungupta.me  i-63fd3dbf
instance              nodes.kubernetes.arungupta.me  i-21a96a7e
instance              nodes.kubernetes.arungupta.me  i-57fb3b8b
instance              nodes.kubernetes.arungupta.me  i-5c99ea44
internet-gateway      kubernetes.arungupta.me  igw-b624abd2
keypair               kubernetes.kubernetes.arungupta.me-18:90:41:6f:5f:79:6a:a8:d5:b6:b8:3f:10:d5:d3:f3  kubernetes.kubernetes.arungupta.me-18:90:41:6f:5f:79:6a:a8:d5:b6:b8:3f:10:d5:d3:f3
route-table           kubernetes.arungupta.me  rtb-e44df183
route53-record        api.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/api.internal.kubernetes.arungupta.me.
route53-record        api.kubernetes.arungupta.me.  Z6I41VJM5VCZV/api.kubernetes.arungupta.me.
route53-record        etcd-events-us-west-2a.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-events-us-west-2a.internal.kubernetes.arungupta.me.
route53-record        etcd-events-us-west-2b.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-events-us-west-2b.internal.kubernetes.arungupta.me.
route53-record        etcd-events-us-west-2c.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-events-us-west-2c.internal.kubernetes.arungupta.me.
route53-record        etcd-us-west-2a.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-us-west-2a.internal.kubernetes.arungupta.me.
route53-record        etcd-us-west-2b.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-us-west-2b.internal.kubernetes.arungupta.me.
route53-record        etcd-us-west-2c.internal.kubernetes.arungupta.me.  Z6I41VJM5VCZV/etcd-us-west-2c.internal.kubernetes.arungupta.me.
security-group        masters.kubernetes.arungupta.me  sg-3e790f47
security-group        nodes.kubernetes.arungupta.me  sg-3f790f46
subnet                us-west-2a.kubernetes.arungupta.me  subnet-3cdbc958
subnet                us-west-2b.kubernetes.arungupta.me  subnet-18c3f76e
subnet                us-west-2c.kubernetes.arungupta.me  subnet-b30f6deb
volume                us-west-2a.etcd-events.kubernetes.arungupta.me  vol-202350a8
volume                us-west-2a.etcd-main.kubernetes.arungupta.me  vol-0a235082
volume                us-west-2b.etcd-events.kubernetes.arungupta.me  vol-401f5bf4
volume                us-west-2b.etcd-main.kubernetes.arungupta.me  vol-691f5bdd
volume                us-west-2c.etcd-events.kubernetes.arungupta.me  vol-aefe163b
volume                us-west-2c.etcd-main.kubernetes.arungupta.me  vol-e9fd157c
vpc                   kubernetes.arungupta.me  vpc-e5f50382

internet-gateway:igw-b624abd2 still has dependencies, will retry
keypair:kubernetes.kubernetes.arungupta.me-18:90:41:6f:5f:79:6a:a8:d5:b6:b8:3f:10:d5:d3:f3 ok
instance:i-5c99ea44 ok
instance:i-63fd3dbf ok
instance:i-eca96ab3 ok
instance:i-21a96a7e ok
autoscaling-group:master-us-west-2a.masters.kubernetes.arungupta.me ok
autoscaling-group:master-us-west-2b.masters.kubernetes.arungupta.me ok
autoscaling-group:master-us-west-2c.masters.kubernetes.arungupta.me ok
autoscaling-group:nodes.kubernetes.arungupta.me ok
iam-instance-profile:nodes.kubernetes.arungupta.me ok
iam-instance-profile:masters.kubernetes.arungupta.me ok
instance:i-57fb3b8b ok
instance:i-8798eb9f ok
route53-record:Z6I41VJM5VCZV/etcd-events-us-west-2a.internal.kubernetes.arungupta.me. ok
iam-role:nodes.kubernetes.arungupta.me ok
iam-role:masters.kubernetes.arungupta.me ok
autoscaling-config:nodes.kubernetes.arungupta.me-20161101235639 ok
autoscaling-config:master-us-west-2b.masters.kubernetes.arungupta.me-20161101235639 ok
subnet:subnet-b30f6deb still has dependencies, will retry
subnet:subnet-3cdbc958 still has dependencies, will retry
subnet:subnet-18c3f76e still has dependencies, will retry
autoscaling-config:master-us-west-2a.masters.kubernetes.arungupta.me-20161101235639 ok
autoscaling-config:master-us-west-2c.masters.kubernetes.arungupta.me-20161101235639 ok
volume:vol-0a235082 still has dependencies, will retry
volume:vol-202350a8 still has dependencies, will retry
volume:vol-401f5bf4 still has dependencies, will retry
volume:vol-e9fd157c still has dependencies, will retry
volume:vol-aefe163b still has dependencies, will retry
volume:vol-691f5bdd still has dependencies, will retry
security-group:sg-3f790f46 still has dependencies, will retry
security-group:sg-3e790f47 still has dependencies, will retry
Not all resources deleted; waiting before reattempting deletion
internet-gateway:igw-b624abd2
security-group:sg-3f790f46
volume:vol-aefe163b
route-table:rtb-e44df183
volume:vol-401f5bf4
subnet:subnet-18c3f76e
security-group:sg-3e790f47
volume:vol-691f5bdd
subnet:subnet-3cdbc958
volume:vol-202350a8
volume:vol-0a235082
dhcp-options:dopt-9b7b08ff
subnet:subnet-b30f6deb
volume:vol-e9fd157c
vpc:vpc-e5f50382
internet-gateway:igw-b624abd2 still has dependencies, will retry
volume:vol-e9fd157c still has dependencies, will retry
subnet:subnet-3cdbc958 still has dependencies, will retry
subnet:subnet-18c3f76e still has dependencies, will retry
subnet:subnet-b30f6deb still has dependencies, will retry
volume:vol-0a235082 still has dependencies, will retry
volume:vol-aefe163b still has dependencies, will retry
volume:vol-691f5bdd still has dependencies, will retry
volume:vol-202350a8 still has dependencies, will retry
volume:vol-401f5bf4 still has dependencies, will retry
security-group:sg-3f790f46 still has dependencies, will retry
security-group:sg-3e790f47 still has dependencies, will retry
Not all resources deleted; waiting before reattempting deletion
subnet:subnet-b30f6deb
volume:vol-e9fd157c
vpc:vpc-e5f50382
internet-gateway:igw-b624abd2
security-group:sg-3f790f46
volume:vol-aefe163b
route-table:rtb-e44df183
volume:vol-401f5bf4
subnet:subnet-18c3f76e
security-group:sg-3e790f47
volume:vol-691f5bdd
subnet:subnet-3cdbc958
volume:vol-202350a8
volume:vol-0a235082
dhcp-options:dopt-9b7b08ff
subnet:subnet-18c3f76e still has dependencies, will retry
subnet:subnet-b30f6deb still has dependencies, will retry
internet-gateway:igw-b624abd2 still has dependencies, will retry
subnet:subnet-3cdbc958 still has dependencies, will retry
volume:vol-691f5bdd still has dependencies, will retry
volume:vol-0a235082 still has dependencies, will retry
volume:vol-202350a8 still has dependencies, will retry
volume:vol-401f5bf4 still has dependencies, will retry
volume:vol-aefe163b still has dependencies, will retry
volume:vol-e9fd157c still has dependencies, will retry
security-group:sg-3e790f47 still has dependencies, will retry
security-group:sg-3f790f46 still has dependencies, will retry
Not all resources deleted; waiting before reattempting deletion
internet-gateway:igw-b624abd2
security-group:sg-3f790f46
volume:vol-aefe163b
route-table:rtb-e44df183
volume:vol-401f5bf4
subnet:subnet-18c3f76e
security-group:sg-3e790f47
volume:vol-691f5bdd
subnet:subnet-3cdbc958
volume:vol-202350a8
volume:vol-0a235082
dhcp-options:dopt-9b7b08ff
subnet:subnet-b30f6deb
volume:vol-e9fd157c
vpc:vpc-e5f50382
subnet:subnet-b30f6deb still has dependencies, will retry
volume:vol-202350a8 still has dependencies, will retry
internet-gateway:igw-b624abd2 still has dependencies, will retry
subnet:subnet-18c3f76e still has dependencies, will retry
volume:vol-e9fd157c still has dependencies, will retry
volume:vol-aefe163b still has dependencies, will retry
volume:vol-401f5bf4 still has dependencies, will retry
volume:vol-691f5bdd still has dependencies, will retry
security-group:sg-3e790f47 still has dependencies, will retry
security-group:sg-3f790f46 still has dependencies, will retry
subnet:subnet-3cdbc958 still has dependencies, will retry
volume:vol-0a235082 still has dependencies, will retry
Not all resources deleted; waiting before reattempting deletion
internet-gateway:igw-b624abd2
security-group:sg-3f790f46
volume:vol-aefe163b
route-table:rtb-e44df183
subnet:subnet-18c3f76e
security-group:sg-3e790f47
volume:vol-691f5bdd
volume:vol-401f5bf4
volume:vol-202350a8
subnet:subnet-3cdbc958
volume:vol-0a235082
dhcp-options:dopt-9b7b08ff
subnet:subnet-b30f6deb
volume:vol-e9fd157c
vpc:vpc-e5f50382
subnet:subnet-18c3f76e ok
volume:vol-e9fd157c ok
volume:vol-401f5bf4 ok
volume:vol-0a235082 ok
volume:vol-691f5bdd ok
subnet:subnet-3cdbc958 ok
volume:vol-aefe163b ok
subnet:subnet-b30f6deb ok
internet-gateway:igw-b624abd2 ok
volume:vol-202350a8 ok
security-group:sg-3f790f46 ok
security-group:sg-3e790f47 ok
route-table:rtb-e44df183 ok
vpc:vpc-e5f50382 ok
dhcp-options:dopt-9b7b08ff ok

Cluster deleted
```
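The deletion log is long because kops retries resources that still have dependencies until they can be removed. When the log is saved to a file, a short summary can make it easier to see how many deletions succeeded and how many retry messages were printed. This is a sketch only; `summarize_delete_log` is a made-up helper, and it relies on the message wording shown in the log in this post.

```shell
# Summarize a kops delete log read from standard input: lines ending in
# "ok" versus "still has dependencies, will retry" messages.
summarize_delete_log() {
  awk '
    /[[:space:]]ok$/                       { ok++ }
    /still has dependencies, will retry$/  { retry++ }
    END { printf "deleted=%d retries=%d\n", ok, retry }
  '
}

sample='internet-gateway:igw-b624abd2 still has dependencies, will retry
instance:i-5c99ea44 ok
subnet:subnet-18c3f76e ok'

printf '%s\n' "$sample" | summarize_delete_log
```

The retry count includes repeated messages for the same resource, so it reflects how much waiting happened rather than how many distinct resources were involved.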

couchbase.com/containers provides more details about how to run Couchbase in different container frameworks. More information about Couchbase: