Kubernetes 1.4 was released earlier this week. Read the blog announcement and the CHANGELOG.

There are quite a few new features in this release, but the main ones I'm excited about are:

- Installing Kubernetes with the kubeadm command. This is in addition to the usual mechanism of downloading from https://github.com/kubernetes/kubernetes/releases. The kubeadm init and kubeadm join commands are very similar to docker swarm init and docker swarm join for Docker Swarm Mode.

- Federated ReplicaSets

- ScheduledJob, which allows batch jobs to run at regular intervals

- Constraining pods to a node, plus pod affinity and anti-affinity

- Priority scheduling of pods

- A nice-looking Kubernetes Dashboard (more on this later)

This blog will show how to:

- Create a Kubernetes cluster using Amazon Web Services

- Create a Couchbase service

- Run a Spring Boot application that stores a JSON document in Couchbase

All resource description files used in this blog are at github.com/arun-gupta/kubernetes-java-sample/tree/master/maven.

Start Kubernetes Cluster

Download the binary github.com/kubernetes/kubernetes/releases/download/v1.4.0/kubernetes.tar.gz and extract it. Add kubernetes/cluster to your PATH. Start a 2-node Kubernetes cluster:

```
NUM_NODES=2 NODE_SIZE=m3.medium KUBERNETES_PROVIDER=aws kube-up.sh
```
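The cluster size, node instance type and cloud provider are all passed to kube-up.sh as environment variables. If you want to script that step from a JVM, the sketch below prepares the same invocation with ProcessBuilder. It is only a sketch and assumes kube-up.sh is already on the PATH. The KubeUpLauncher class and its --run switch are hypothetical names used here for illustration.

```java
import java.util.List;
import java.util.Map;

// Hypothetical helper: prepares the kube-up.sh invocation shown above.
// Assumes kube-up.sh is on the PATH (kubernetes/cluster added in the previous step).
class KubeUpLauncher {

    static ProcessBuilder kubeUp(int numNodes, String nodeSize, String provider) {
        ProcessBuilder pb = new ProcessBuilder(List.of("kube-up.sh"));
        // environment() starts as a copy of this JVM's environment; add the cluster settings.
        Map<String, String> env = pb.environment();
        env.put("NUM_NODES", Integer.toString(numNodes));
        env.put("NODE_SIZE", nodeSize);
        env.put("KUBERNETES_PROVIDER", provider);
        // Stream the script's log to this process's console; inheritIO() returns the same builder.
        return pb.inheritIO();
    }

    public static void main(String[] args) throws Exception {
        ProcessBuilder pb = kubeUp(2, "m3.medium", "aws");
        // Hypothetical --run switch: only launch on request, since kube-up.sh provisions billable AWS resources.
        if (args.length > 0 && args[0].equals("--run")) {
            int exitCode = pb.start().waitFor();
            System.out.println("kube-up.sh exited with code " + exitCode);
        } else {
            System.out.println("Prepared " + pb.command() + " with NUM_NODES=" + pb.environment().get("NUM_NODES"));
        }
    }
}
```

Keeping the launch behind an explicit switch means the builder can be inspected without accidentally creating EC2 instances.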

The log will look like this:

```
... Starting cluster in us-west-2a using provider aws
... calling verify-prereqs
... calling kube-up
Starting cluster using os distro: jessie
Uploading to Amazon S3
+++ Staging server tars to S3 Storage: kubernetes-staging-0eaf81fbc51209dd47c13b6d8b424149/devel
upload: ../../../../../var/folders/81/ttv4n16x7p390cttrm_675y00000gn/T/kubernetes.XXXXXX.bCmvLbtK/s3/bootstrap-script to s3://kubernetes-staging-0eaf81fbc51209dd47c13b6d8b424149/devel/bootstrap-script
Uploaded server tars:
  SERVER_BINARY_TAR_URL: https://s3.amazonaws.com/kubernetes-staging-0eaf81fbc51209dd47c13b6d8b424149/devel/kubernetes-server-linux-amd64.tar.gz
  SALT_TAR_URL: https://s3.amazonaws.com/kubernetes-staging-0eaf81fbc51209dd47c13b6d8b424149/devel/kubernetes-salt.tar.gz
  BOOTSTRAP_SCRIPT_URL: https://s3.amazonaws.com/kubernetes-staging-0eaf81fbc51209dd47c13b6d8b424149/devel/bootstrap-script
INSTANCEPROFILE arn:aws:iam::598307997273:instance-profile/kubernetes-master 2016-07-29T15:13:35Z AIPAJF3XKLNKOXOTQOCT4 kubernetes-master /
ROLES arn:aws:iam::598307997273:role/kubernetes-master 2016-07-29T15:13:33Z / AROAI3Q2KFBD5PCKRXCRM kubernetes-master
ASSUMEROLEPOLICYDOCUMENT 2012-10-17
STATEMENT sts:AssumeRole Allow
PRINCIPAL ec2.amazonaws.com
INSTANCEPROFILE arn:aws:iam::598307997273:instance-profile/kubernetes-minion 2016-07-29T15:13:39Z AIPAIYSH5DJA4UPQIP4BE kubernetes-minion /
ROLES arn:aws:iam::598307997273:role/kubernetes-minion 2016-07-29T15:13:37Z / AROAIQ57MPQYSHRPQCT2Q kubernetes-minion
ASSUMEROLEPOLICYDOCUMENT 2012-10-17
STATEMENT sts:AssumeRole Allow
PRINCIPAL ec2.amazonaws.com
Using SSH key with (AWS) fingerprint: SHA256:dX/5wpWuUxYar2NFuGwiZuRiydiZCyx4DGoZ5/jL/j8
Creating vpc.
Adding tag to vpc-6b5b4b0f: Name=kubernetes-vpc
Adding tag to vpc-6b5b4b0f: KubernetesCluster=kubernetes
Using VPC vpc-6b5b4b0f
Adding tag to dopt-8fe770eb: Name=kubernetes-dhcp-option-set
Adding tag to dopt-8fe770eb: KubernetesCluster=kubernetes
Using DHCP option set dopt-8fe770eb
Creating subnet.
Adding tag to subnet-623a0206: KubernetesCluster=kubernetes
Using subnet subnet-623a0206
Creating Internet Gateway.
Using Internet Gateway igw-251eab41
Associating route table.
Creating route table
Adding tag to rtb-d43cedb3: KubernetesCluster=kubernetes
Associating route table rtb-d43cedb3 to subnet subnet-623a0206
Adding route to route table rtb-d43cedb3
Using Route Table rtb-d43cedb3
Creating master security group.
Creating security group kubernetes-master-kubernetes.
Adding tag to sg-d20ca0ab: KubernetesCluster=kubernetes
Creating minion security group.
Creating security group kubernetes-minion-kubernetes.
Adding tag to sg-cd0ca0b4: KubernetesCluster=kubernetes
Using master security group: kubernetes-master-kubernetes sg-d20ca0ab
Using minion security group: kubernetes-minion-kubernetes sg-cd0ca0b4
Creating master disk: size 20GB, type gp2
Adding tag to vol-99a30b11: Name=kubernetes-master-pd
Adding tag to vol-99a30b11: KubernetesCluster=kubernetes
Allocated Elastic IP for master: 52.40.9.27
Adding tag to vol-99a30b11: kubernetes.io/master-ip=52.40.9.27
Generating certs for alternate-names: IP:52.40.9.27,IP:172.20.0.9,IP:10.0.0.1,DNS:kubernetes,DNS:kubernetes.default,DNS:kubernetes.default.svc,DNS:kubernetes.default.svc.cluster.local,DNS:kubernetes-master
Starting Master
Adding tag to i-f95bdae1: Name=kubernetes-master
Adding tag to i-f95bdae1: Role=kubernetes-master
Adding tag to i-f95bdae1: KubernetesCluster=kubernetes
Waiting for master to be ready
Attempt 1 to check for master node
Waiting for instance i-f95bdae1 to be running (currently pending)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be running (currently pending)
Sleeping for 3 seconds...
 [master running]
Attaching IP 52.40.9.27 to instance i-f95bdae1
Attaching persistent data volume (vol-99a30b11) to master
2016-09-29T05:14:28.098Z /dev/sdb i-f95bdae1 attaching vol-99a30b11
cluster "aws_kubernetes" set.
user "aws_kubernetes" set.
context "aws_kubernetes" set.
switched to context "aws_kubernetes".
user "aws_kubernetes-basic-auth" set.
Wrote config for aws_kubernetes to /Users/arungupta/.kube/config
Creating minion configuration
Creating autoscaling group
 0 minions started; waiting
 0 minions started; waiting
 0 minions started; waiting
 0 minions started; waiting
 2 minions started; ready
Waiting for cluster initialization.

  This will continually check to see if the API for kubernetes is reachable.
  This might loop forever if there was some uncaught error during start up.

..............................................................................................................................................................................................................................Kubernetes cluster created.
Sanity checking cluster...
Attempt 1 to check Docker on node @ 54.70.225.33 ...working
Attempt 1 to check Docker on node @ 54.71.36.48 ...working

Kubernetes cluster is running. The master is running at:

  https://52.40.9.27

The user name and password to use is located in /Users/arungupta/.kube/config.

... calling validate-cluster
Waiting for 2 ready nodes. 0 ready nodes, 0 registered. Retrying.
Waiting for 2 ready nodes. 0 ready nodes, 0 registered. Retrying.
Waiting for 2 ready nodes. 0 ready nodes, 0 registered. Retrying.
Waiting for 2 ready nodes. 0 ready nodes, 2 registered. Retrying.
Waiting for 2 ready nodes. 0 ready nodes, 2 registered. Retrying.
Found 2 node(s).
NAME                                         STATUS    AGE
ip-172-20-0-111.us-west-2.compute.internal   Ready     39s
ip-172-20-0-112.us-west-2.compute.internal   Ready     42s
Validate output:
NAME                 STATUS    MESSAGE              ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   {"health": "true"}
etcd-1               Healthy   {"health": "true"}
Cluster validation succeeded
Done, listing cluster services:

Kubernetes master is running at https://52.40.9.27
Elasticsearch is running at https://52.40.9.27/api/v1/proxy/namespaces/kube-system/services/elasticsearch-logging
Heapster is running at https://52.40.9.27/api/v1/proxy/namespaces/kube-system/services/heapster
Kibana is running at https://52.40.9.27/api/v1/proxy/namespaces/kube-system/services/kibana-logging
KubeDNS is running at https://52.40.9.27/api/v1/proxy/namespaces/kube-system/services/kube-dns
kubernetes-dashboard is running at https://52.40.9.27/api/v1/proxy/namespaces/kube-system/services/kubernetes-dashboard
Grafana is running at https://52.40.9.27/api/v1/proxy/namespaces/kube-system/services/monitoring-grafana
InfluxDB is running at https://52.40.9.27/api/v1/proxy/namespaces/kube-system/services/monitoring-influxdb

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.
```

This shows that the Kubernetes cluster started successfully.

Deploy Couchbase Service

Create the Couchbase service and replication controller:

```
kubectl.sh create -f couchbase-service.yml
service "couchbase-service" created
replicationcontroller "couchbase-rc" created
```

The configuration file is at github.com/arun-gupta/kubernetes-java-sample/blob/master/maven/couchbase-service.yml. It creates a Couchbase service and the backing replication controller. The service name is couchbase-service. The Spring Boot application uses this name later to talk to the database. Check the status of the pods:

```
kubectl.sh get -w pods
NAME                 READY     STATUS              RESTARTS   AGE
couchbase-rc-gu9gl   0/1       ContainerCreating   0          6s
NAME                 READY     STATUS    RESTARTS   AGE
couchbase-rc-gu9gl   1/1       Running   0          2m
```

Notice how the pod status changes from ContainerCreating to Running. In between, the image is downloaded and started.
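If you want to wait for this transition from a script instead of watching the terminal, one option is to parse the text output shown above. This is a minimal sketch and assumes the default column layout (NAME READY STATUS RESTARTS AGE). The PodWatch and PodRow names are hypothetical. For anything robust, kubectl's structured output (for example -o json) would be the better source.

```java
import java.util.Optional;

// Hypothetical helper: reads the latest state of one pod from `kubectl get -w pods` text output.
// Assumes the default columns NAME READY STATUS RESTARTS AGE, as in the output above.
class PodWatch {

    static final class PodRow {
        final String name;
        final String ready;   // e.g. "0/1" = ready containers / total containers
        final String status;  // e.g. "ContainerCreating" or "Running"

        PodRow(String name, String ready, String status) {
            this.name = name;
            this.ready = ready;
            this.status = status;
        }

        // Treat the pod as up when every container is ready and STATUS shows Running.
        boolean isUp() {
            String[] parts = ready.split("/");
            return parts.length == 2 && parts[0].equals(parts[1]) && status.equals("Running");
        }
    }

    // Watch output repeats rows for the same pod; the last matching row is the newest state.
    static Optional<PodRow> latest(String output, String podName) {
        PodRow latest = null;
        for (String line : output.split("\\R")) {
            String[] cols = line.trim().split("\\s+");
            if (cols.length >= 3 && cols[0].equals(podName)) {
                latest = new PodRow(cols[0], cols[1], cols[2]);
            }
        }
        return Optional.ofNullable(latest);
    }

    public static void main(String[] args) {
        String output = String.join("\n",
                "NAME                 READY     STATUS              RESTARTS   AGE",
                "couchbase-rc-gu9gl   0/1       ContainerCreating   0          6s",
                "NAME                 READY     STATUS    RESTARTS   AGE",
                "couchbase-rc-gu9gl   1/1       Running   0          2m");
        latest(output, "couchbase-rc-gu9gl").ifPresent(row ->
                System.out.println(row.name + " " + row.status + " up=" + row.isUp()));
    }
}
```

Running main prints couchbase-rc-gu9gl Running up=true, because the second row supersedes the ContainerCreating row.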

Run Spring Boot Application

Run the application:

```
kubectl.sh create -f bootiful-couchbase.yml
pod "bootiful-couchbase" created
```

The configuration file is at github.com/arun-gupta/kubernetes-java-sample/blob/master/maven/bootiful-couchbase.yml. In this pod, COUCHBASE_URI is set to couchbase-service, the name of the service created earlier. The Docker image used for this pod is arungupta/bootiful-couchbase. It is built with fabric8-maven-plugin as shown in github.com/arun-gupta/kubernetes-java-sample/blob/master/maven/webapp/pom.xml#L57-L68.
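Setting COUCHBASE_URI to the bare service name works because the pod and the service were both created without an explicit namespace. They therefore presumably share the default namespace, and the cluster DNS add-on (KubeDNS in the service list above) resolves the short name. The fully qualified form follows the pattern service.namespace.svc.cluster-domain, the same pattern as kubernetes.default.svc.cluster.local in the certificate names from the kube-up.sh log. The sketch below assumes the default namespace and the cluster.local domain. ServiceDns is a hypothetical name.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

// Hypothetical helper illustrating Kubernetes service DNS naming:
// <service>.<namespace>.svc.<cluster-domain>
// Assumes the "default" namespace and the "cluster.local" cluster domain.
class ServiceDns {

    static String fqdn(String service, String namespace, String clusterDomain) {
        return service + "." + namespace + ".svc." + clusterDomain;
    }

    public static void main(String[] args) {
        String shortName = "couchbase-service"; // the value given to COUCHBASE_URI
        System.out.println("Fully qualified name: " + fqdn(shortName, "default", "cluster.local"));
        try {
            // Only resolvable from inside the cluster, through the cluster DNS add-on.
            System.out.println("Resolved to " + InetAddress.getByName(shortName).getHostAddress());
        } catch (UnknownHostException e) {
            System.out.println(shortName + " is not resolvable from this machine");
        }
    }
}
```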

Specifically, the command for the Docker image is:

```
java -Dspring.couchbase.bootstrap-hosts=$COUCHBASE_URI -jar /maven/${project.artifactId}.jar
```
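Passing the value with -D matters because Spring Boot gives Java system properties higher precedence than entries in application.properties. The sketch below is a deliberately simplified illustration of that one rule and is not Spring's property-resolution code. The PropertyPrecedence class is hypothetical, and localhost is a hypothetical stand-in for whatever value the sample's application.properties actually contains.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.Properties;

// Simplified illustration (not Spring Boot's implementation): a JVM system property
// set with -D wins over the same key from application.properties.
class PropertyPrecedence {

    static String resolve(String key, Properties applicationProperties, Properties systemProperties) {
        String fromSystem = systemProperties.getProperty(key);
        return fromSystem != null ? fromSystem : applicationProperties.getProperty(key);
    }

    public static void main(String[] args) throws IOException {
        Properties app = new Properties();
        // Hypothetical default standing in for the application's application.properties entry.
        app.load(new StringReader("spring.couchbase.bootstrap-hosts=localhost\n"));
        String key = "spring.couchbase.bootstrap-hosts";
        // Start the JVM with -Dspring.couchbase.bootstrap-hosts=couchbase-service to see the override.
        System.out.println(key + " = " + resolve(key, app, System.getProperties()));
    }
}
```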

The -D option ensures that the value of COUCHBASE_URI overrides the spring.couchbase.bootstrap-hosts property defined in the Spring Boot application's application.properties. Get the logs:

```
kubectl.sh logs -f bootiful-couchbase

  .   ____          _            __ _ _
 /\\ / ___'_ __ _ _(_)_ __  __ _ \ \ \ \
( ( )\___ | '_ | '_| | '_ \/ _` | \ \ \ \
 \\/  ___)| |_)| | | | | || (_| |  ) ) ) )
  '  |____| .__|_| |_|_| |_\__, | / / / /
 =========|_|==============|___/=/_/_/_/
 :: Spring Boot ::        (v1.4.0.RELEASE)

2016-09-29 05:37:29.227 INFO 5 --- [ main] org.example.webapp.Application : Starting Application v1.0-SNAPSHOT on bootiful-couchbase with PID 5 (/maven/bootiful-couchbase.jar started by root in /)
2016-09-29 05:37:29.259 INFO 5 --- [ main] org.example.webapp.Application : No active profile set, falling back to default profiles: default
2016-09-29 05:37:29.696 INFO 5 --- [ main] s.c.a.AnnotationConfigApplicationContext : Refreshing org.springframework.context.annotation.AnnotationConfigApplicationContext@4ccabbaa: startup date [Thu Sep 29 05:37:29 UTC 2016]; root of context hierarchy
2016-09-29 05:37:34.375 INFO 5 --- [ main] c.c.client.core.env.CoreEnvironment : ioPoolSize is less than 3 (1), setting to: 3
2016-09-29 05:37:34.376 INFO 5 --- [ main] c.c.client.core.env.CoreEnvironment : computationPoolSize is less than 3 (1), setting to: 3
2016-09-29 05:37:35.026 INFO 5 --- [ main] com.couchbase.client.core.CouchbaseCore : CouchbaseEnvironment: {sslEnabled=false, sslKeystoreFile='null', sslKeystorePassword='null', queryEnabled=false, queryPort=8093, bootstrapHttpEnabled=true, bootstrapCarrierEnabled=true, bootstrapHttpDirectPort=8091, bootstrapHttpSslPort=18091, bootstrapCarrierDirectPort=11210, bootstrapCarrierSslPort=11207, ioPoolSize=3, computationPoolSize=3, responseBufferSize=16384, requestBufferSize=16384, kvServiceEndpoints=1, viewServiceEndpoints=1, queryServiceEndpoints=1, searchServiceEndpoints=1, ioPool=NioEventLoopGroup, coreScheduler=CoreScheduler, eventBus=DefaultEventBus, packageNameAndVersion=couchbase-java-client/2.2.8 (git: 2.2.8, core: 1.2.9), dcpEnabled=false, retryStrategy=BestEffort, maxRequestLifetime=75000, retryDelay=ExponentialDelay{growBy 1.0 MICROSECONDS, powers of 2; lower=100, upper=100000}, reconnectDelay=ExponentialDelay{growBy 1.0 MILLISECONDS, powers of 2; lower=32, upper=4096}, observeIntervalDelay=ExponentialDelay{growBy 1.0 MICROSECONDS, powers of 2; lower=10, upper=100000}, keepAliveInterval=30000, autoreleaseAfter=2000, bufferPoolingEnabled=true, tcpNodelayEnabled=true, mutationTokensEnabled=false, socketConnectTimeout=1000, dcpConnectionBufferSize=20971520, dcpConnectionBufferAckThreshold=0.2, dcpConnectionName=dcp/core-io, callbacksOnIoPool=false, queryTimeout=7500, viewTimeout=7500, kvTimeout=2500, connectTimeout=5000, disconnectTimeout=25000, dnsSrvEnabled=false}
2016-09-29 05:37:36.063 INFO 5 --- [ cb-io-1-1] com.couchbase.client.core.node.Node : Connected to Node couchbase-service
2016-09-29 05:37:36.256 INFO 5 --- [ cb-io-1-1] com.couchbase.client.core.node.Node : Disconnected from Node couchbase-service
2016-09-29 05:37:37.727 INFO 5 --- [ cb-io-1-2] com.couchbase.client.core.node.Node : Connected to Node couchbase-service
2016-09-29 05:37:38.316 INFO 5 --- [-computations-3] c.c.c.core.config.ConfigurationProvider : Opened bucket books
2016-09-29 05:37:40.655 INFO 5 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Registering beans for JMX exposure on startup
Book{isbn=978-1-4919-1889-0, name=Minecraft Modding with Forge, cost=29.99}
2016-09-29 05:37:41.497 INFO 5 --- [ main] org.example.webapp.Application : Started Application in 14.64 seconds (JVM running for 16.631)
2016-09-29 05:37:41.514 INFO 5 --- [ Thread-5] s.c.a.AnnotationConfigApplicationContext : Closing org.springframework.context.annotation.AnnotationConfigApplicationContext@4ccabbaa: startup date [Thu Sep 29 05:37:29 UTC 2016]; root of context hierarchy
2016-09-29 05:37:41.528 INFO 5 --- [ Thread-5] o.s.j.e.a.AnnotationMBeanExporter : Unregistering JMX-exposed beans on shutdown
2016-09-29 05:37:41.577 INFO 5 --- [ cb-io-1-2] com.couchbase.client.core.node.Node : Disconnected from Node couchbase-service
2016-09-29 05:37:41.578 INFO 5 --- [ Thread-5] c.c.c.core.config.ConfigurationProvider : Closed bucket books
```

The main output statement to look for is:

```
Book{isbn=978-1-4919-1889-0, name=Minecraft Modding with Forge, cost=29.99}
```

This indicates that the JSON document was upserted (inserted or updated) in the Couchbase database.
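Upsert semantics mean that a write under an existing document ID replaces that document, and otherwise a new one is inserted. The in-memory sketch below illustrates only that behavior. The Book fields come from the log line above. Using the ISBN as the document ID, and the HashMap standing in for the Couchbase bucket, are assumptions for illustration and not the sample's actual code.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical illustration of upsert semantics; not the sample application's code.
class UpsertSketch {

    // Hypothetical stand-in for the sample's Book entity; fields taken from the log line.
    static final class Book {
        final String isbn;
        final String name;
        final double cost;

        Book(String isbn, String name, double cost) {
            this.isbn = isbn;
            this.name = name;
            this.cost = cost;
        }

        // Same shape as the log output: Book{isbn=..., name=..., cost=...}
        @Override
        public String toString() {
            return "Book{isbn=" + isbn + ", name=" + name + ", cost=" + cost + "}";
        }
    }

    // In-memory stand-in for a bucket: document ID -> document.
    private final Map<String, Book> bucket = new HashMap<>();

    // Upsert: store the document whether or not the ID already exists.
    // Returns true if this call inserted a new document, false if it replaced one.
    boolean upsert(String id, Book doc) {
        return bucket.put(id, doc) == null;
    }

    Book get(String id) {
        return bucket.get(id);
    }

    public static void main(String[] args) {
        UpsertSketch store = new UpsertSketch();
        Book book = new Book("978-1-4919-1889-0", "Minecraft Modding with Forge", 29.99);
        System.out.println(store.upsert(book.isbn, book)); // true: first write inserts
        System.out.println(store.upsert(book.isbn, book)); // false: second write replaces
        System.out.println(store.get(book.isbn));
    }
}
```

Because an upsert never fails on an existing or missing ID, running the application repeatedly against the same bucket keeps working. A strict insert would fail on the second run.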

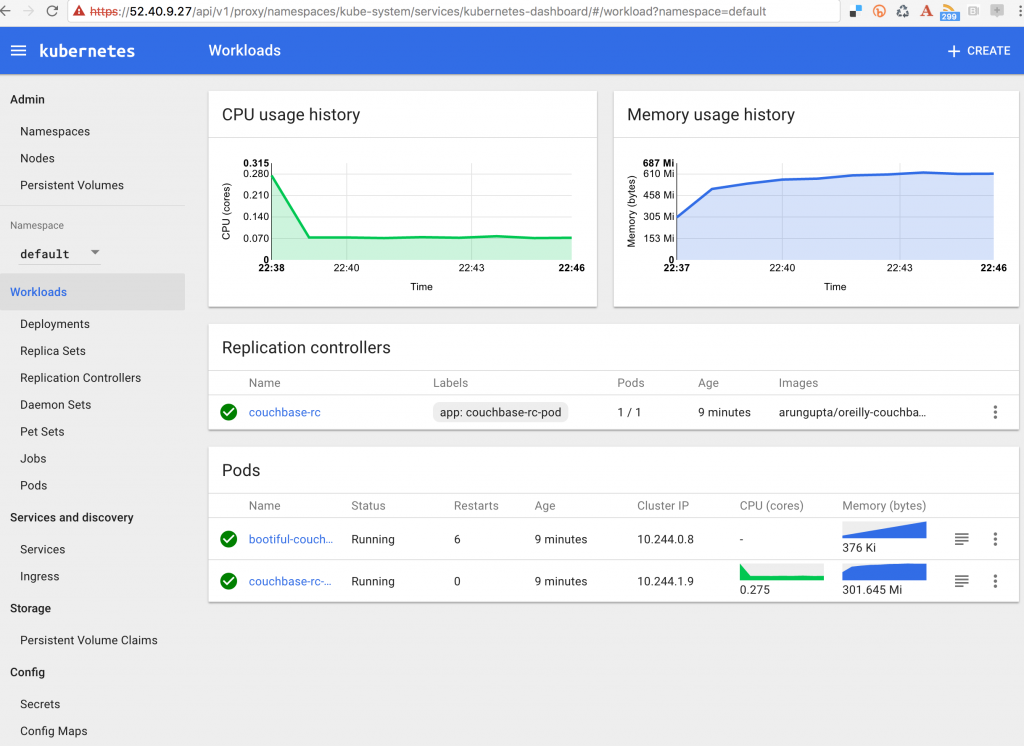

Kubernetes Dashboard

Kubernetes Dashboard looks more comprehensive and claims to have 90% parity with the CLI. Use kubectl.sh config view to view the cluster's configuration. It looks like this:

```
apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: REDACTED
    server: https://52.40.9.27
  name: aws_kubernetes
contexts:
- context:
    cluster: aws_kubernetes
    user: aws_kubernetes
  name: aws_kubernetes
current-context: aws_kubernetes
kind: Config
preferences: {}
users:
- name: aws_kubernetes
  user:
    client-certificate-data: REDACTED
    client-key-data: REDACTED
    token: 3GuTCLvFnINHed9dWICICidlrSv8C0kg
- name: aws_kubernetes-basic-auth
  user:
    password: 8pxC121Oj7kN0nCa
    username: admin
```

clusters.cluster.server shows the location of the Kubernetes master. users lists two users that can access the Dashboard. The second one uses basic authentication, so copy the values of its username and password properties. In our case, the Dashboard UI is accessible at https://52.40.9.27/ui.

All Kubernetes resources can be easily viewed in this elegant dashboard.
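A browser prompts for the basic-auth credentials. Scripted clients instead send them in an HTTP Authorization header, as the word Basic followed by the Base64 encoding of username:password (RFC 7617). A minimal sketch follows. The BasicAuth name and the admin/admin credentials in main are placeholders; substitute the username and password from kubectl.sh config view.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper: builds an HTTP Basic Authorization header value (RFC 7617).
class BasicAuth {

    static String header(String username, String password) {
        String credentials = username + ":" + password;
        return "Basic " + Base64.getEncoder().encodeToString(credentials.getBytes(StandardCharsets.UTF_8));
    }

    public static void main(String[] args) {
        // Placeholder credentials; use the username/password from the basic-auth user in kubeconfig.
        System.out.println("Authorization: " + header("admin", "admin"));
    }
}
```

Running main prints Authorization: Basic YWRtaW46YWRtaW4=. Note that Base64 is an encoding, not encryption, so these credentials should only travel over HTTPS, as the master URL here does.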

Shut Down Kubernetes Cluster

Finally, shut down the Kubernetes cluster:

```
kube-down.sh
Bringing down cluster using provider: aws
Deleting instances in VPC: vpc-6b5b4b0f
Deleting auto-scaling group: kubernetes-minion-group-us-west-2a
Deleting auto-scaling launch configuration: kubernetes-minion-group-us-west-2a
Deleting auto-scaling group: kubernetes-minion-group-us-west-2a
Waiting for instances to be deleted
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
Waiting for instance i-f95bdae1 to be terminated (currently shutting-down)
Sleeping for 3 seconds...
All instances deleted
Releasing Elastic IP: 52.40.9.27
Deleting volume vol-99a30b11
Cleaning up resources in VPC: vpc-6b5b4b0f
Cleaning up security group: sg-cd0ca0b4
Cleaning up security group: sg-d20ca0ab
Deleting security group: sg-cd0ca0b4
Deleting security group: sg-d20ca0ab
Deleting VPC: vpc-6b5b4b0f
Done
```

https://www.couchbase.com/products/cloud/kubernetes provides more details on running Couchbase with different orchestration frameworks. Further references:

- Couchbase Forums or StackOverflow

- Follow us at @couchbasedev or @couchbase

- Learn more about Couchbase Server