Paper

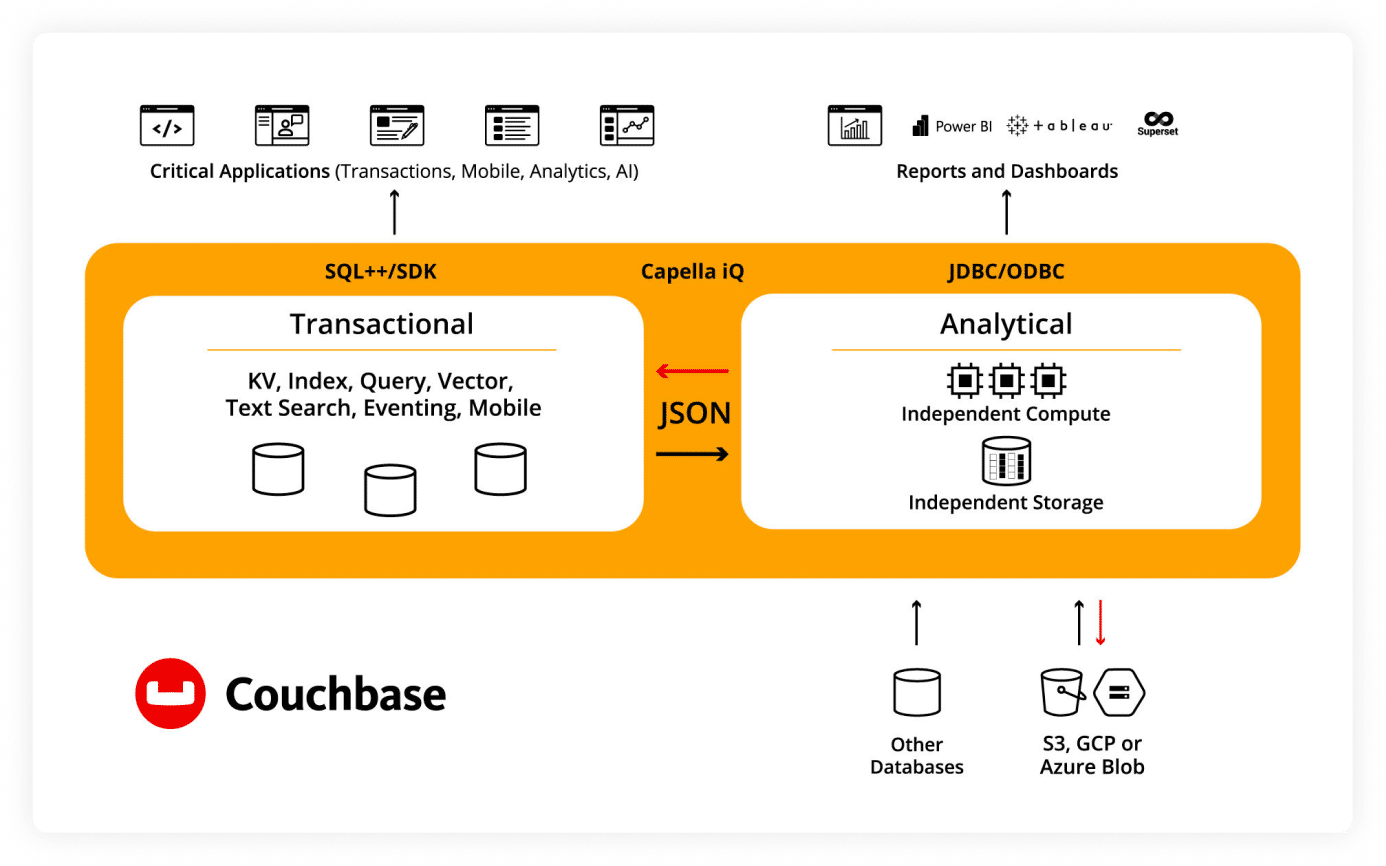

Modern applications rely on operational data to create next-generation experiences, and enriching that data with real-time analytics makes those experiences more personalized. Couchbase closes the gap between data and insight by converging operational data and real-time analytics in one platform, so teams can build critical applications that drive real-time experiences, insights, and actions.

Seamlessly perform real-time analysis on JSON data.

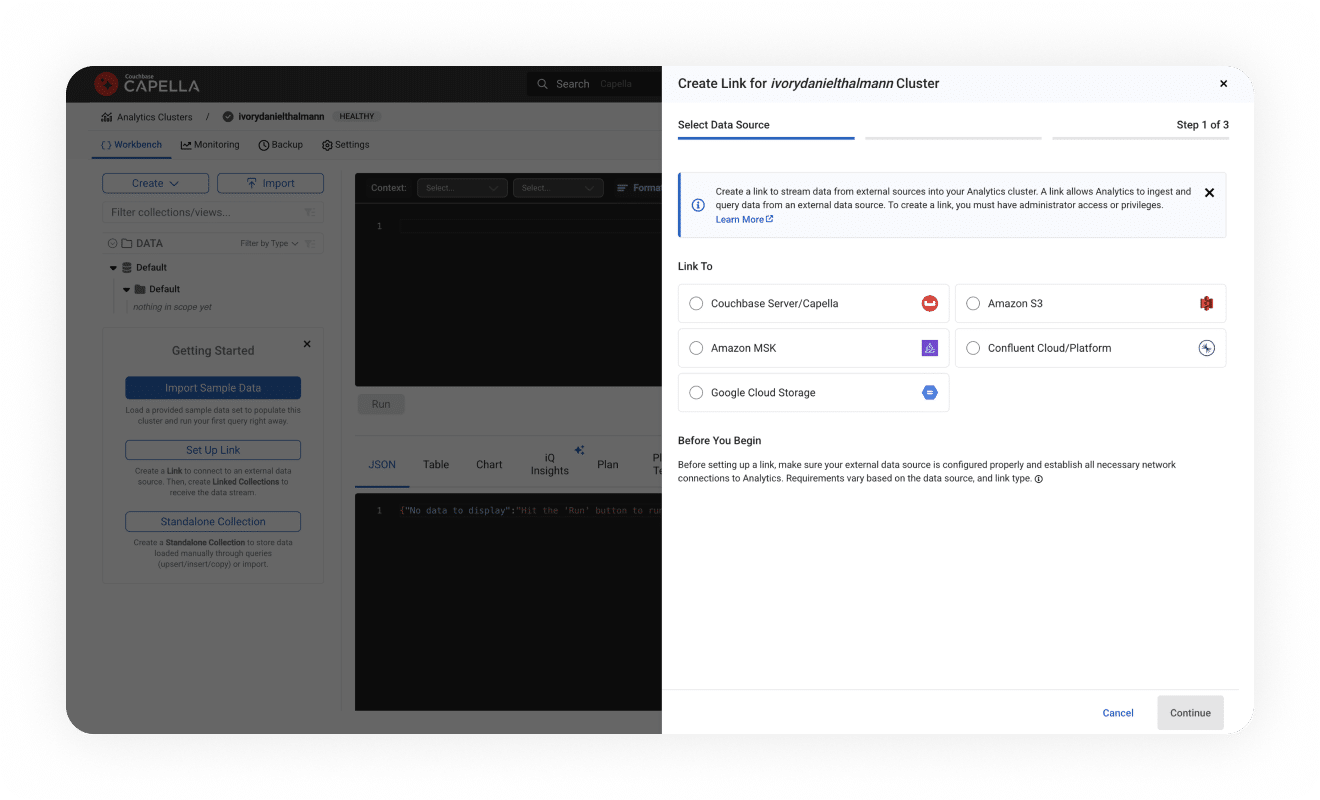

Combine data from multiple databases and flat files for broader analysis.

Perform ad hoc analysis faster, without overwhelming the BI team.

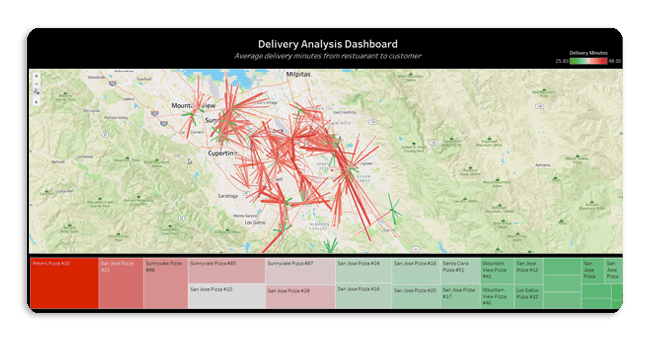

Enhance user experience via derived data and smarter AI responses.

Zero ETL means teams can make informed decisions faster, reacting swiftly to application changes and reducing risk. SQL++-based JSON querying addresses the challenges teams face when running analytics in relational database management systems.

Developers can perform ad hoc analysis faster, without defining a schema, and can query conversationally with Capella iQ.

Use real-time metrics to drive action, improve applications, and enhance user experiences.

Couchbase is the only JSON-native data platform for both operational and real-time analytics services. Build robust apps faster while saving time and reducing costs.