Whether you’re new to Couchbase or a seasoned vet, you’ve likely heard about Scopes and Collections. If you’re ready to try them out for yourself, this article helps you make it happen.

Scopes and Collections are a new feature introduced in the Couchbase Server 7.0 release that allows you to logically organize data within Couchbase. To learn more, read the following introduction to Scopes and Collections.

You should take advantage of Scopes and Collections if you want to map your legacy RDBMS to a document database or if you’re trying to consolidate hundreds of microservices and/or tenants into a single Couchbase cluster (resulting in much lower TCO).

In this article, I’ll go over how you can plan your migration from an older Couchbase version to using Scopes and Collections in Couchbase 7.0.

High-Level Migration Steps

The following are the high level steps of migrating to Scopes and Collections in Couchbase 7.0.

Not all steps are essential: it all depends on your use case and particular technology requirements. I’ll walk you through the details of each of these steps in subsequent sections.

- Upgrade to Couchbase Server 7.0

- Plan your Scopes and Collections strategy: Determine what Buckets, Scopes, Collections and indexes you need. Determine the mapping from old Bucket(s) to new Bucket(s)/Scope(s)/Collections. Write scripts to create Scopes, Collections and indexes.

- Migrate your application code: This application code is your Couchbase SDK code including N1QL queries.

- Data migration: Determine if an offline strategy works for your deployment or if you need an online migration. Take action accordingly.

- Plan and implement your database security strategy: Determine what Users and Role assignments you need. Create scripts to manage these assignments.

- Go live with your new Collections-aware application

- Setup XDCR and setup Backup for your Couchbase database

Upgrade to Couchbase 7

Here’s what you need to know about upgrading to Couchbase Server 7.0:

-

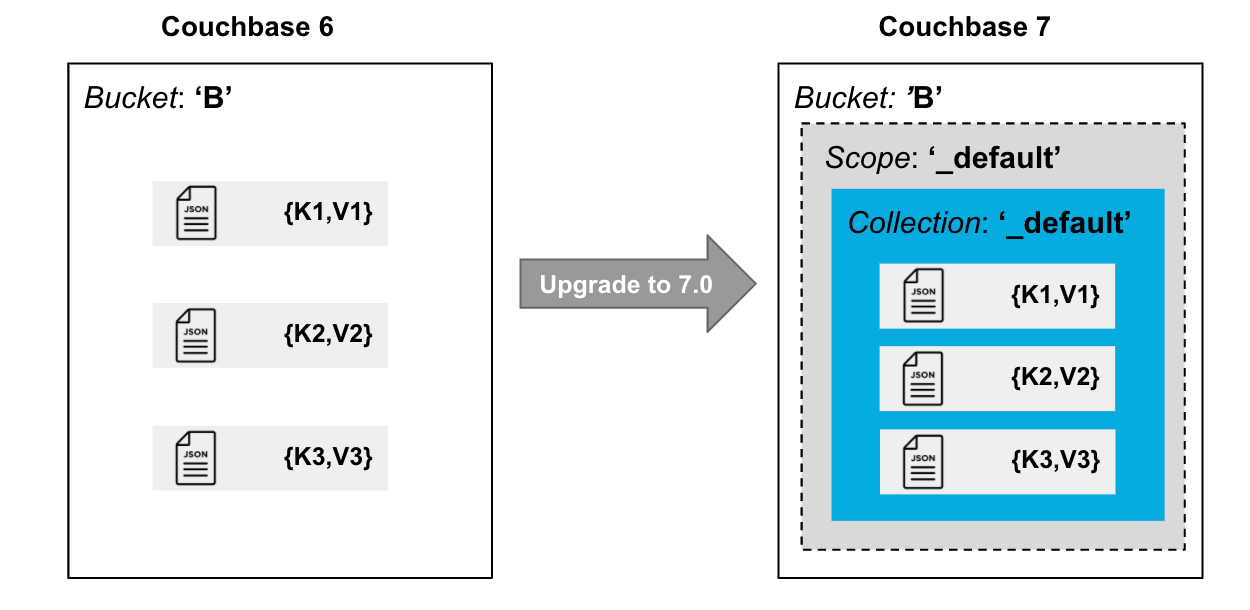

- Every Bucket in 7.0+ has a

_defaultScope with a_defaultCollection in it. - Upgrading to Couchbase 7.0 moves all data in the Bucket to the

_defaultCollection of the Bucket. - There is no impact to existing applications. For example, an SDK 2.7 reference to Bucket

Bautomatically resolves toB._default._default(referencing the_defaultScope and Collection, respectively).

- Every Bucket in 7.0+ has a

The diagram below illustrates how data is organized in Buckets, Scopes and Collections after migrating data from Couchbase 6 to Couchbase 7.

If you do not wish to use named Scopes and Collections, stop right here.

But if you’re ready to use this new data organization feature, read on.

Plan Your Scopes and Collections Strategy

Below are the most common database migration scenarios that I’ve come across. Your migration scenario might be different and mileage may vary.

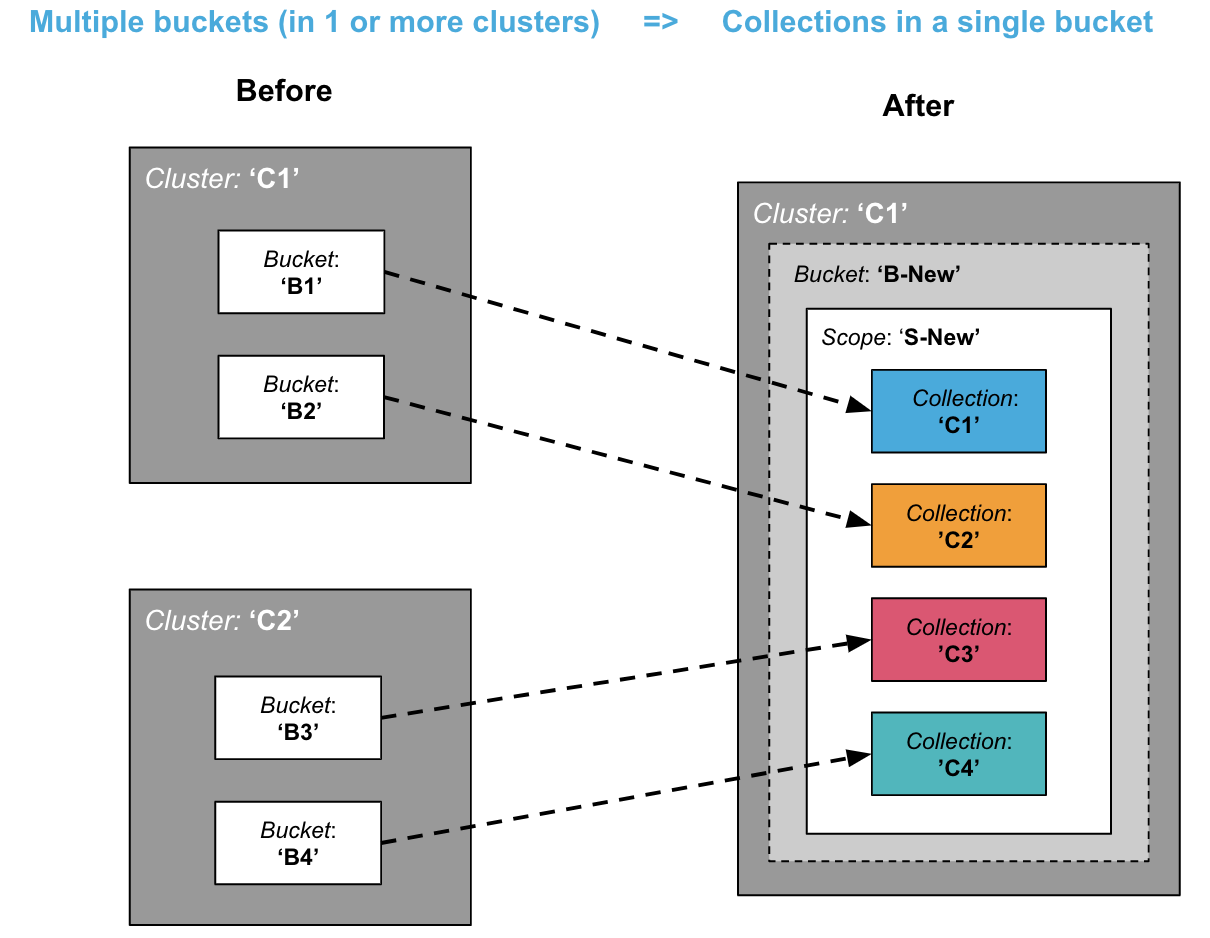

Consolidation: From Multiple Buckets to Collections in a Single Bucket

One common scenario is when you’re trying to lower your total cost of ownership (TCO) by consolidating multiple Buckets into a single Bucket.

A cluster can only have up to 30 Buckets, whereas you can have 1000 Collections per cluster, allowing for much higher density. This is a common scenario for microservices consolidation.

The diagram above shows all target Collections belonging to the same Scope. But you could just as well have the target Collections belong in different Scopes.

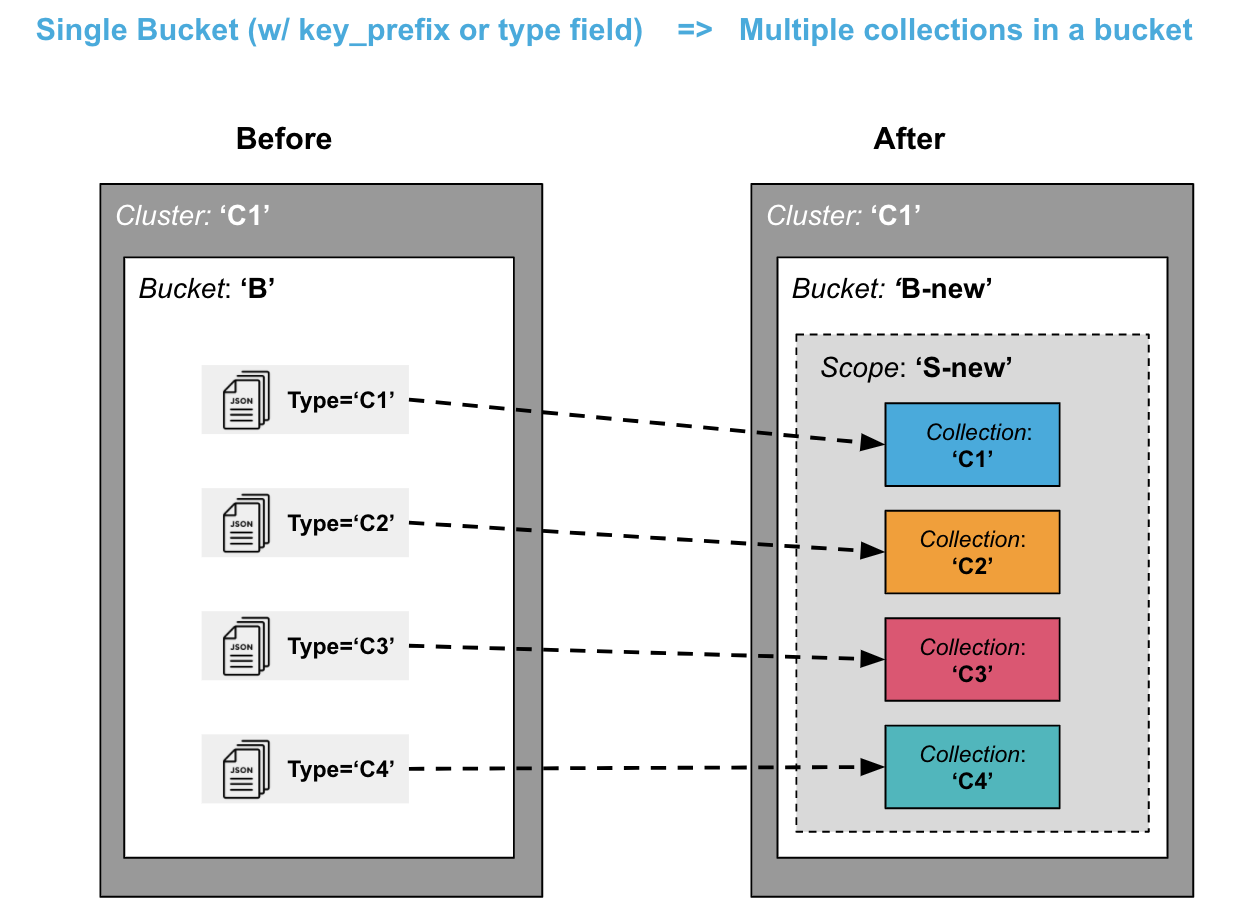

Splitting: From Single Bucket to Multiple Collections in a Bucket

Another common scenario is to migrate the data that resides within a single Bucket and split it out into multiple Collections (within the same Bucket).

You might have previously qualified different types of data with a type = foo field or with a key prefix like foo_key. Now these data types can each live in their own Collection, giving you the advantages of logical isolation, security isolation, replication and access control.

This scenario may be a little more complex than the previous “consolidation” scenario, especially if you want to get rid of the key prefix or type field. For a simpler migration, you may want to leave the key prefixes and type data fields as is, even though they may be somewhat redundant with Collections.

Creation of Scopes, Collections and Indexes

Once you’ve planned what Scopes, Collections and indexes you want to have, you need to create scripts for creation of these entities. You can use the Couchbase SDK of your choice, the couchbase-cli, the REST APIs directly, or even N1QL scripts to do so.

Below is an example of using the CLI (couchbase-cli and cbq shell) to create a Scope, a Collection and an index.

|

1 2 3 4 5 6 7 8 |

// create a Scope called 'myscope' using couchbase-cli ./couchbase-cli collection-manage -c localhost -u Administrator -p password --bucket testBucket --create-scope myscope // create a Collection called mycollection in myscope ./couchbase-cli collection-manage -c localhost -u Administrator -p password --bucket testBucket --create-collection myscope.mycollection // create an index on mycollection using cbq ./cbq --engine=localhost:8093 -u Administrator -p password --script="create index myidx1 on testBucket.myscope.mycollection(field1,field2);" |

Note that the index creation statement does not require you to qualify the data with a type = foo or key-prefix qualification clause anymore.

Migrate Your Application Code

In order to use named Scopes and Collections, your application code (including N1QL queries) needs to be migrated.

If you were using type fields or key prefixes previously (as in the splitting scenario), you will not need them anymore.

SDK Code Sample

In your SDK code, you have to connect to a cluster, open a Bucket and obtain a reference to a Collection object to store and retrieve documents. Prior to Collections, all key-value operations were performed directly on the Bucket.

Note: If you have migrated to Couchbase SDK 3.0, you have already done some of the work of starting to use Collections (though until now you could only use the default Collection).

The following is a simple Java SDK code snippet for storing and retrieving a document to a Collection:

|

1 2 3 4 5 6 7 8 9 10 |

Cluster cluster = Cluster.connect("127.0.0.1", "Administrator", "password"); Bucket bucket = cluster.bucket("bucket-name"); Scope scope = bucket.scope("scope-name"); Collection collection = scope.collection("collection-name"); JsonObject content = JsonObject.create().put("author", "mike"); MutationResult result = collection.upsert("document-key", content); GetResult getResult = collection.get("document-key"); |

N1QL Queries

Now if you want to run a N1QL query on the Collection in the above Java example, do the following:

|

1 2 3 |

//run a N1QL using the context of the Scope scope.query("select * from collection-name"); |

Notice that you can query directly on a Scope. The above query on the Scope object automatically maps to select * from bucket-name.scope-name.collection-name.

Another way to provide path context to N1QL is to set it on QueryOptions. For example:

|

1 2 |

QueryOptions qo = QueryOptions.queryOptions().raw("query_context", "bucket-name.scope-name"); cluster.query("select * from collection-name", qo); |

A Scope may have multiple Collections, and you can join those directly by referencing the Collection name within the Scope. If you need to query across Scopes (or across Buckets), then it’s better to use the cluster object to query.

Note that the N1QL queries no longer need to qualify a type = foo field (or key_prefix qualifier), if applicable.

For example, this old N1QL query…

|

1 2 3 4 5 6 |

SELECT r.destinationairport FROM Travel a JOIN Travel r ON a.faa = r.sourceairport AND r.type = "route" WHERE a.city = "Toulouse" AND a.type = "airport"; |

…now becomes:

|

1 2 3 4 |

SELECT r.destinationairport FROM Airport a JOIN Route r ON a.faa = r.sourceairport WHERE a.city = "Toulouse"; |

Data Migration to Collections

Next, you need to migrate existing data to your new named Scopes and Collections.

The first thing you have to determine is whether you can afford to do an offline migration (where your application is offline for a few hours), or if you need to do a mostly online migration with minimal application downtime.

An offline migration could be faster overall and require fewer extra resources in terms of extra disk space or nodes.

Offline Migration

If you choose to do offline migration, you can use N1QL or Backup/Restore. We’ll take a closer look at both options.

Using N1QL for an Offline Migration

Prerequisite: Your cluster must have spare disk space and be using the Query Service.

Following this approach, your migration would look something like the following:

- Create new Scopes, Collections and indexes.

- Take old application offline.

- For each named Collection:

- Insert-Select from

_defaultCollection to named Collection (using appropriate filters). - Delete data from

_defaultCollection that was migrated in above step (to save space; or if space is not an issue, you can do this at the end).

- Insert-Select from

- Verify your migrated data.

- Drop old Buckets.

- Online your new application.

Using Backup/Restore for an Offline Migration

Prerequisite: You need disk space to store backup files.

With this approach, your migration would look like this:

- Create new Scopes, Collections and indexes

- Take application offline

- Take backup (

cbbackupmgr) of 7.0 cluster - Restore using explicit mapping to named Collections. Use

--filter-keysand--map-data(see examples 1 and 2 below). - Online your new application.

Example 1: No Filtering during Restore

This example moves the entire _default Collection to a named Collection. (This is the likely case for the consolidation scenario).

|

1 2 3 4 5 6 7 8 |

// Backup the default Scope of a Bucket upgraded to 7.0 cbbackupmgr config -a backup -r test-01 --include-data beer-sample._default cbbackupmgr backup -a backup -r test-01 -c localhost -u Administrator -p password // Restore above backup to a named Collection cbbackupmgr restore -a backup -r test-01 -c localhost -u Administrator -p password --map-data beer-sample._default._default=beer-sample.beer-service.service_01 |

Example 2: Restore with Filtering

This example moves portions of _default Collection to different named Collections (This is the likely case for the splitting scenario).

|

1 2 3 4 5 6 7 8 9 10 |

// Backup the travel-sample Bucket from a cluster upgraded to 7.0 cbbackupmgr config -a backup -r test-02 --include-data travel-sample cbbackupmgr backup -a backup -r test-02 -c localhost -u Administrator -p password // Restore type=’airport’ documents to a Collection travel.booking.airport cbbackupmgr restore -a backup -r test-02 -c localhost -u Administrator -p password --map-data travel-sample._default._default=travel.booking.airport --auto-create-buckets --filter-values '"type":"airport"' // Restore key_prefix =’airport’ documents to a Collection travel.booking.airport cbbackupmgr restore -a backup -r test-02 -c localhost -u Administrator -p password --map-data travel-sample._default._default=travel.booking.airport --auto-create-buckets --filter-keys airport_* |

Online Migration Using XDCR

In order to do a mostly online migration, you need to use cross data center replication (XDCR).

Depending on your spare capacity in the existing cluster, you can do self-XDCR (where the source and destination Bucket are on the same cluster), or you can set up a separate cluster to replicate to.

Here are the steps you need to follow:

- Setup XDCR from yoursource cluster to your target cluster (you can do self-XDCR if you have spare disk space and compute resources on the original cluster).

- Create new Buckets, Scopes and Collections.

- Set up replications either directly from a Bucket to a

bucket.scope.collectionor using Migration Mode (details shown below) if a single Bucket’s default Collection has to be split into multiple Collections. - Explicit mapping rules are specifiable for each destination to specify subset of the data.

- Once replication destinations are caught up, offline your old application.

- Online your new application directing it to the new cluster (or new Bucket if using self-XDCR).

- Delete your old cluster (or your old Bucket if using self-XDCR).

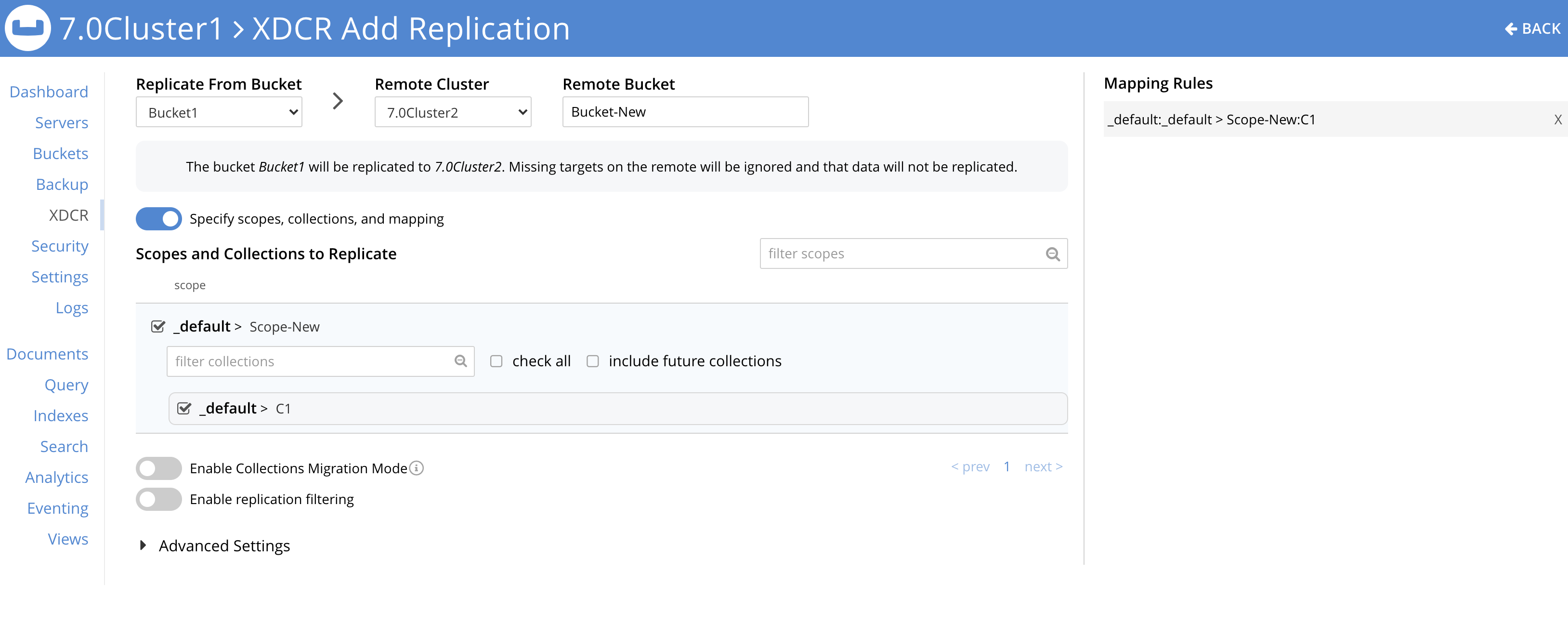

Using XDCR to Migrate from Multiple Buckets to a Single Bucket

These steps are for the consolidation scenario.

The XDCR set up will look something like the following:

-

- For each source Bucket, set up a replication to the named Collection in the destination Bucket and Scope

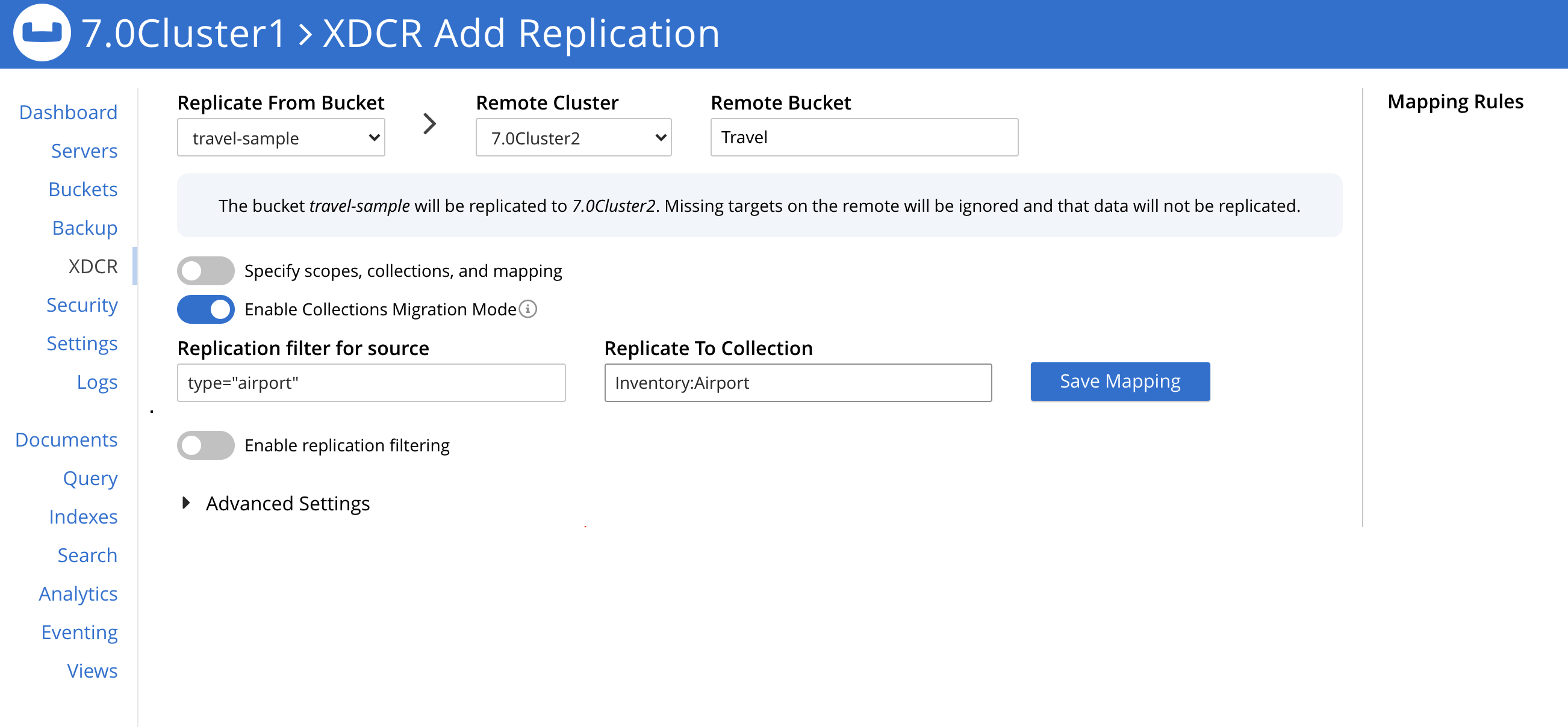

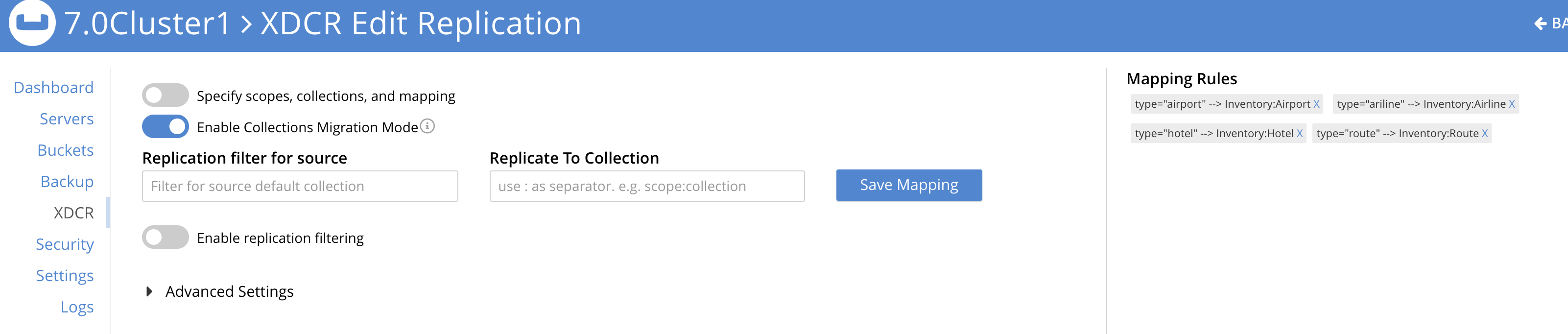

The following screenshot shows the XDCR set up for one source Bucket:

Using XDCR to Split to Multiple Collections from within a Single Bucket

These steps are for the splitting scenario.

In order to map the source _default Collection to multiple target Collections, you should use the Migration Mode provided by XDCR.

The XDCR screens below show Migration Mode being used:

There are four filters you need to set up: (Travel-sample._default._default is the source. A new Bucket called Travel is the target.)

-

- filter

type="airport", replicate toInventory:Airport - filter

type="airline", replicate toInventory:Airline - filter

type="hotel", replicate toInventory:Hotel - filter

type="route", replicate toInventory:Route

- filter

Plan & Implement Your Database Security Strategy

Now that you have all your data in named Scopes and Collections, you have finer control over what data you can assign privileges to. Previously you could do so only at Bucket level.

For more information on role-based access control (RBAC) security for Scopes and Collections, read this article: Introducing RBAC Security for Collections or consult the documentation on RBAC.

The following roles are available at Scope and Collection level in Couchbase 7.

Admin Roles:

-

- Scope Admin role is available at the Scope level. A Scope admin can administer Collections in their Scope.

Data Reader Roles:

-

- Data Reader

- Data Writer

- Data DCP Reader

- Data Monitoring

Query Roles:

-

- FTS Searcher

- Query Select

- Query Update

- Query Insert

- Query Delete

- Query Manage Index

- Query Manage Functions

- Query Execute Functions

Conclusion

I hope this guide helps you successfully migrate to Scopes and Collections in Couchbase 7.

For more information on the 7.0 release, check out the What’s New documentation or peruse the release notes.

What do you think of the new Scopes and Collections feature? I look forward to hearing your feedback on the Couchbase Forums.

Dig into Couchbase 7 today

@Shivani, Is it possible to configure the scope and collections to be sync’d in the sync gateway? I would like to restrict the documents sync’d only of a particular scope/collection?

Sync Gateway support will come a later. So with 7.0 you can also receive documents in the default collection using Sync Gateway.

Sorry typo above. I meant with 7.0 you can only receive documents in the default collection using Sync Gateway.

I planned to categorize a multi-tenant application using scope and collections. I would have liked sync gateway to allow syncing based on collections or scopes. When can we expect sync gateway support?

Couple of more questions

1. Can the same document be part of default collection and another custom collection?

2. Can multiple scope refer to default collection?

The timeframe for Sync Gateway support is TBD.

1) The ‘same’ document in two different collections (default and custom, or two different custom) is essentially two different documents. If you are asking whether the same document key can be used in two different collections, then the answer is Yes.

2) I don’t understand this question. The default collection only exists in the default scope. No other scope has a default collection. As a user you cannot create a default collection (it is created by Couchbase only).

@Shivani, is there any update about availability of scope&collection support in Sync Gateway?

As user @GaneshN, we are designing multi-tenant application using Couchbase server 7.0 and we need to implement client syncing based on that feature.

GaneshN post is almost 1 year old, I really hope in good news.