Today we’re pleased to announce significant improvements in Full-Text Search (FTS) in Couchbase Server 4.6. This blog describes what’s new for search in 4.6:

- Performance Improvements

- Index Type Mapping By Key

- Custom Sorting

Couchbase Server FTS runs seamlessly across your cluster and provides search capabilities similar to Elasticsearch. FTS “just works” distributed: you don’t have to do anything special to run multi-node search across your cluster, and you manage it the same way you have come to expect from Couchbase Server. For example, you can add hardware and rebalance, and Couchbase Server distributes indexes across the cluster so that the newly provisioned nodes start handling search workload. This is part of the goal: to make simple search simple, for both developers and admins.

Note that FTS is in developer preview and will remain in developer preview even after Couchbase Server 4.6 goes GA. We invite you to try it out and share your feedback.

https://www.couchbase.com/blog/2016/november/introducing-couchbase-server-4.6.0-developer-preview

Faster Search

You’ll notice that search is speedier and more efficient with resources in 4.6 compared to earlier releases. This release brings improvements throughout the system, both in FTS itself and in bleve, the full-text search and indexing Go library that powers FTS.

The biggest single contributor to performance improvements is MossStore, the new default KV store mechanism for full text indexes in FTS. MossStore is part of Moss (“Memory-oriented sorted segments”), a simple, fast, persistable, ordered key-value collection implemented as a pure Go library. Moss improves query performance, and especially indexing performance, by sorting index segments in memory before persisting them. MossStore has a key advantage over generic KV stores: its segments are always sorted, so it never has to re-sort them. MossStore is recommended for all use cases.
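To make the “sorted segments” idea more concrete, here is a toy sketch in Python. It is not Moss’s code or API (Moss is a Go library); the `SegmentStack` class and its methods are hypothetical names invented for this illustration. It only shows the general pattern: each batch is sorted once in memory, lookups binary-search the segments from newest to oldest, and a full scan merges the already-sorted segments without a global re-sort.

```python
import bisect
import heapq


class SegmentStack:
    """Toy stack of immutable, sorted (key, value) segments; newest is last."""

    def __init__(self):
        self.segments = []

    def add_batch(self, batch):
        # Sort each incoming batch once, in memory, before it joins the stack.
        self.segments.append(sorted(batch.items()))

    def get(self, key):
        # Newer segments shadow older ones, so search from the top down.
        for segment in reversed(self.segments):
            i = bisect.bisect_left(segment, (key,))
            if i < len(segment) and segment[i][0] == key:
                return segment[i][1]
        return None

    def scan(self):
        # Tag entries with the negated segment number so that, for equal
        # keys, the entry from the newest segment comes out of the merge first.
        tagged = (
            [(k, -n, v) for k, v in seg] for n, seg in enumerate(self.segments)
        )
        last = object()
        for k, _, v in heapq.merge(*tagged):
            if k != last:  # skip older, shadowed versions of the same key
                yield k, v
                last = k


stack = SegmentStack()
stack.add_batch({"b": 1, "a": 2})
stack.add_batch({"b": 3, "c": 4})
print(stack.get("b"))
print(list(stack.scan()))
```

Because every segment is already in key order, the scan is a lazy merge rather than a sort of the whole data set.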

Even more performance enhancements are on the way for the next release. Stay tuned!

Index Type Mapping by Key

You can now create custom index mappings using the document key to determine the type. Index mapping is the process of specifying the rules for making documents searchable. In full text search, developers usually specify different index mappings for different document types. For example, you might want to index the “city” field, but only for documents of type “hotel” and not for documents of type “landmark”. In previous releases, this only worked if type was set by an attribute in the document JSON; in 4.6 you can also use a portion of the document key to determine the document type, for example, the prefix up to the “::” for keys like “hotel::1234”.

With this enhancement, it’s easier to support the common data modeling style in which the document type is indicated by a portion of the key, for example, “user::will.gardella”. Generally speaking, this type identifier is going to be a prefix of the key, so FTS gives you an easy option to just specify the prefix without messing with regular expressions. On the other hand, you can do regular expressions if you have a more complex key design, like an infix.
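As a rough illustration of the two approaches, the following Python sketch derives a type name from a document key, first by prefix and then by regular expression. This is not how FTS is implemented; the function names are made up for this example, the key `2016::hotel::1234` is a hypothetical infix-style key, and the choice to return `None` when no type can be found is an assumption of the sketch.

```python
import re


def type_from_prefix(doc_key, delimiter):
    """Return the part of doc_key before the first delimiter, or None."""
    prefix, found, _ = doc_key.partition(delimiter)
    # No delimiter (or an empty prefix) means no type could be derived.
    return prefix if found and prefix else None


def type_from_regex(doc_key, pattern):
    """Return the first substring of doc_key matching pattern, or None."""
    match = re.search(pattern, doc_key)
    return match.group(0) if match else None


print(type_from_prefix("hotel::1234", "::"))
print(type_from_prefix("user::will.gardella", "::"))
# Hypothetical key where the type sits in the middle (an infix).
print(type_from_regex("2016::hotel::1234", r"hotel|landmark"))
```

The prefix form covers the common case with a single delimiter string, while the regular expression form is only needed when the type is not at the start of the key.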

You can try this out on the travel-sample bucket that ships with Couchbase Server. The travel-sample data model follows the belt and suspenders principle: the document type is indicated redundantly both in a JSON type attribute AND in the prefix of the document key.

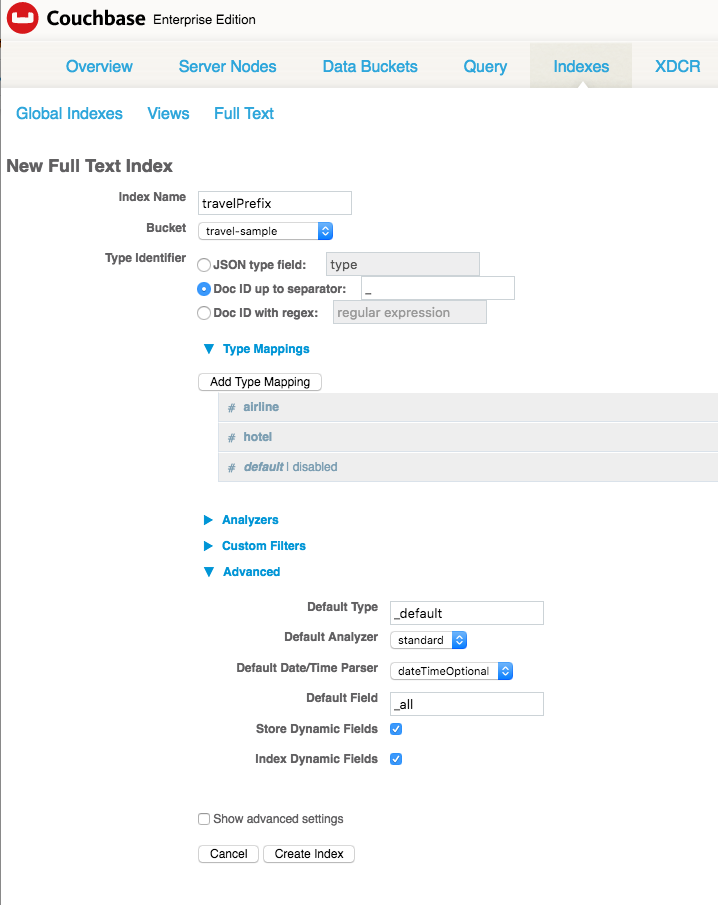

Here’s a step-by-step example, assuming you have the travel-sample bucket installed. Let’s create an index that will let our users search hotels and airlines. A bit of a strange thing to do, but let’s roll with it.

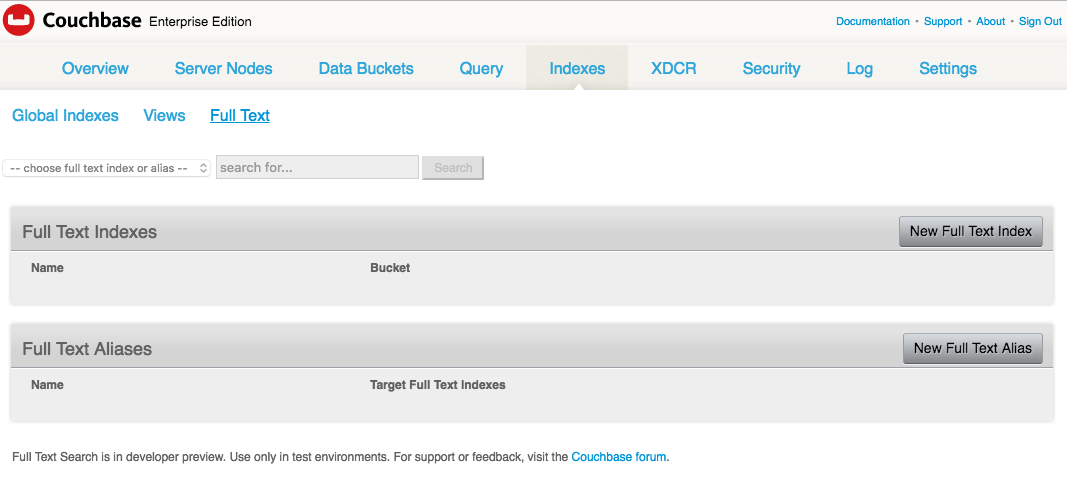

First, go to your web admin (e.g. http://localhost:8091/) > indexes > full text indexes > new full text index. This URL might take you there directly, depending on where you run your server: http://127.0.0.1:8091/ui/index.html#/fts_new/?indexType=fulltext-index&sourceType=couchbase.

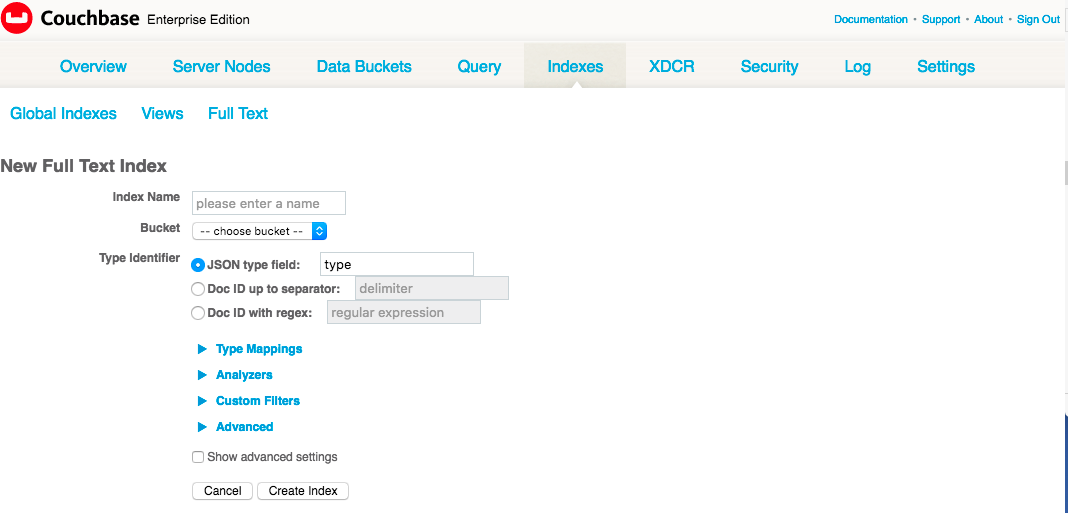

You’ll see a new “Type identifier” field. The old behavior is the default, namely, look at the document body for a field called “type” that indicates the document type. In this case, you’re going to use the id from the document metadata instead.

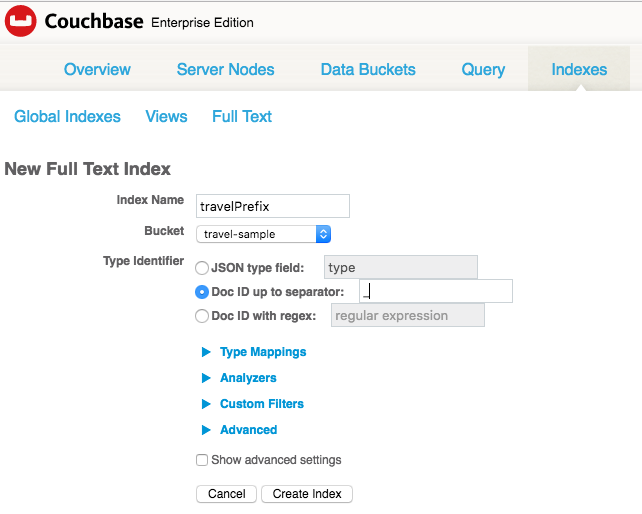

Click the checkbox next to “Doc ID up to separator” and enter an underscore ‘_’ as the separator. This instructs FTS to parse the document keys up to the underscore and compare those prefixes to whatever strings you enter into “Type mapping”. It’s important to note that this step only tells FTS where to look for the type of the document; it doesn’t declare any index mapping rules yet. That’s what we’re going to do next.

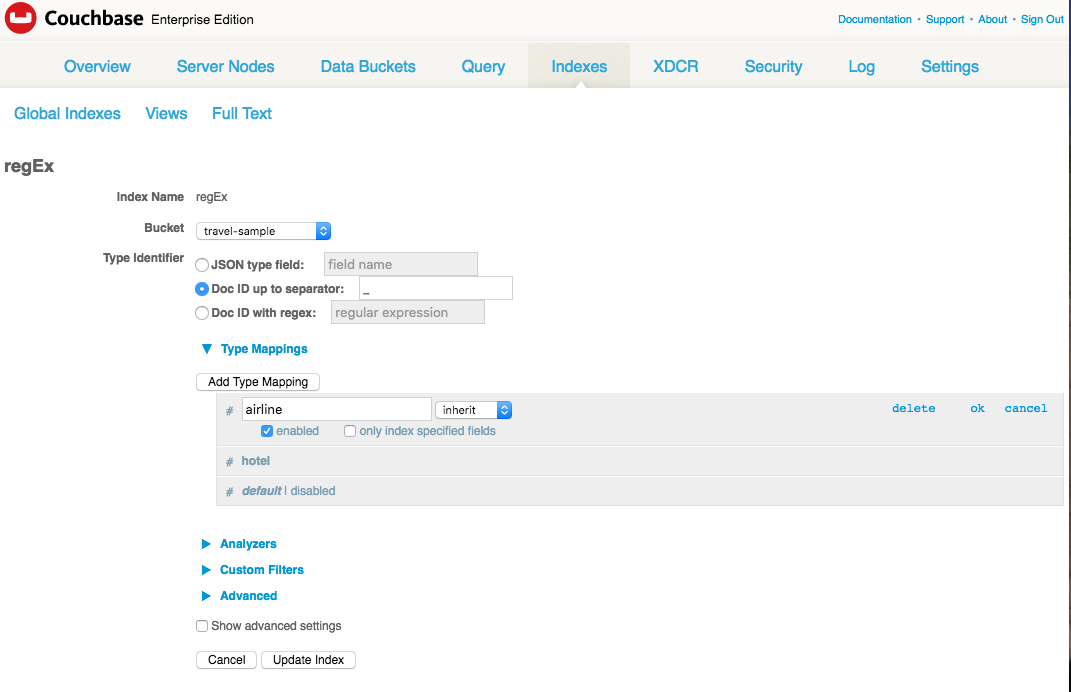

Click “Add Type Mapping”. Add one type “airline” and one type “hotel”. Don’t forget to disable the default mapping, otherwise you’re going to get everything in your index, not just airlines and hotels.

Finally, because this is just a test, unfold “Advanced” by clicking the triangle, and check “Store Dynamic Fields”. This will not only index every field of every airline and hotel document, it will also store what it indexes in the full text index so that it can be highlighted and retrieved as snippets. This makes for a nicer demo, but it fattens up your index and slows everything down accordingly. For this demo it shouldn’t make much difference.

When you’re all done, the index definition will look like this:

    {
      "type": "fulltext-index",
      "name": "travelPrefix",
      "sourceType": "couchbase",
      "sourceName": "travel-sample",
      "planParams": {
        "maxPartitionsPerPIndex": 32,
        "numReplicas": 0,
        "hierarchyRules": null,
        "nodePlanParams": null,
        "pindexWeights": null,
        "planFrozen": false
      },
      "params": {
        "doc_config": {
          "docid_prefix_delim": "_",
          "mode": "docid_prefix"
        },
        "mapping": {
          "default_analyzer": "standard",
          "default_datetime_parser": "dateTimeOptional",
          "default_field": "_all",
          "default_mapping": {
            "display_order": "2",
            "dynamic": true,
            "enabled": false
          },
          "default_type": "_default",
          "index_dynamic": true,
          "store_dynamic": true,
          "type_field": "type",
          "types": {
            "airline": {
              "display_order": "0",
              "dynamic": true,
              "enabled": true
            },
            "hotel": {
              "display_order": "1",
              "dynamic": true,
              "enabled": true
            }
          }
        },
        "store": {
          "kvStoreName": "mossStore"
        }
      },
      "sourceParams": {
        "clusterManagerBackoffFactor": 0,
        "clusterManagerSleepInitMS": 0,
        "clusterManagerSleepMaxMS": 2000,
        "dataManagerBackoffFactor": 0,
        "dataManagerSleepInitMS": 0,
        "dataManagerSleepMaxMS": 2000,
        "feedBufferAckThreshold": 0,
        "feedBufferSizeBytes": 0
      }
    }

Notice that your newly created JSON index definition has a field in the “params” object called “doc_config”, which is new in 4.6. This field is itself an object with two fields: “mode”, plus either “type_field” or “docid_prefix_delim”, depending on whether you’re mapping based on the type field or the Doc ID.
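If you prefer to script index creation instead of clicking through the UI, the “doc_config” object can be built in code and sent with the rest of the index definition. The Python sketch below is one way to do that under a few assumptions: the helper names are invented for this example, the FTS REST endpoint is assumed to be `PUT /api/index/{name}` on port 8094, the definition is trimmed to a handful of fields, and authentication is left out. It builds the HTTP request but does not send it.

```python
import json
import urllib.request


def doc_config(mode, value):
    """Build a doc_config object for the two modes shown in this post."""
    if mode == "type_field":
        return {"mode": mode, "type_field": value}
    if mode == "docid_prefix":
        return {"mode": mode, "docid_prefix_delim": value}
    raise ValueError("unsupported mode in this sketch: " + mode)


def create_index_request(base_url, definition):
    """Prepare (but do not send) a PUT request that creates the index."""
    url = base_url + "/api/index/" + definition["name"]
    return urllib.request.Request(
        url,
        data=json.dumps(definition).encode("utf-8"),
        method="PUT",
        headers={"Content-Type": "application/json"},
    )


# Trimmed-down definition; a real one carries the full mapping as well.
definition = {
    "type": "fulltext-index",
    "name": "travelPrefix",
    "sourceType": "couchbase",
    "sourceName": "travel-sample",
    "params": {"doc_config": doc_config("docid_prefix", "_")},
}
request = create_index_request("http://localhost:8094", definition)
print(request.get_method(), request.full_url)
```

Passing `request` to `urllib.request.urlopen` would submit it; in practice you would also add credentials for your cluster.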

Index Mapping by type field:

    "doc_config": {
      "mode": "type_field",
      "type_field": "type"
    }

Index Mapping by Doc ID (prefix up to):

    "doc_config": {
      "docid_prefix_delim": "_",
      "mode": "docid_prefix"
    }

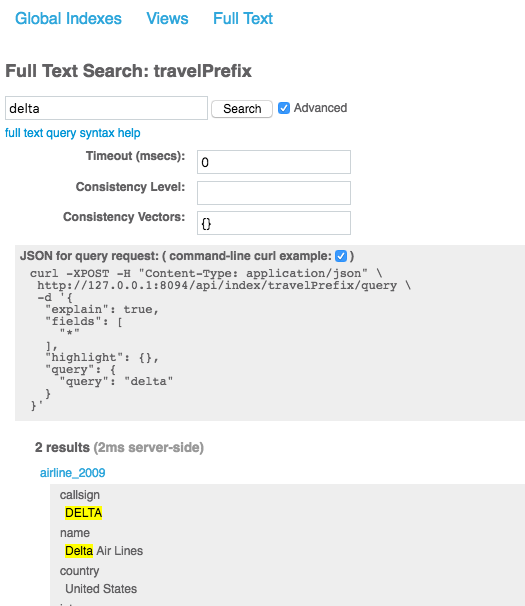

You can test out your shiny new index by performing a query string query for “delta”, which happens to match exactly one airline and one hotel. (I know, convenient, right?) Your query JSON will look like this if you use the REST API, or you can use the search box in the Web Admin to do the same search.

    {
      "explain": true,
      "fields": [
        "*"
      ],
      "highlight": {},
      "query": {
        "query": "delta"
      }
    }

Bonus tip:

You can get the query JSON from the web admin by clicking the “Advanced” option to the right of the search input field, and then checking the box for the command-line curl example.
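If you would rather issue the same query from a script than from curl, a minimal Python sketch could look like the following. It assumes the FTS query endpoint is `POST /api/index/{name}/query` on port 8094 and leaves out authentication; the `query_request` helper is a name invented for this example. The request is only prepared here, not sent.

```python
import json
import urllib.request


def query_request(base_url, index_name, body):
    """Prepare (but do not send) a POST request that queries an FTS index."""
    url = base_url + "/api/index/" + index_name + "/query"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={"Content-Type": "application/json"},
    )


# Same query string query as in the walkthrough.
body = {
    "explain": True,
    "fields": ["*"],
    "highlight": {},
    "query": {"query": "delta"},
}
request = query_request("http://localhost:8094", "travelPrefix", body)
print(request.get_method(), request.full_url)
```

Sending it with `urllib.request.urlopen(request)` and parsing the response body as JSON would give you the search results.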

Sorting

Couchbase Server 4.6 introduces the ability to sort search results by any field in the document, as long as that field is also indexed. In earlier releases, and by default in 4.6, search results are sorted by descending score so that the most relevant results are listed first.

To use a custom sort order, you pass a “sort” field with an array of strings in your query, where each string is the name of a field you want to sort on. Using the travelPrefix index we created in the earlier step, you can sort by name like this:

    {
      "explain": false,
      "fields": [
        "title"
      ],
      "highlight": {},
      "sort": ["name"],
      "query": {
        "query": "beautiful pool"
      }
    }

You can prefix any field name with the ‘-’ character, which causes that field to be sorted in descending order. So, to sort the previous example by name in descending order, the sort field would look like this:

    "sort": ["-name"]

If you pass an array of field names, results will first be sorted by the first field. Items with the same value for that field are then sorted by the next field, and so on.

You can use two special fields that work for all documents:

‘_id’ – refers to the document key

‘_score’ – refers to the relevance score computed by Bleve

Here’s another example. In this one, the documents are sorted by score, descending, and then by id if there’s a tie.

    "sort": ["-_score", "_id"]

Here is a more complex example that you could use to sort your hotel search results:

    "sort": ["country", "state", "city", "-_score"]

Results will first be sorted by country. If documents have the same value for country, they will be sorted by state, and then, if country and state are the same, matches will be sorted by city. Finally, if all other fields are equal, results will be sorted by score descending. In this example, you’ll get a list of results grouped according to geography and sorted by score at the city level.
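To see how a multi-field sort with the ‘-’ prefix plays out, here is a small Python sketch that applies the same rules to a list of hits on the client side. It is an illustration of the ordering semantics only, not of how FTS or bleve sort internally; the `sort_hits` function and the sample hits (ids, scores, and locations) are invented for this example, and every hit is assumed to have a value for each sort field.

```python
def sort_hits(hits, sort_spec):
    """Order hits by a list of field names; a leading '-' means descending."""
    ordered = list(hits)
    # Python's sort is stable, so sorting by the last field first and the
    # first field last yields the "first field, then ties by next" ordering.
    for spec in reversed(sort_spec):
        descending = spec.startswith("-")
        field = spec[1:] if descending else spec
        ordered.sort(key=lambda hit: hit[field], reverse=descending)
    return ordered


# Made-up hits, flattened into plain dicts for the sake of the example.
hits = [
    {"_id": "hotel_3", "_score": 0.4, "country": "France", "city": "Paris"},
    {"_id": "hotel_1", "_score": 0.9, "country": "France", "city": "Paris"},
    {"_id": "hotel_2", "_score": 0.7, "country": "France", "city": "Nice"},
    {"_id": "hotel_4", "_score": 0.2, "country": "Canada", "city": "Toronto"},
]
for hit in sort_hits(hits, ["country", "city", "-_score"]):
    print(hit["country"], hit["city"], hit["_score"], hit["_id"])
```

Within each country and city, the highest-scoring hit comes first, which is the “grouped by geography, ranked by relevance” effect described above.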

Feedback welcome

As always, we like to hear from you. Many thanks for all the input you’ve shared; the generosity of the community and early adopters has a big influence on the direction of the product. Happy searching!