What can a database do to help an oil and gas company lower rising prices at the pump? It turns out, a lot!

In this post, I explore some data-related factors of rapidly increasing energy costs and how Couchbase can help address them. With gas prices in the US cresting $6 per gallon at the time of this writing (costing me over $60 to fill my tiny hybrid), I became highly motivated to explore the topic in depth.

A recent Wall Street Journal article explained that the oil and gas industry has arguably bounced back from the crisis of 2020, which saw COVID-19-induced demand disruption and a supply glut drive down prices. With demand increasing, and the war in Ukraine threatening access to foreign supply, crude oil has jumped above $100 a barrel. But here in the US, oil production has remained below 12 million barrels a day for the past two years, 10% to 15% below pre-pandemic levels.

The article goes on to ask: “So why aren’t American oil and gas companies producing more barrels to help tamp down oil and gasoline prices during a global market shock when domestic inflation is rampant?” While the answer is complicated and multi-faceted, I’ll explain how an oil and gas company can dramatically increase production by changing how it manages its data.

Too much data, too little time

There’s a fascinating McKinsey & Co. study on “Why oil and gas companies must act on analytics”. While it was published a while ago, I think it is more relevant than ever. The authors explain that information gaps and an inability to take full advantage of data and analytics contribute to inefficiency and lower production in oil and gas companies.

The article cites the inherently complex nature of production and processing facilities as the most significant source of the performance gap. Consider an offshore oil platform with three or four control room operators, continuously fed data from thousands of sensors on downhole, subsea and topside equipment. Operators have to control a multitude of variables, each with different settings, and at the same time consider things like wave heights, temperature and humidity.

Conclusion: there is so much information coming so fast that it becomes a barrier to efficiency that companies cannot overcome with traditional SCADA systems, simulation tools, and training.

This inefficiency has a substantial impact. McKinsey benchmarks revealed that the typical offshore platform runs at only about 77% of its maximum production potential because of a lack of insight into things such as equipment conditions and their likelihood of failure. Industry-wide, this shortfall adds up to 10 million barrels per day; imagine what that extra production might do to bring down prices at the pump!

Extend processing to the edge

So, how should an oil and gas company address the shortfall? They should start with data—something every energy company has plenty of. By leveraging data and analytics, including predictive analytics and machine learning, platform operators can better monitor the parameters critical for production and gain insight into likely future conditions or failures, allowing them to perform more quickly and effectively, increasing overall efficiency.
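To make the idea of monitoring critical parameters a little more concrete, here is a minimal sketch in Python. It is not predictive analytics or machine learning in any real sense, only a much simpler stand-in: it flags a sensor reading that deviates sharply from the readings just before it. The function name `flag_anomalies`, the window size, the threshold and the sample vibration values are all my own assumptions for illustration.

```python
from collections import deque
from statistics import mean, stdev


def flag_anomalies(values, window=5, threshold=3.0):
    """Return indices of readings that sit more than `threshold` standard
    deviations away from the mean of the preceding `window` readings."""
    history = deque(maxlen=window)  # most recent readings only
    flagged = []
    for index, value in enumerate(values):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            # Skip perfectly flat history to avoid flagging on zero spread.
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                flagged.append(index)
        history.append(value)
    return flagged


# Hypothetical vibration readings from a single pump sensor.
vibration = [1.0, 1.1, 0.9, 1.0, 1.1, 1.0, 5.0, 1.0]
anomalies = flag_anomalies(vibration)
```

A production system would use far richer models, but even a simple check like this only helps if it runs close enough to the sensor to act on the result in time.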

Access to real-time data and advanced analytics can bridge performance gaps and have a substantial positive impact on operations, contributing to higher production. But the notion of leveraging data to improve efficiency presents challenges, not the least of which is data volume.

Oil facilities generate tremendous amounts of data, and that data needs to be captured, stored and analyzed at the source to truly optimize operations. But with so much data, and the need for immediate answers, how do you do that? Some turn to the cloud, which has the storage and processing power to handle lots of data and churn out predictions. But when you operate in remote locales like the North Sea, or in zero-internet environments like underground, you can’t rely on the cloud for split-second, mission-critical decisions, especially when massive amounts of data need to be processed.

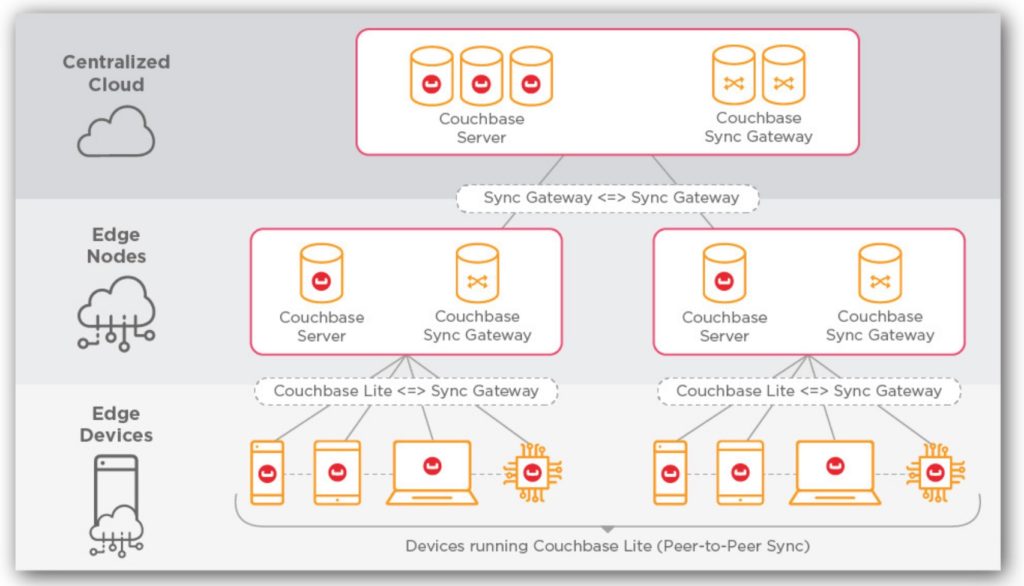

Edge computing is the answer. Edge computing is an architectural approach that puts the data center on-premises—literally on the drilling platform, in the oil field, on the vessel, or wherever the edge happens to be, even directly on personnel’s handheld devices. This brings processing closer to where the data is produced, speeding up applications and making them more reliable by reducing dependencies on the internet.

Edge computing is not about eliminating the cloud, which still serves as the ultimate central data center; it’s about extending the processing of data to the edge through a distributed architecture.

A Forbes article on edge computing in oil and gas made the point that there are four key benefits of edge computing for oil and gas operations—better privacy, reduced bandwidth, lower latency and higher guarantees of reliability.

Edge computing ensures that private data stays private: because it is processed locally, sensitive data never has to leave the edge. Local processing also reduces bandwidth costs because less data is being pushed to the cloud. Further, latency for applications is dramatically reduced by processing and analyzing data locally. And finally, edge computing brings better reliability for applications because they are able to operate even without internet connectivity.

Edge-ready database

The concept of edge computing is simple: process data closer to where it is produced. This makes apps speedier and more available, but your database choice is critical for success.

In a distributed cloud-to-edge architecture, all layers need a consistent understanding of captured data. At the same time, any layer should be able to run as a self-contained partition if connectivity is lost. As such, the database must be able to distribute its storage and processing from the cloud to the edge. Additionally, the database must instantly replicate and synchronize data across database instances, whether in the cloud, in an edge data center or on a device.
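Here is a rough Python sketch of what "run as a self-contained partition" can look like in practice. It is not how any particular database implements it: `EdgeStore`, its methods and the plain dict standing in for the cloud are all hypothetical names I made up for illustration. The point is only that reads and writes always succeed locally, and changes are pushed upstream once connectivity returns.

```python
class EdgeStore:
    """Hypothetical local-first store: writes land locally and are
    queued until a connection to the cloud is available."""

    def __init__(self):
        self.local = {}    # documents held at the edge
        self.pending = []  # keys changed since the last successful sync

    def write(self, key, doc):
        self.local[key] = doc
        if key not in self.pending:
            self.pending.append(key)

    def read(self, key):
        # Reads never depend on the network.
        return self.local.get(key)

    def sync(self, cloud, online):
        """Push pending documents to `cloud` (a dict standing in for a
        remote database). Returns the number of documents pushed."""
        if not online:
            return 0
        pushed = 0
        while self.pending:
            key = self.pending.pop(0)
            cloud[key] = self.local[key]
            pushed += 1
        return pushed


cloud = {}
edge = EdgeStore()
edge.write("well-12", {"pressure_psi": 2150})
offline_pushed = edge.sync(cloud, online=False)  # no connectivity: nothing leaves the edge
online_pushed = edge.sync(cloud, online=True)    # connectivity restored: queue drains
```

This sketch only pushes in one direction and ignores conflicts. A real edge-ready database also has to handle changes flowing down from the cloud and resolve conflicting edits, which is exactly why this capability belongs in the database rather than in application code.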

Furthermore, database synchronization must be controllable to provide an optimal data flow throughout the edge architecture. For example, high-velocity data captured from sensors can be processed and analyzed on the oil platform, but (for network bandwidth efficiency) only aggregated data is synchronized to the cloud when connectivity permits.
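As a minimal sketch of that example, assuming raw readings arrive as `(sensor_id, timestamp, value)` tuples, the Python below rolls high-velocity readings up into per-sensor, per-window summaries. The function name `aggregate_readings`, the 60-second window and the sample data are hypothetical. Only the summaries would be marked for synchronization to the cloud, while the raw readings stay on the platform.

```python
from collections import defaultdict
from statistics import mean


def aggregate_readings(readings, window_seconds=60):
    """Group raw (sensor_id, timestamp, value) readings into one summary
    document per sensor per time window."""
    buckets = defaultdict(list)
    for sensor_id, timestamp, value in readings:
        # Align each reading to the start of its time window.
        window_start = int(timestamp // window_seconds) * window_seconds
        buckets[(sensor_id, window_start)].append(value)
    return [
        {
            "sensor_id": sensor_id,
            "window_start": window_start,
            "count": len(values),
            "min": min(values),
            "max": max(values),
            "mean": mean(values),
        }
        for (sensor_id, window_start), values in sorted(buckets.items())
    ]


# Hypothetical raw readings captured on the platform.
raw = [
    ("pump-1", 0, 10.0),
    ("pump-1", 30, 14.0),
    ("pump-1", 61, 20.0),
    ("valve-7", 5, 3.0),
]
summaries = aggregate_readings(raw)  # only these would be synced upstream
```

Four raw readings become three summary documents here. With thousands of sensors reporting many times per second, the same approach cuts the volume sent over a constrained link far more dramatically.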

Lastly, the database must be embeddable, allowing it to run directly on edge devices for sub-millisecond responsiveness and zero downtime.

Oil and gas companies looking to adopt edge computing should only consider using an edge-ready database that can provide these critical capabilities.

More efficiency = higher production

Edge computing allows applications in oil and gas facilities to run reliably and fast even when the internet is slow, intermittent or completely unavailable. This combination of speed and reliability allows handling more data more quickly, driving real-time analysis that helps oil and gas operations run more efficiently, which is the key to higher production.

Because more production can lead to lower prices at the pump, I can hardly wait to experience these impacts myself!

Resources

Here are the articles referenced in this post:

- Why U.S. Oil and Gas Producers Aren’t Solving the Energy Crisis (WSJ)

- Why oil and gas companies must act on analytics (McKinsey)

- Moving To The Edge Is Crucial For Oil And Gas Companies To Make Better Use Of Data (Forbes)

Want to learn more about how Couchbase addresses each of these issues? Here are some online resources to read and explore: